Data is not numbers on a screen or files in a server room. For people, it has always meant the raw inputs of life — harvests tallied, rumors gathered, stars charted, neighbors counted. Driven by the need to impose order on the world, humans turned perception into concepts that both described and reshaped reality. What mattered were the tools and rituals that turned fragments into patterns, and patterns into action.

Civilization itself can be read as a system of data processing: cities grew from managed grain, cultures from stored knowledge, empires from censuses, democracies from tallies, industries from punch cards. Seen this way, computing isn’t a rupture but a continuation — the latest expression of our urge to turn information into judgment, structure, and power.

Each machine and theory marks a stage in this history, a crystallized moment of cultural logic that made the modern world possible.

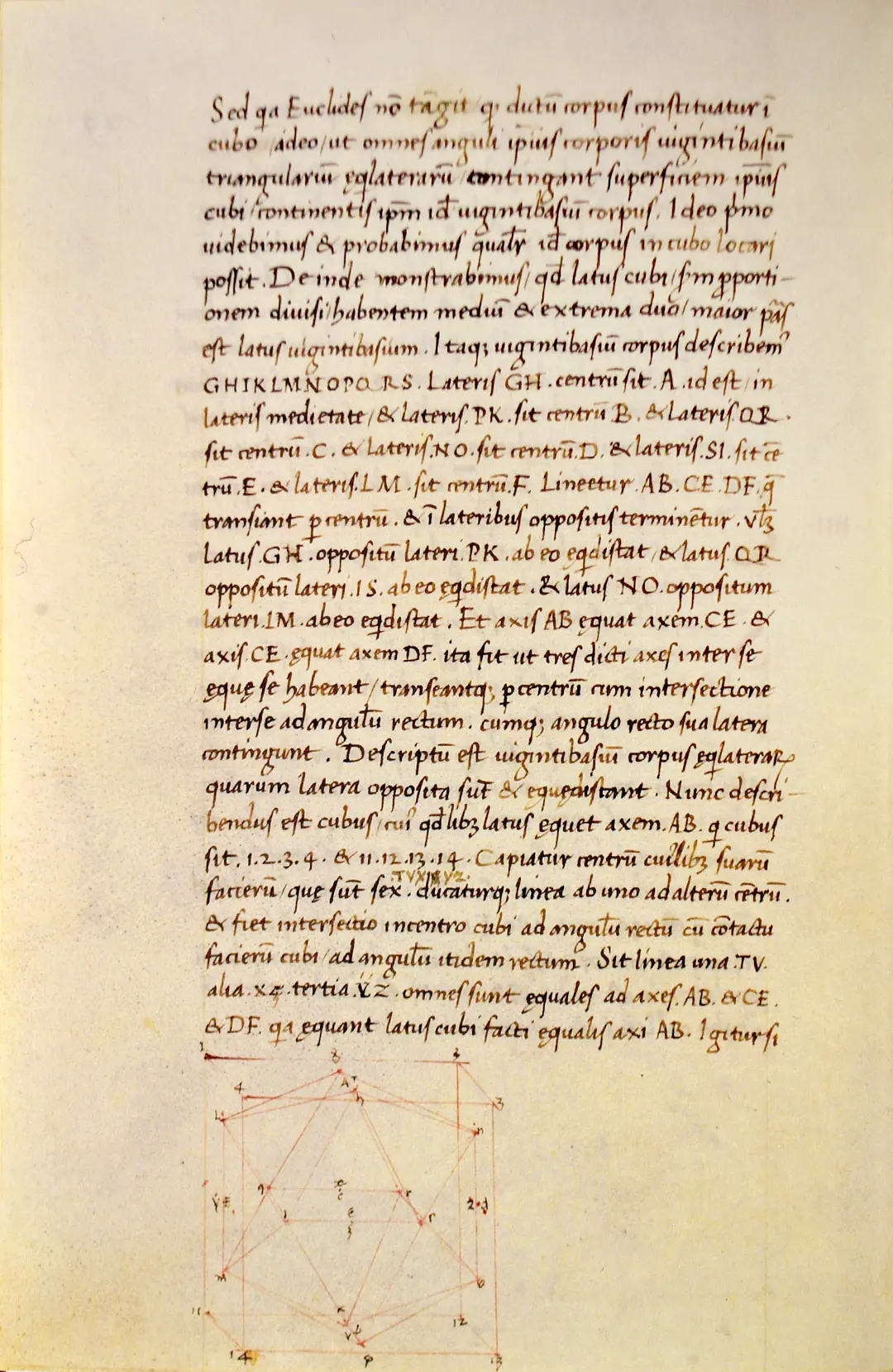

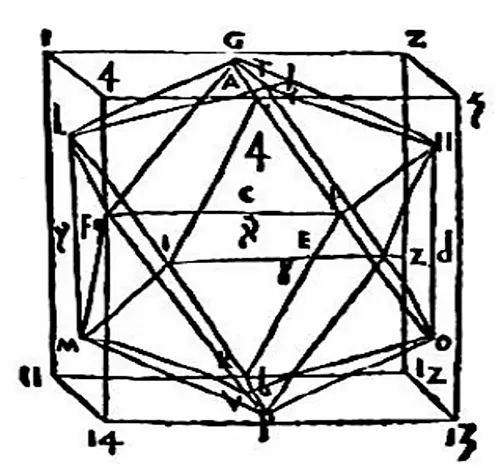

The Pre-History of Programmable Hardware

Many processes we now consider to be data processing also exist in nature. The ability to sense, measure, and store information is found in many life forms, allowing them to detect food, danger, and other external stimuli.

Humans started counting tens of thousands of years ago. Several notched bones, dated to 30,000-40,000 BCE, evidence this practice. These tools are thought to have helped our ancestors count things like lunar cycles. The ability to count repetitive events and the attempt to simplify this process were key factors in building and advancing society, as they formed a foundation for trade, navigation, resource management, and communication between individuals and groups.

Whether through piles of sticks, knots on threads, stones on wires, cogs and levers, punched cards, vacuum tubes and circuits, or disks and clouds, humankind has always been in search of ways to extend its capacity for storing and processing information. This search has one primary approach: automation, which involves deconstructing a process and outsourcing its elements to external tools.

A01_AUTOMATA_CONTROL

Early Automation — Temple Miracles [Tower Clocks] and Music Boxes

Before punch cards and programmable computation, humans engineered astonishing mechanical systems to represent, control, and display data. These devices served as precursors to modern information technologies, blending automation with symbolic function.

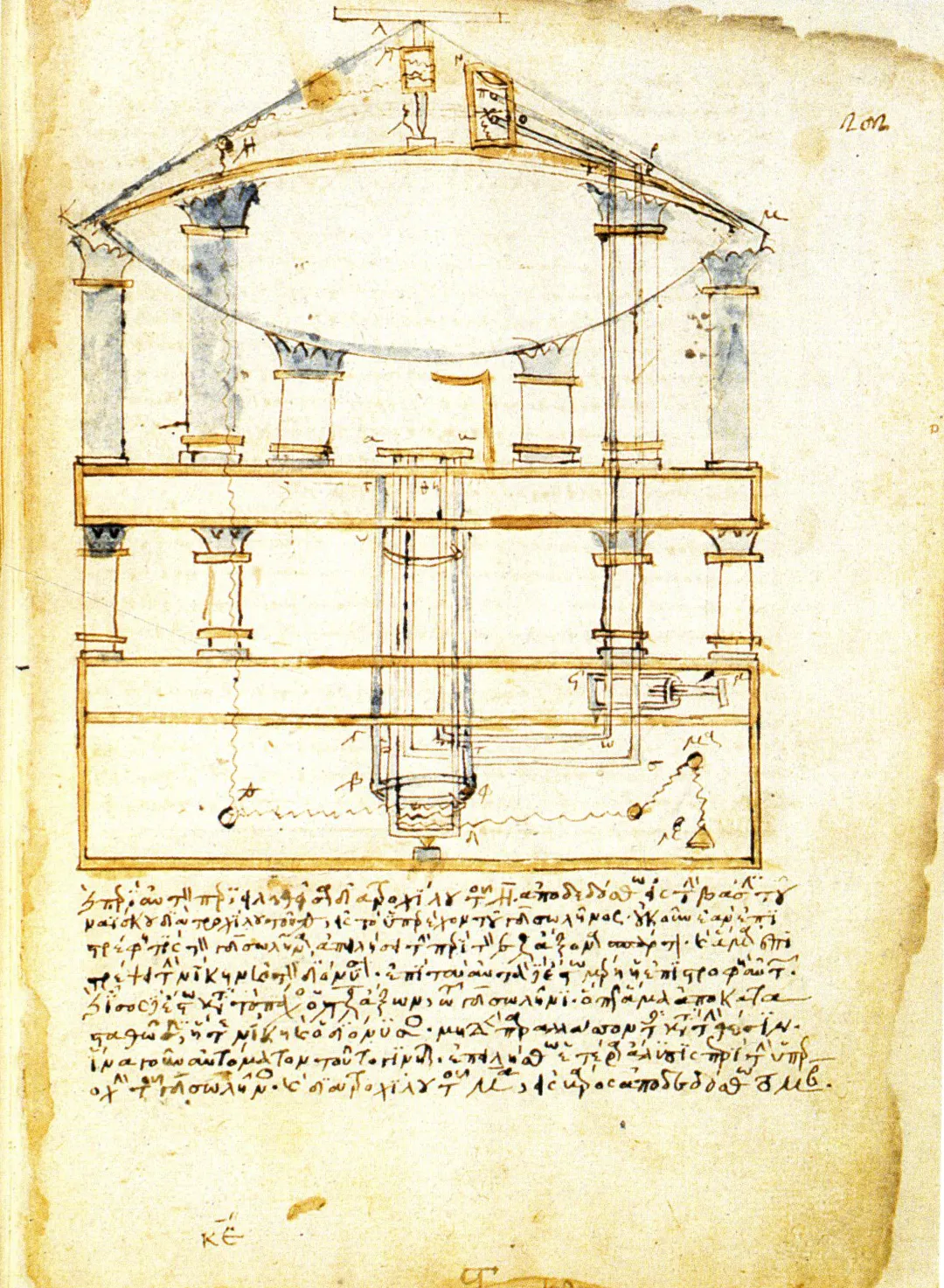

One of the earliest documented examples is Heron of Alexandria’s 1st-century CE automata: programmable theater devices, coin-operated dispensers, and wind-powered machines. These relied on systems of ropes, gears, and counterweights — physical logic encoded in motion. They demonstrated that information could be embedded in a mechanism, with repeatable outputs triggered by controlled inputs.

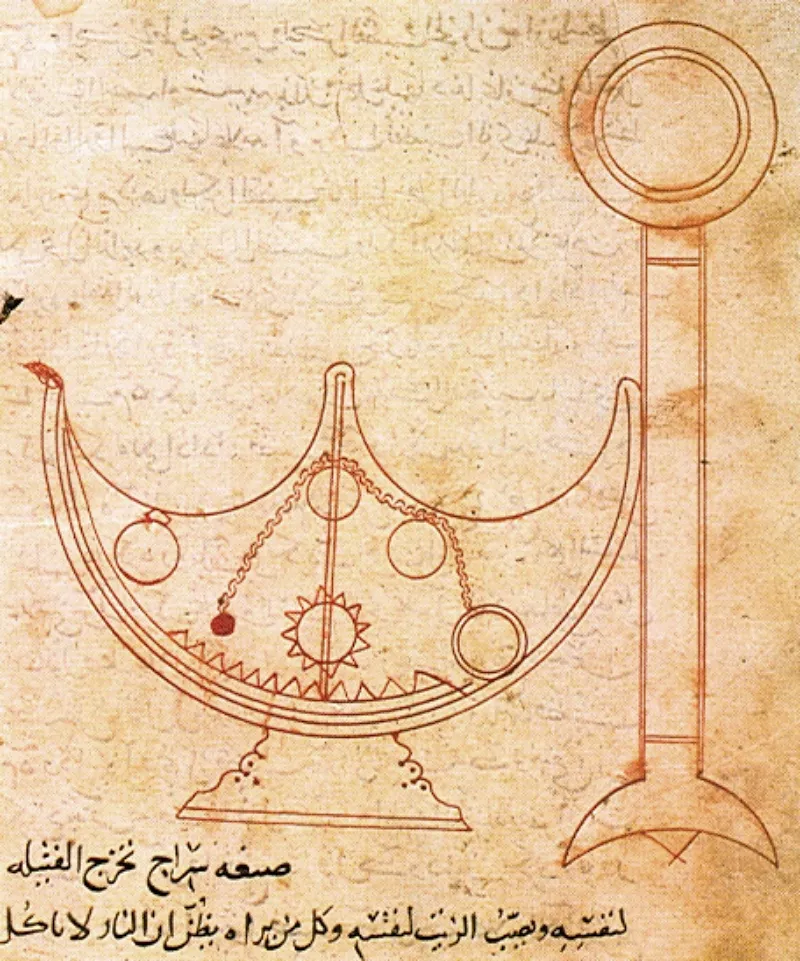

By the late Middle Ages and early modern period, public clocks and automata took on increasingly representational functions. The Strasbourg astronomical clock, for instance, combined religious calendar tracking with celestial mechanics, animating saints, angels, and planetary positions. These weren't mere decorations — they automated civic rituals and encoded cosmic models for public audiences.

In the 17th century, the Hemony brothers pioneered pinned-cylinder bell carillons in Amsterdam, prefiguring later musical machines and punch card systems. Their programmable melodies translated sound into hardware logic — a principle reused in music boxes, player pianos, and eventually looms and computers.

A01_AUTOMATA_CONTROL

A01_AUTOMATA_CONTROL

A11_JACQUARD_BINARY

Automata and cathedral clocks show that processing began as spectacle. These devices staged order in public, encoding rules in gears and rituals. They remind us that before computation was practical, it was performative — a demonstration of control over time, matter, and imagination. The next leap took this spectacle of control and turned it into infrastructure: the Jacquard loom.

A11_JACQUARD_BINARY

Jacquard Loom — A Mechanism of Memory and Control

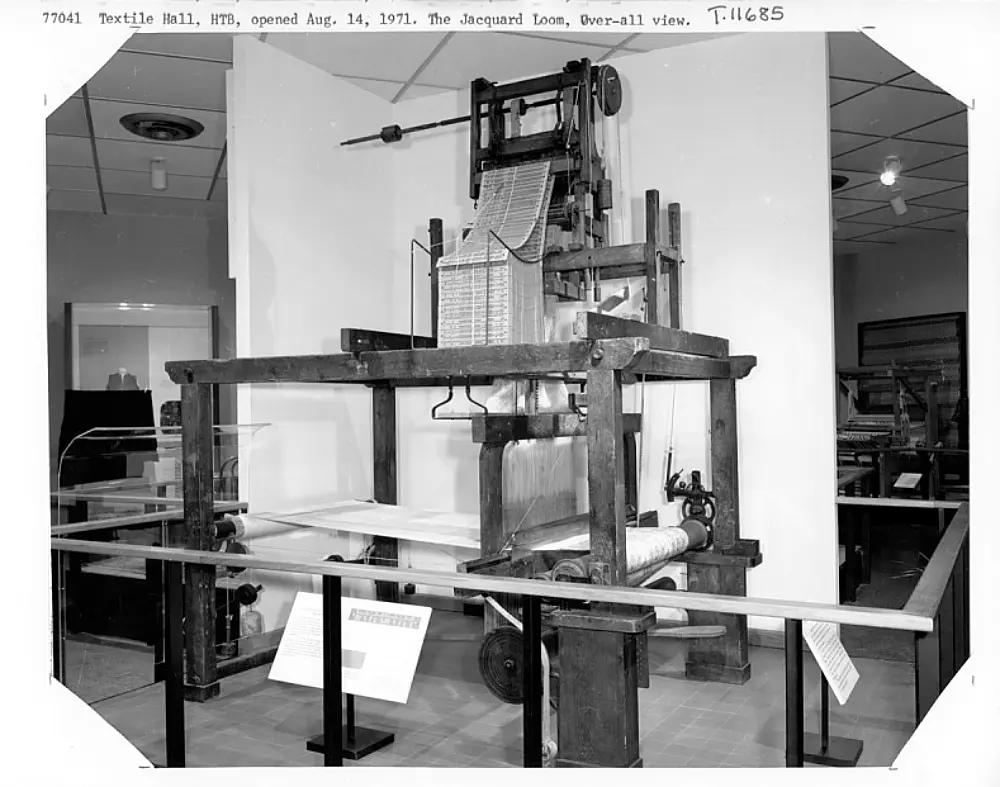

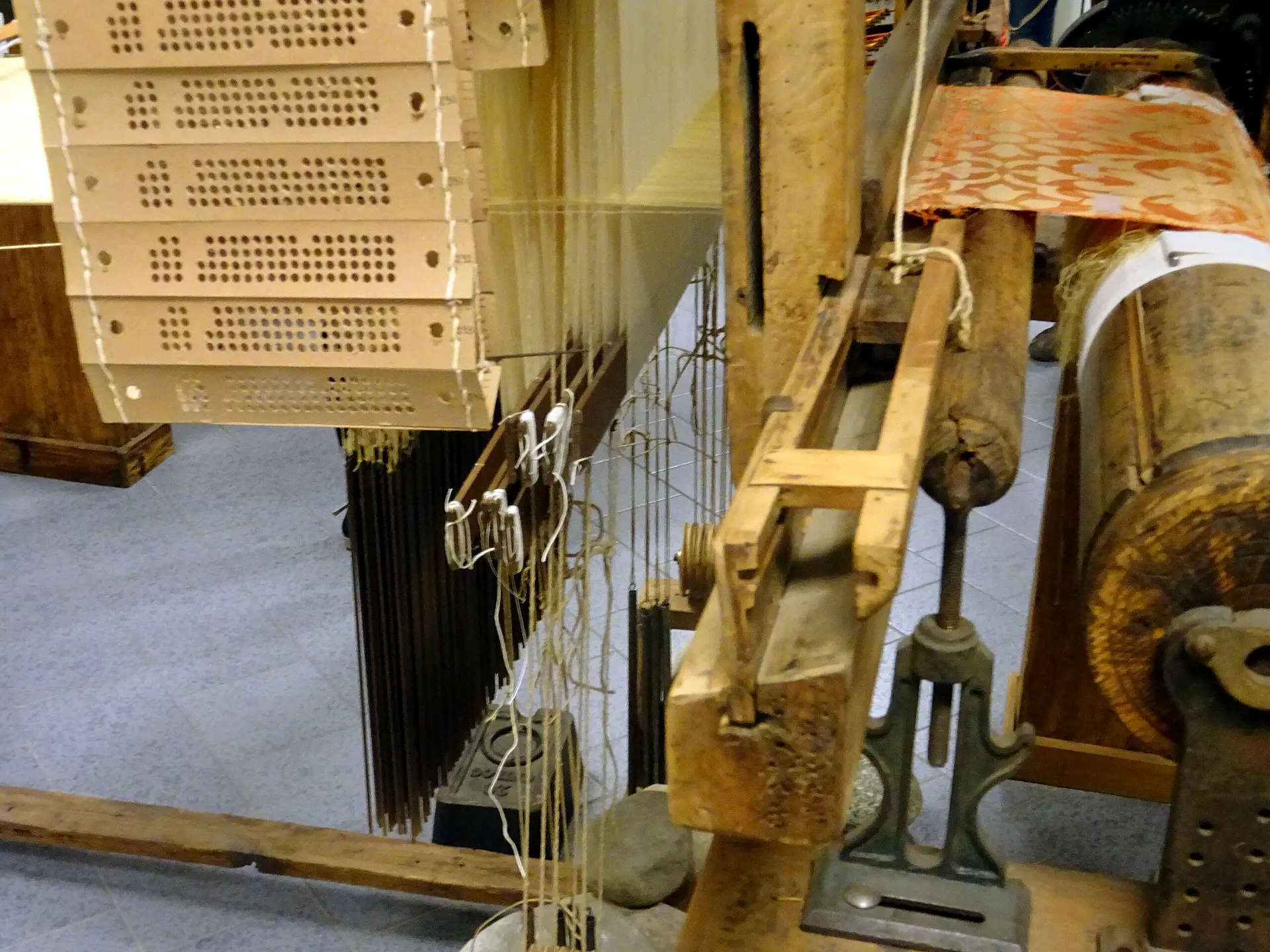

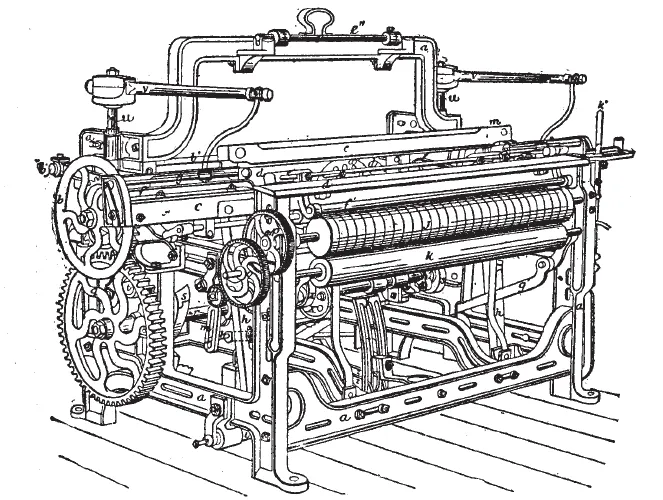

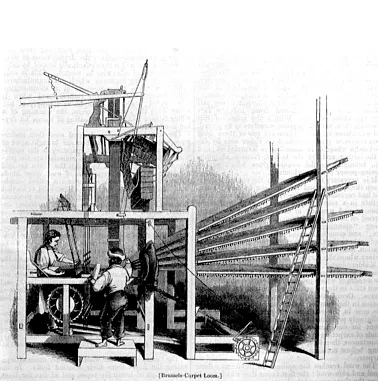

In 1725, Basile Bouchon, a textile worker from Lyon, adapted techniques from pinned music automata to control weaving with perforated paper tape. It was a subtle revolution: a machine that could remember patterns. This innovation marked the beginning of externalized, symbolic memory for controlling machines.

By 1804, Joseph-Marie Jacquard refined this concept into a fully automated loom using punched cards. Each row of holes controlled which warp threads were lifted, encoding textile patterns in binary sequences.

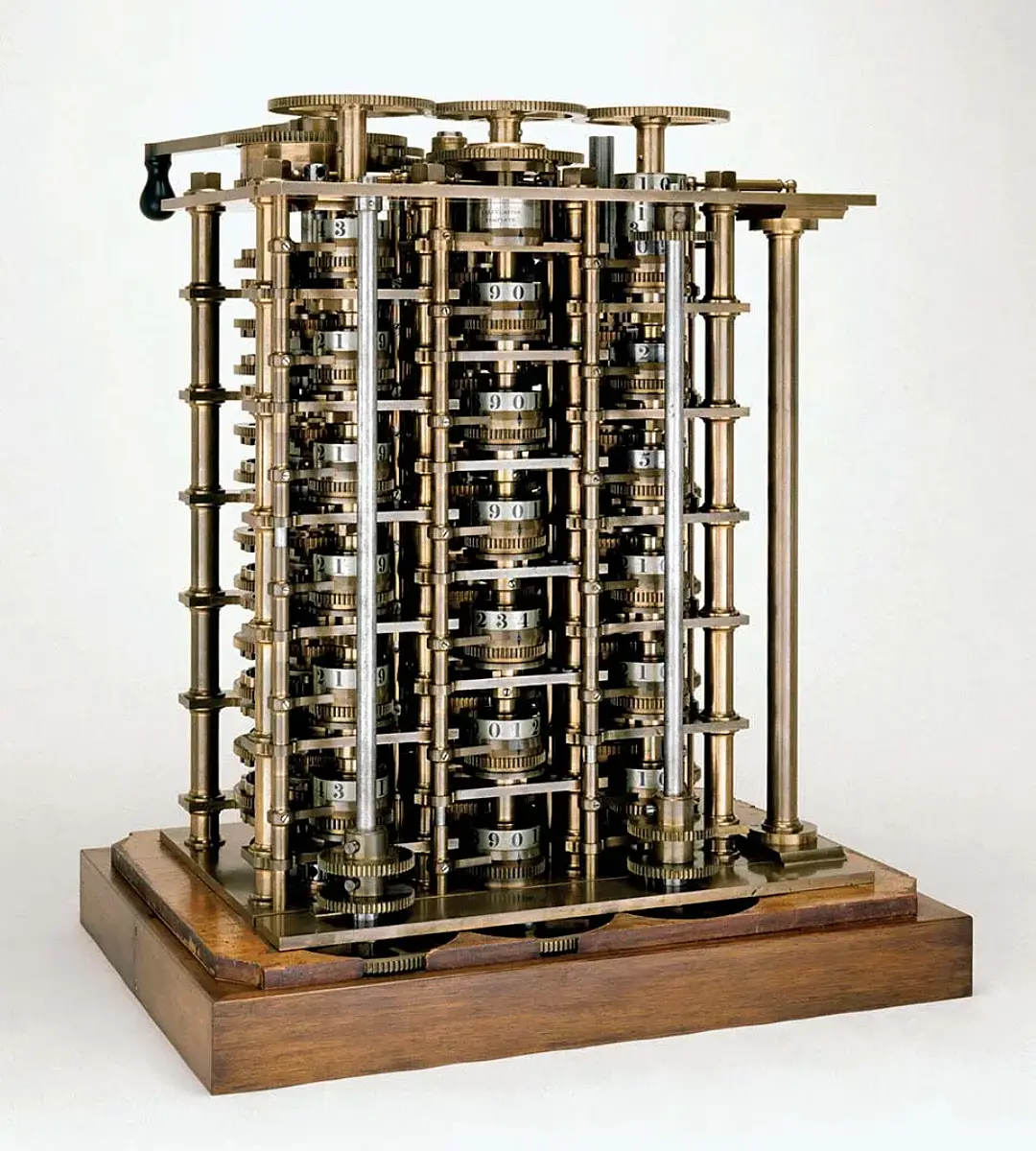

These punch cards enabled repeatability, modularity, and the separation of logic from mechanics. The loom became a programmable system — its behavior governed by structured data. Charles Babbage later cited Jacquard’s cards as direct inspiration for programming his Analytical Engine, applying the same logic to mathematical computation. Textile control became abstract logic.

A11_JACQUARD_BINARY

A11_JACQUARD_BINARY

A13_HOLLERITH_TABULATOR

The importance of the Jacquard loom lies less in weaving than in abstraction. It marked the moment when patterns became code — transferable sequences that could be stored, reused, and combined. What had once been tacit craft knowledge was recast as programmable logic. This conceptual leap — treating instructions as independent data — is what made later computational systems imaginable.

A13_HOLLERITH_TABULATOR

From Loom to Census — Herman Hollerith and the Birth of Data Machines

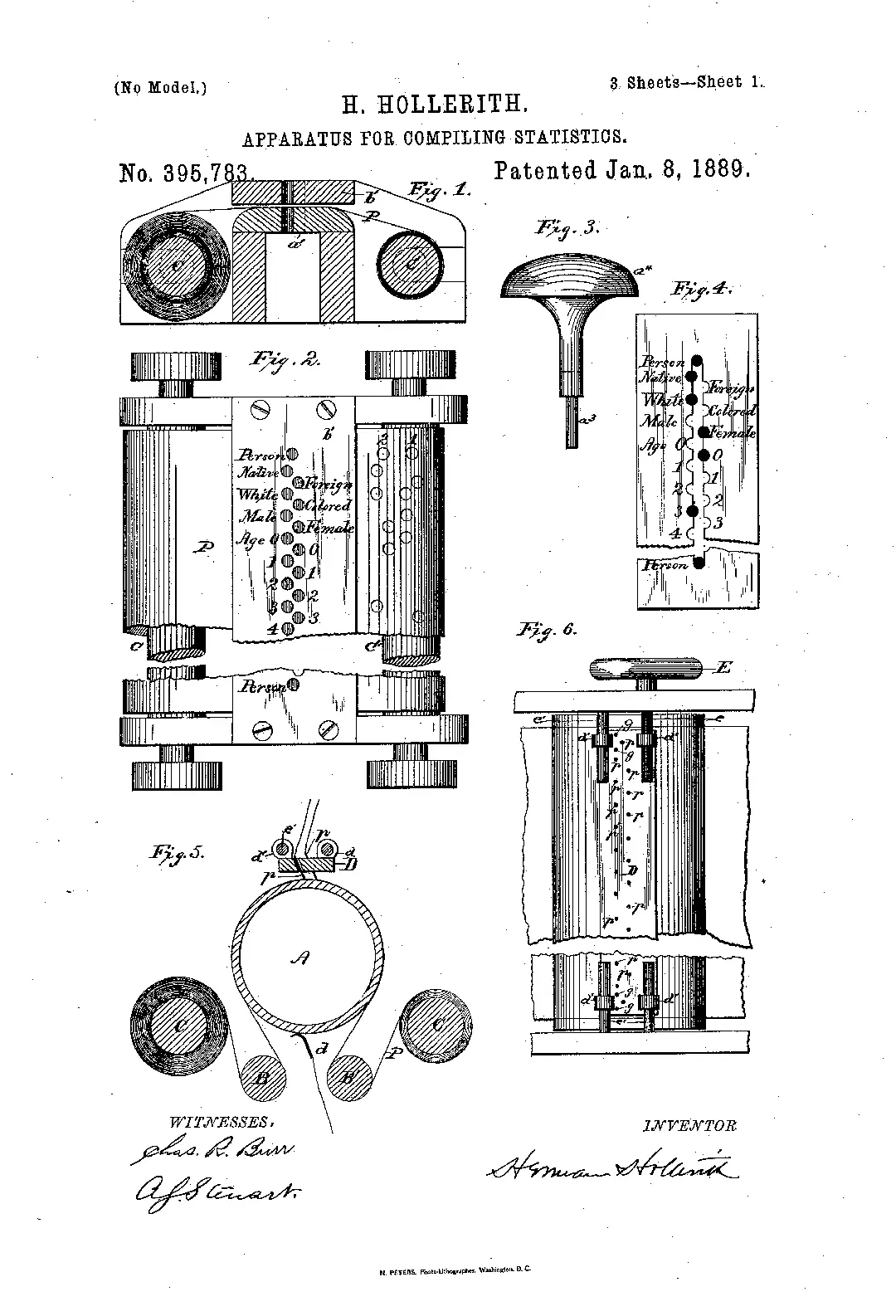

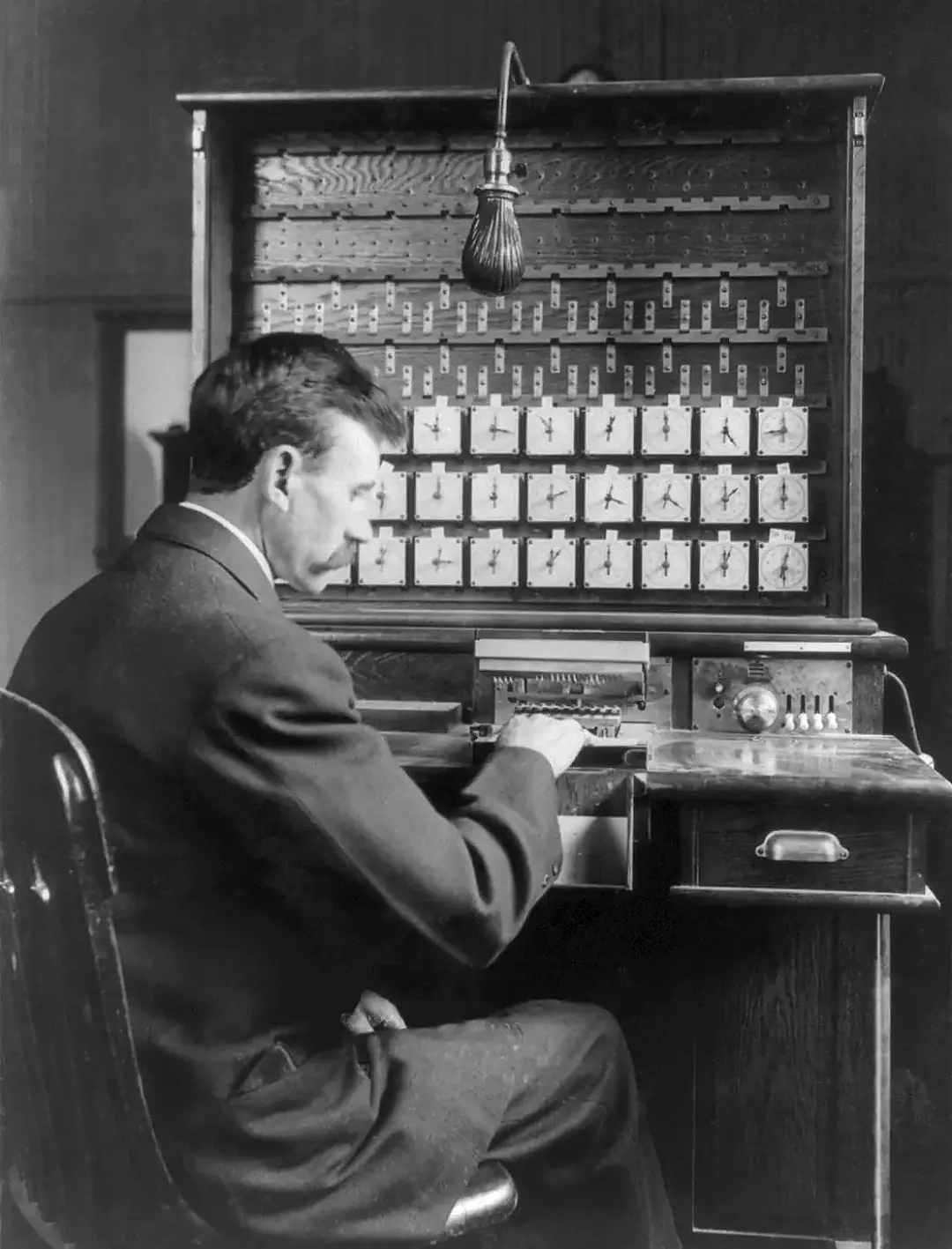

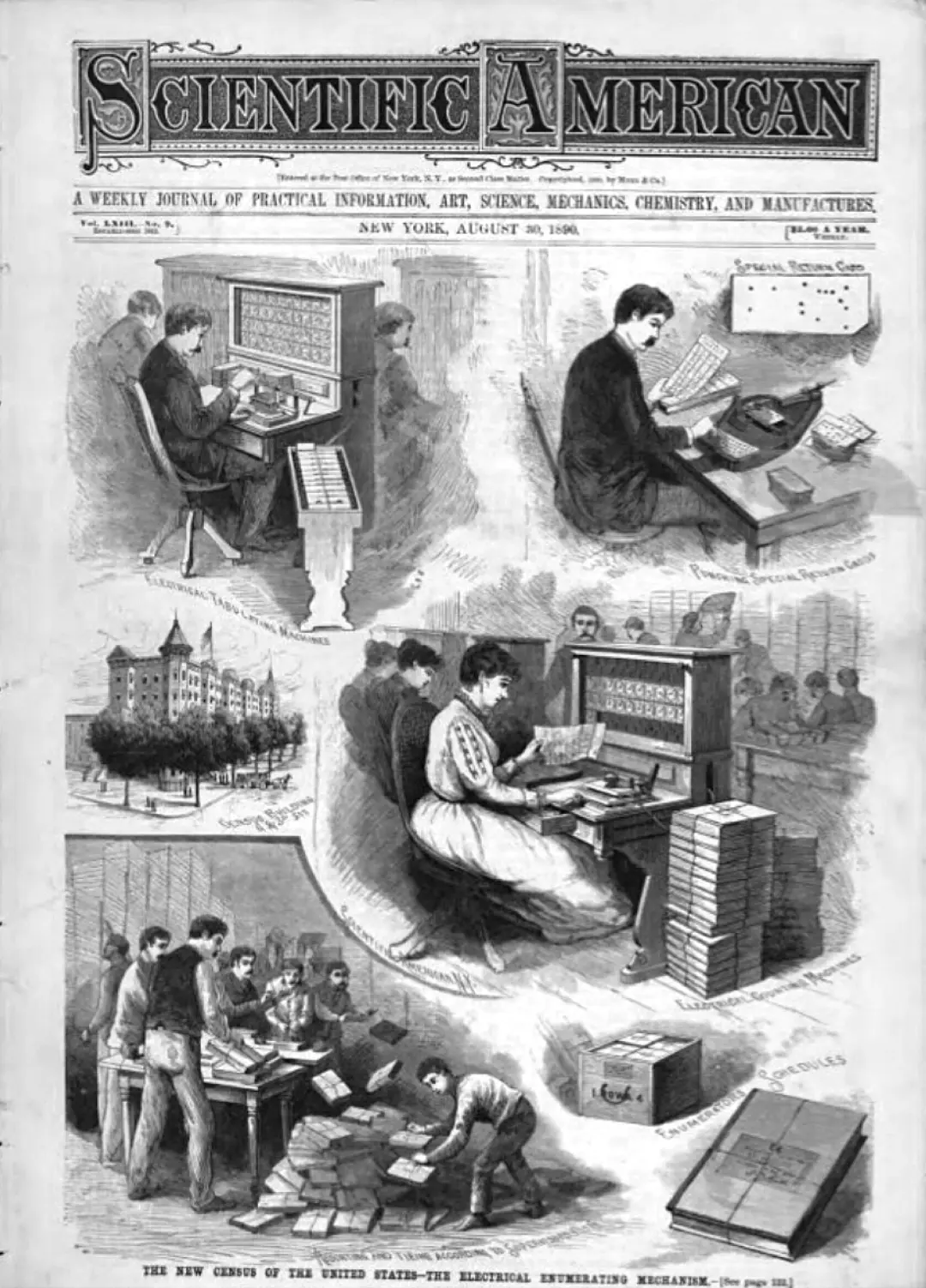

By 1880, the U.S. Census had become a monumental paper problem. Processing returns by hand had taken nearly a decade — far too long for a modern bureaucracy. To break the bottleneck, a young engineer named Herman Hollerith turned to a familiar source: punch cards, inspired by Jacquard looms and railway tickets.

Hollerith’s breakthrough wasn’t the cards themselves, but the electromechanical system that could read them. His 1889 tabulator used metal pins to detect holes in a card and close electrical circuits, counting data categories automatically. With custom sorters and punched templates, information could be processed faster than ever before — and with far less human labor.

A13_HOLLERITH_TABULATOR

A13_HOLLERITH_TABULATOR

A2_Rationalizing the World

What Hollerith introduced was not just a faster way to count but a new relationship between people and data. Populations were redefined as machine-processable units, and governance began to depend on the categories a system could recognize. The conceptual shift was profound: data processing became a condition of modern administration.

A2

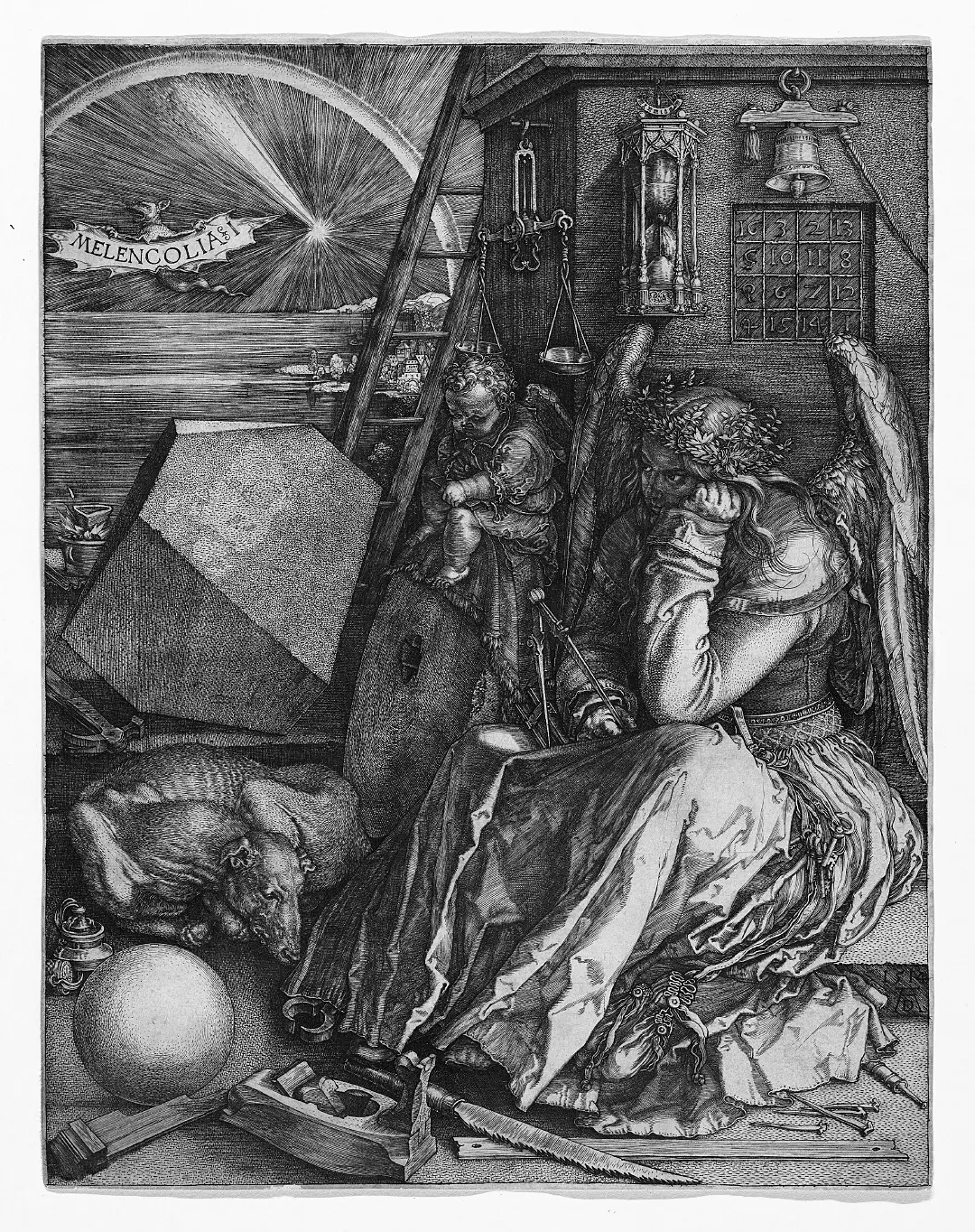

Rationalizing the World: Data, Design, and the Logic of Representation

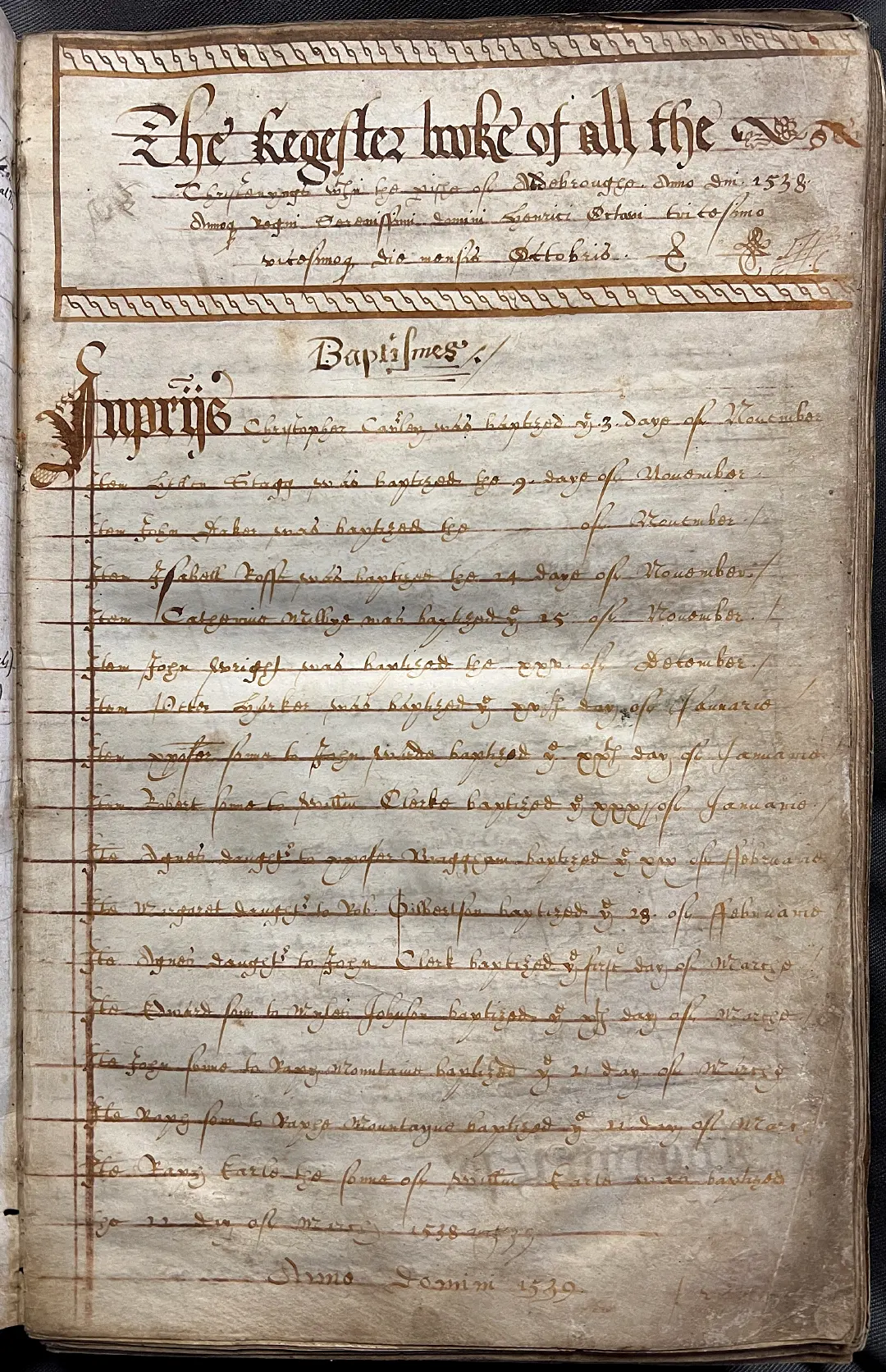

Transforming the real world into records, scripts, and protocols gave writing a double power: permanence and authority. To inscribe was to decide — which harvests to count, which families to list, which debts to record. The written word condensed reality into a fixed, portable form, turning choices into facts. What began as memory externalized soon became governance encoded, where the act of writing itself structured power.

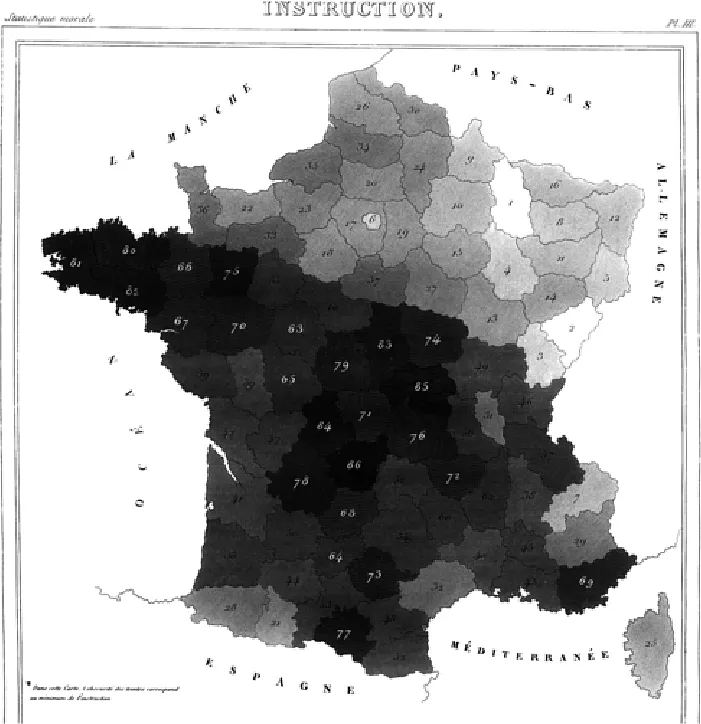

By the early modern era, this logic intensified. Political arithmetic and statistics reframed societies as measurable systems, while new visual models translated abstractions into curves, tables, and diagrams. Representation became its own form of processing — reducing lived complexity into formats that could be compared, manipulated, and controlled.

A21_EARLY_STATISTICS

Counting to Govern — Political Arithmetic and Early Statistics

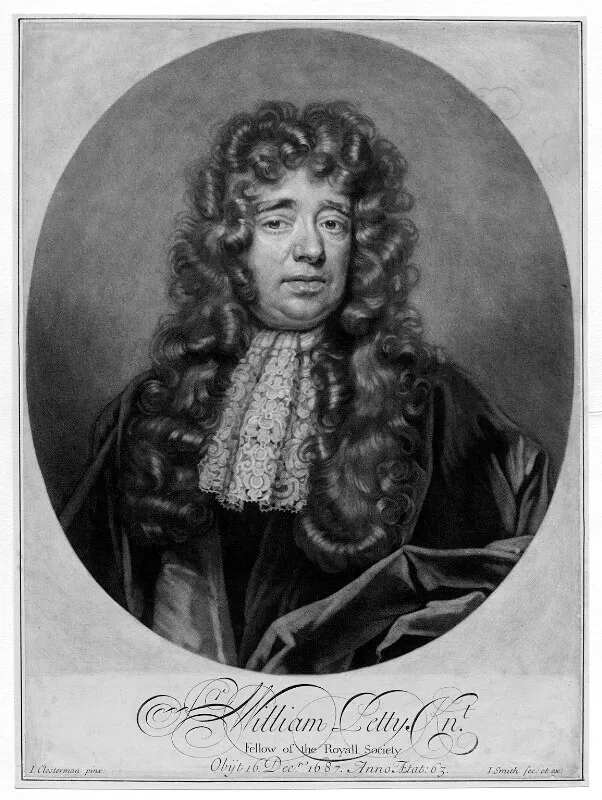

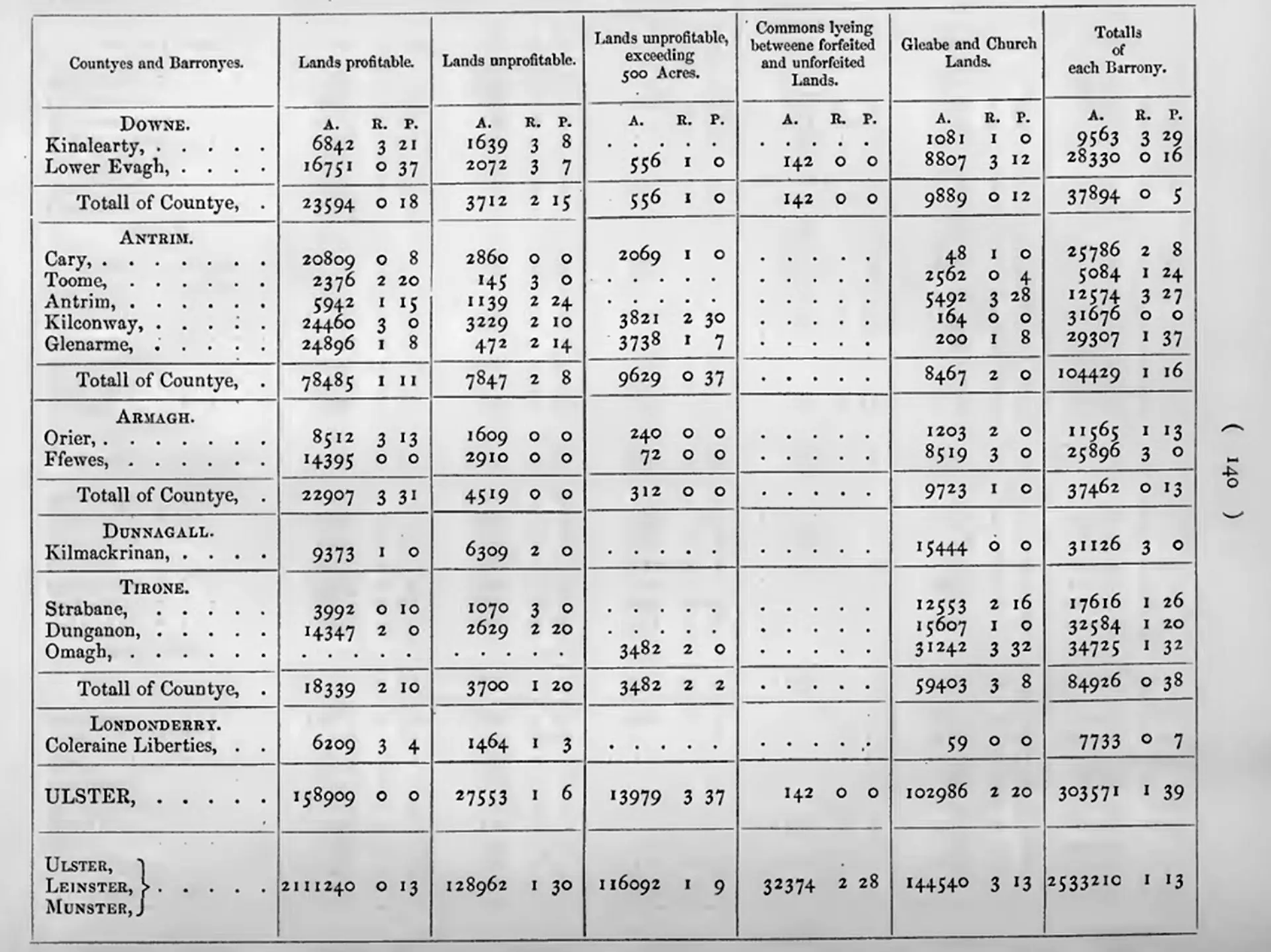

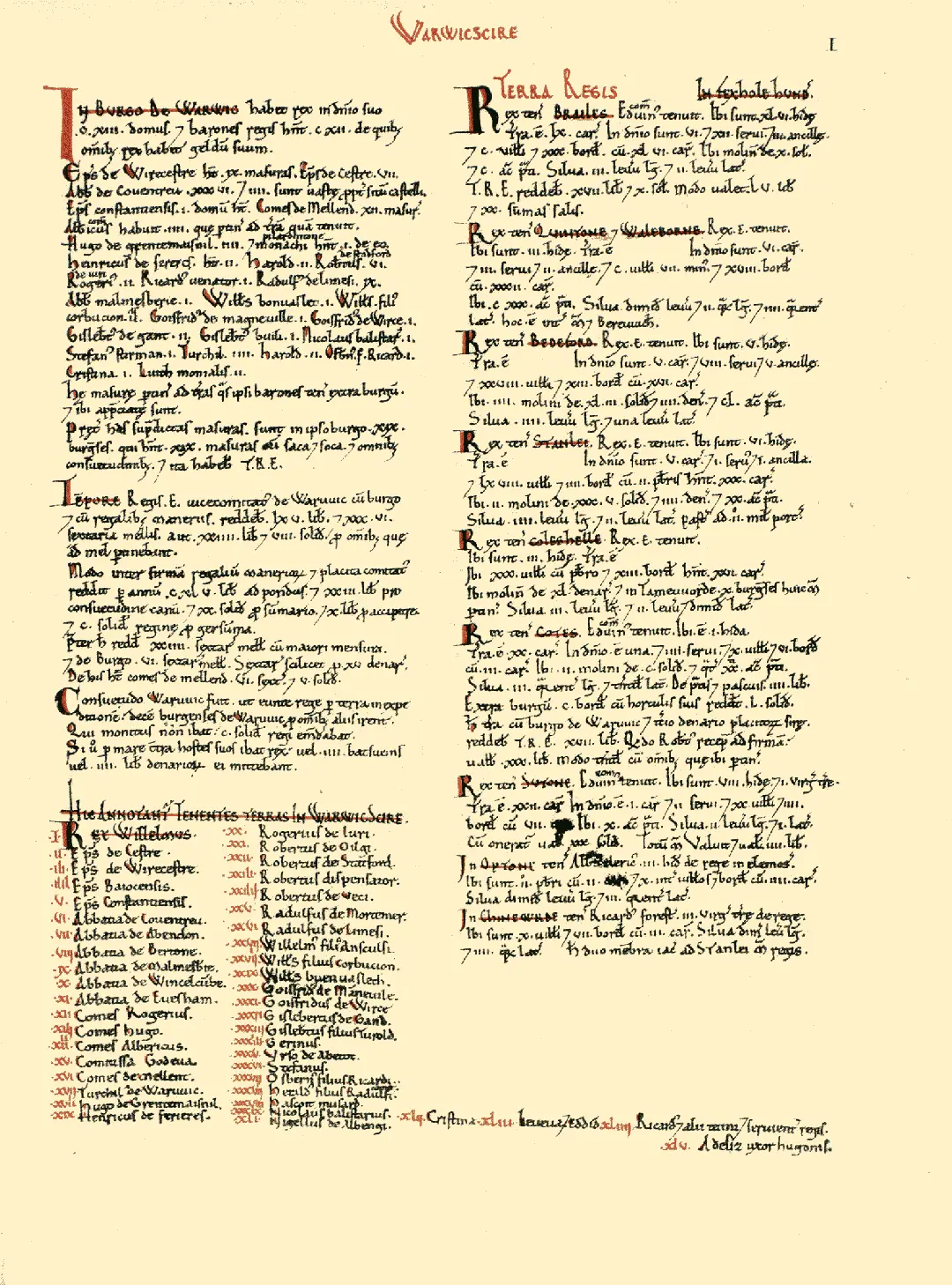

Long before mechanical tabulators, governments used data to exert control. In early modern Europe, the rise of absolutist states brought new pressures to measure, compare, and forecast populations. Inspired by older registers like the Domesday Book, a new intellectual tradition emerged in the 17th century: political arithmetic.

Pioneers like John Graunt and William Petty introduced statistical summaries of mortality and trade in London, proposing that societies could be studied mathematically. In German-speaking states, this formalized into Staatswissenschaft — the “science of the state” — combining demographic counts, land surveys, and resource assessments into a toolkit for rational administration

A21_EARLY_STATISTICS

A21_EARLY_STATISTICS

A24_INSTITUTIONAL_CARDS

Political arithmetic reframed governance as a matter of calculation. Lives, land, and trade were translated into numbers that could be compared, projected, and acted upon. This was processing in its most political form — abstraction as a tool of control. Once rulers had begun to see societies as datasets, it was only a matter of time before machines like Hollerith’s tabulator were absorbed into the bureaucratic machinery, making the arithmetic of states permanent.

A24_INSTITUTIONAL_CARDS

Tabulated Society — Punched Cards in Government and Business

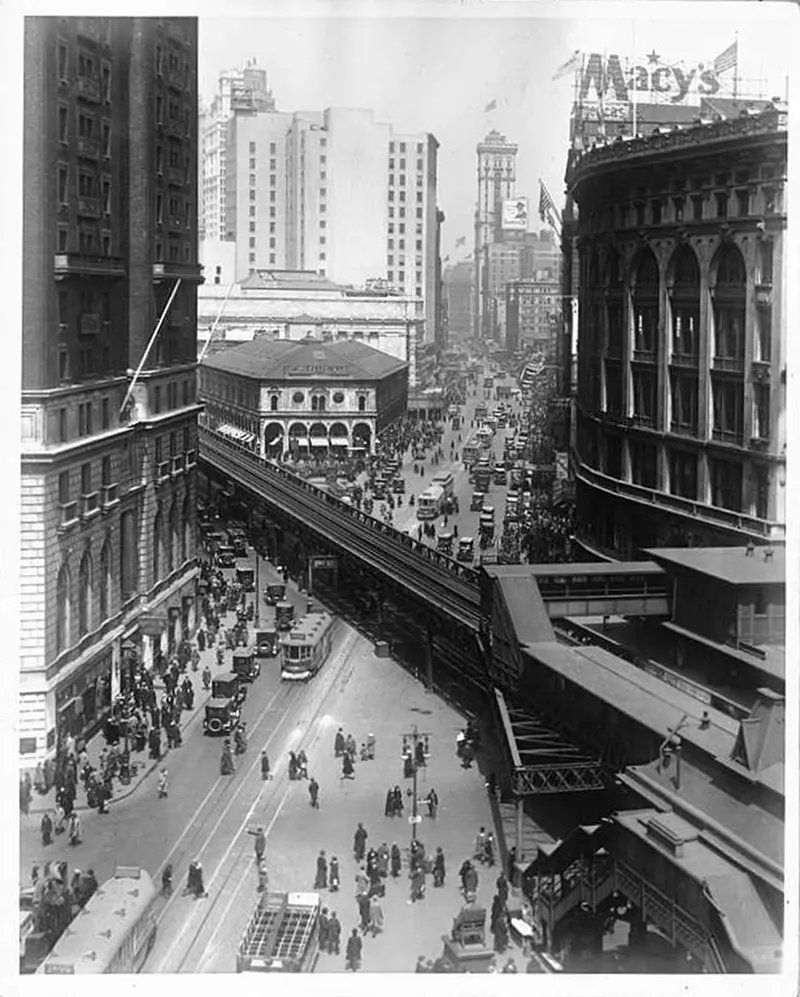

After the success of the 1890 U.S. Census, punched card machines quickly became a pillar of state and corporate infrastructure. Already in 1891, governments in Canada, Norway, and Austria had adopted versions of punch-card tabulators for their own censuses.

Then, Hollerith’s systems — now under the Computing-Tabulating-Recording Company (CTR), which later became IBM — expanded from population counts to civil registries, tax records, military logistics, and industrial payroll.

In Europe, punched card tabulators were used to track unemployment during the Weimar Republic and later for darker purposes under totalitarian regimes. In the U.S., corporations adopted the technology to manage workers and inventories. Punched cards became tokens of identity: each card a fragile stand-in for a worker, a file, a life — processed at scale.

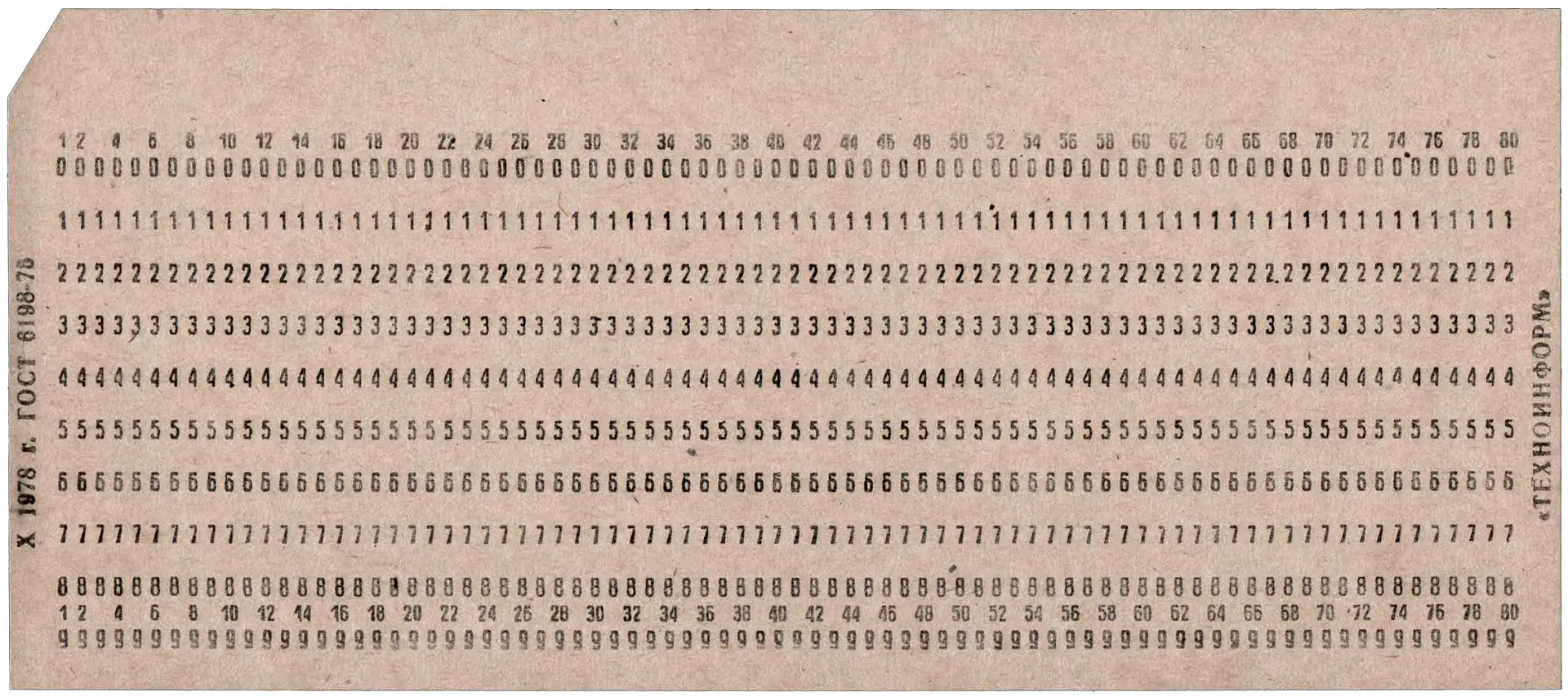

Their layout became standardized: 80 columns, rectangular holes, precise rows. What began as a statistical tool evolved into a universal format for encoding human activity. This expansion paved the way for mainframe computing, but it also quietly embedded new logics of sorting, surveillance, and abstraction — long before digital systems arrived.

A24_INSTITUTIONAL_CARDS

A24_INSTITUTIONAL_CARDS

A3_Enumerating the World

Punched card systems changed the scale of data processing. No longer tied to a single loom or census, they became the connective tissue of states and corporations. Civil registries, payrolls, tax records, and inventories all began to flow through standardized cards and tabulators.

Processing here was not invention but normalization — a logic absorbed into institutions until bureaucracy itself depended on it. To see how deep these habits ran, we need to rewind to older traditions of counting, where enumeration was less about machines than about ritual order.

A3

Enumerating the World: Counting as Ritual, Power, and Abstraction

Transforming the real world into records, scripts, and protocols gave writing a double power: permanence and authority. To inscribe was to decide — which harvests to count, which families to list, which debts to record. The written word condensed reality into a fixed, portable form, turning choices into facts. What began as memory externalized soon became governance encoded, where the act of writing itself structured power.

By the early modern era, this logic intensified. Political arithmetic and statistics reframed societies as measurable systems, while new visual models translated abstractions into curves, tables, and diagrams. Representation became its own form of processing — reducing lived complexity into formats that could be compared, manipulated, and controlled.

A31_EARLY_ENUMERATION

Sacred Numbers and Civic Counts — Early Enumeration as Control and Ritual

Long before modern statistics, societies developed elaborate systems for counting people, goods, and time.

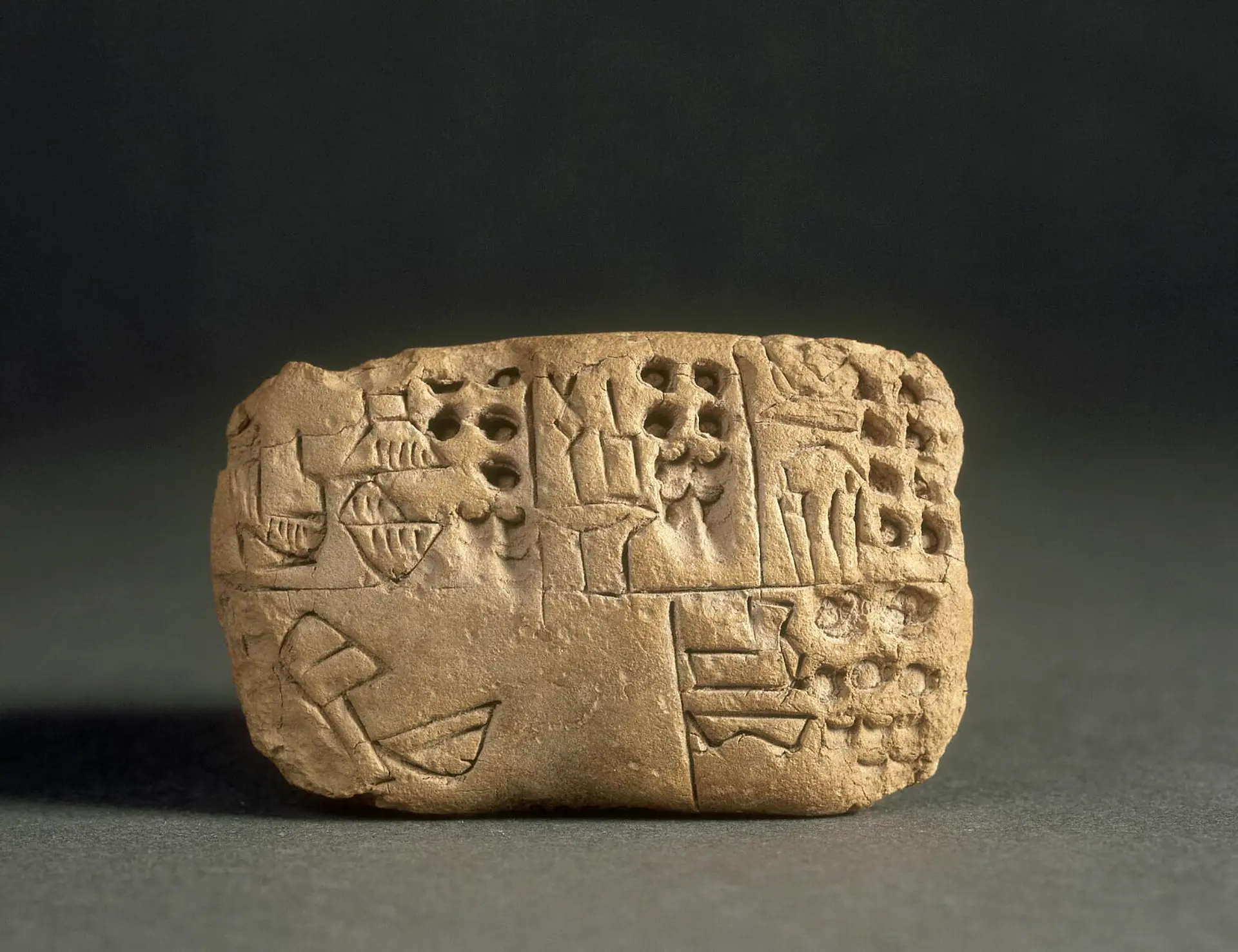

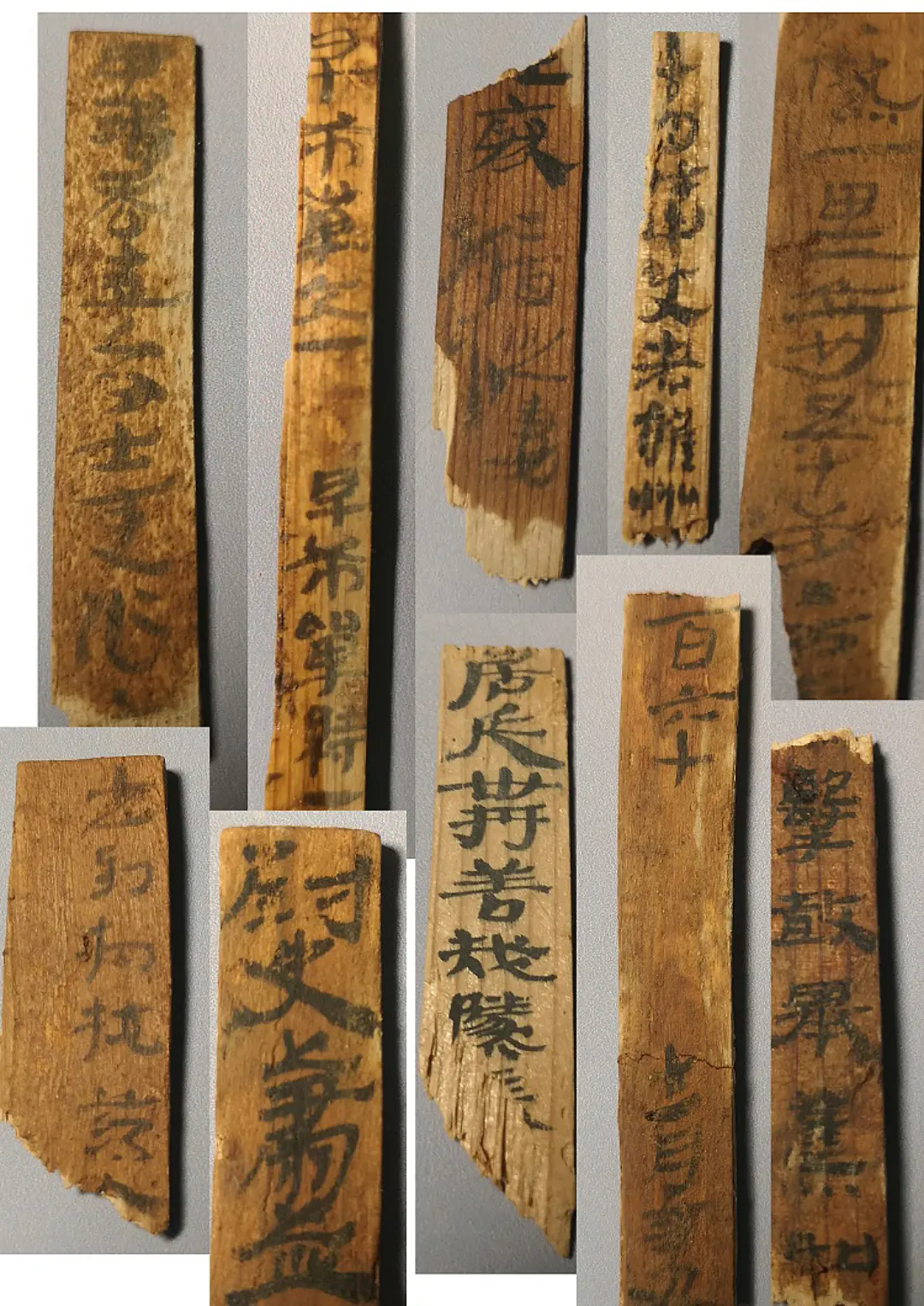

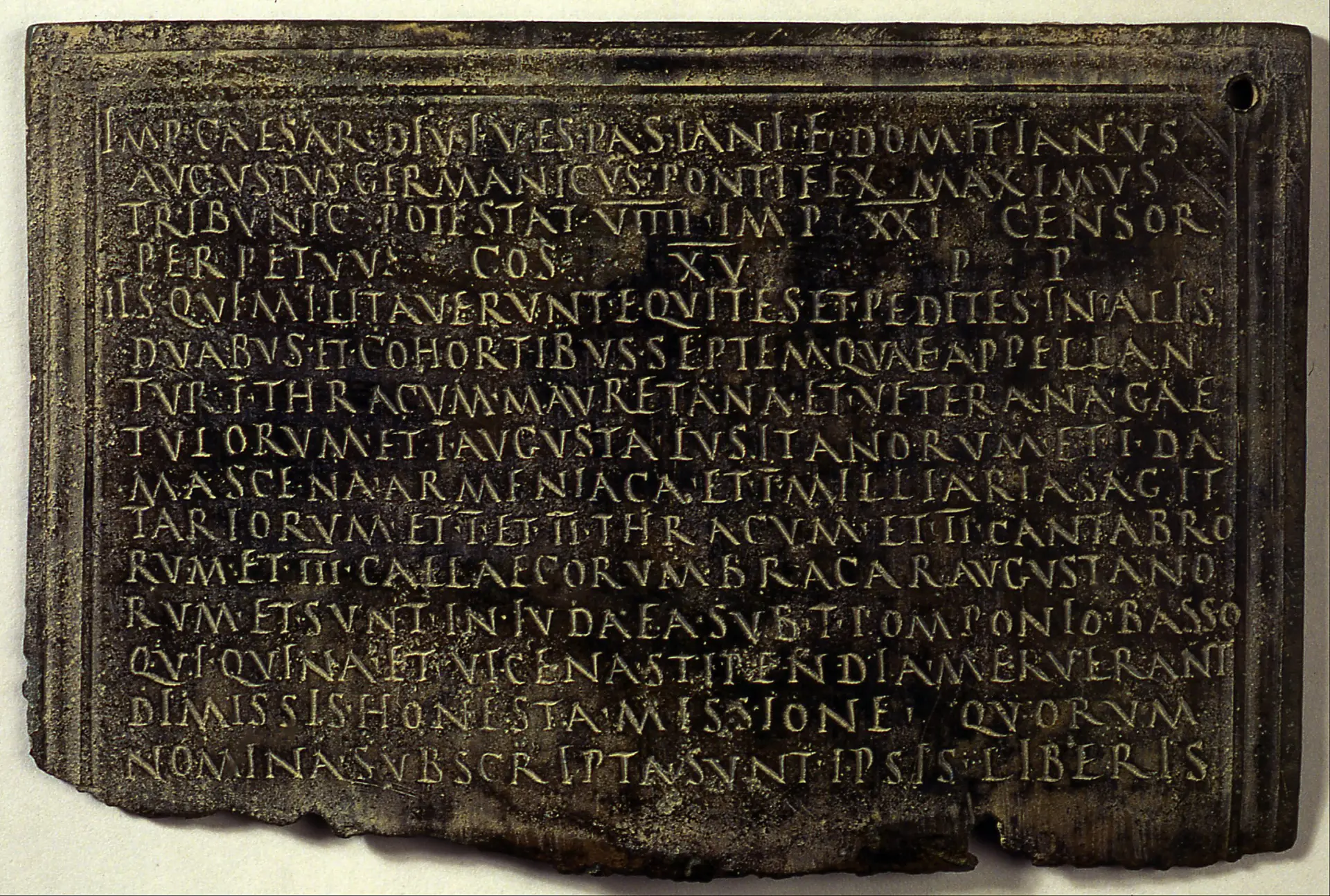

In ancient Sumer, clay tokens evolved into cuneiform tablets to track grain, livestock, and labor — a direct response to the needs of temple economies. Egypt’s annual nilometer readings and population tallies shaped everything from taxation to cosmic order. In Imperial China, the household registration system (hukou) maintained dynastic stability. Rome’s census did more than record — it legitimized class, military duty, and political privilege.

A31_EARLY_ENUMERATION

A31_EARLY_ENUMERATION

A32_TABLES_GRAPHICS

Enumeration in ancient societies was more than a tally — it was a way to make order tangible. Counting grain or soldiers gave rulers proof of dominion, while censuses sanctified authority by linking earthly administration to cosmic or religious order. The key idea is that numbers here acted as instruments of power as much as records of fact. From this foundation, it was a short step to formats that didn’t just count but structured information — tabular thought.

A32_TABLES_GRAPHICS

Tabular Thought — From Gospels to Graphs

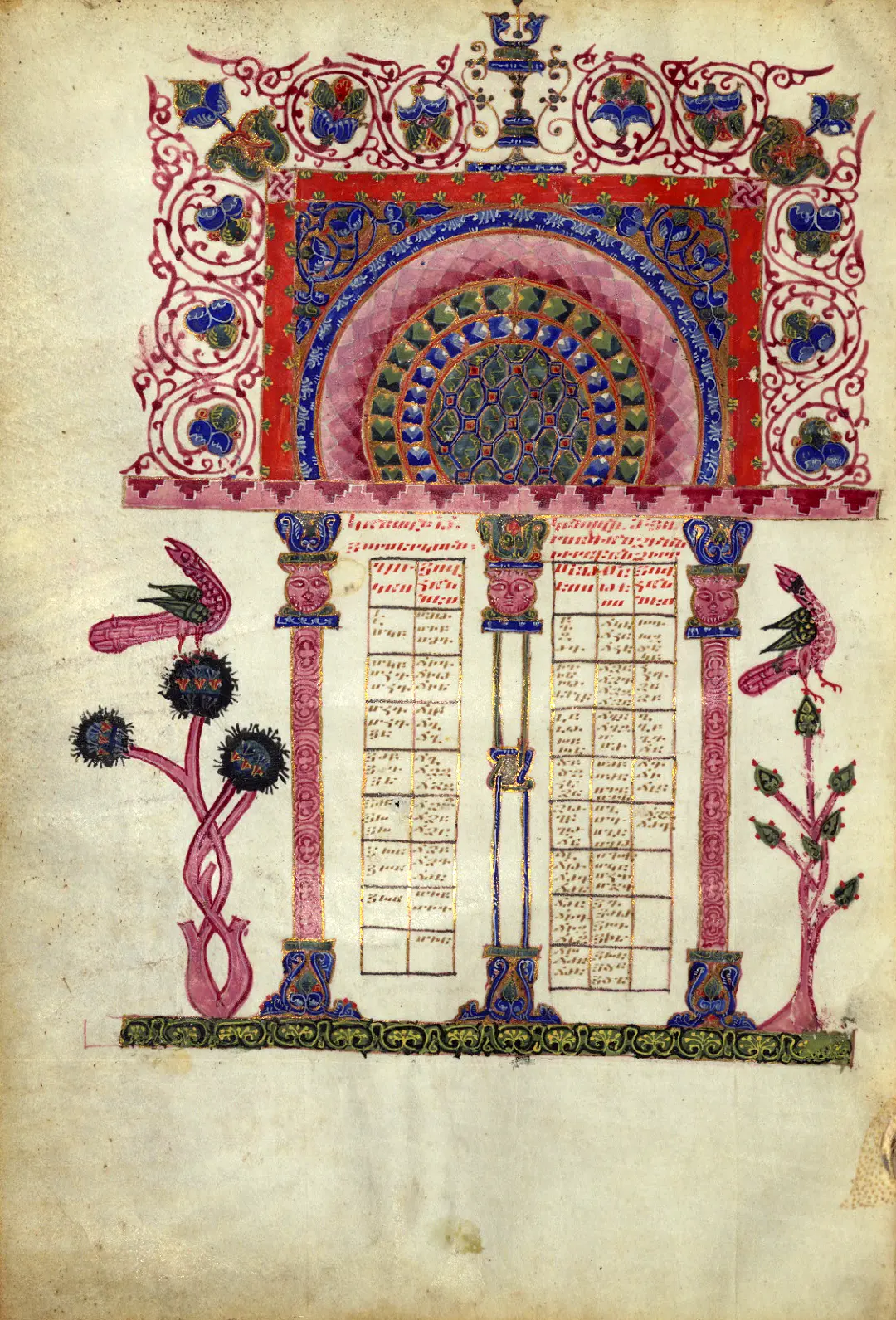

Tables were among the earliest tools used to organize and transmit information. In antiquity, cuneiform tablets managed inventories and taxes. By the 6th century CE, the Eusebian Canon Tables linked Gospel passages across the New Testament, facilitating theological study and laying the foundation for relational thinking.

Medieval Islamic scholars refined astronomical and mathematical tables, using them to convey empirical and calculated data. In Western Europe, tables were employed for liturgical purposes such as computing the Easter date, exemplified by Dionysius Exiguus's calendrical system in 532 CE.

By the 17th century, printed tables circulated widely, covering subjects from demographic counts to planetary motion, and provided a standardized format for organizing and comparing information.

A32_TABLES_GRAPHICS

A32_TABLES_GRAPHICS

A34_ECON_ABSTRACTION

Tables did more than arrange information. They trained readers to think in grids. By forcing data into rows and columns, tables created a structure that made comparison, correlation, and projection possible. This was processing on paper — a format that compressed complexity into manageable sequences and prepared the ground for more abstract models. The next step pushed this logic further, as political arithmetic turned lists and tables into diagrams of entire economies.

A34_ECON_ABSTRACTION

From Political Arithmetic to Economic Diagrams — Data Selection and Abstraction

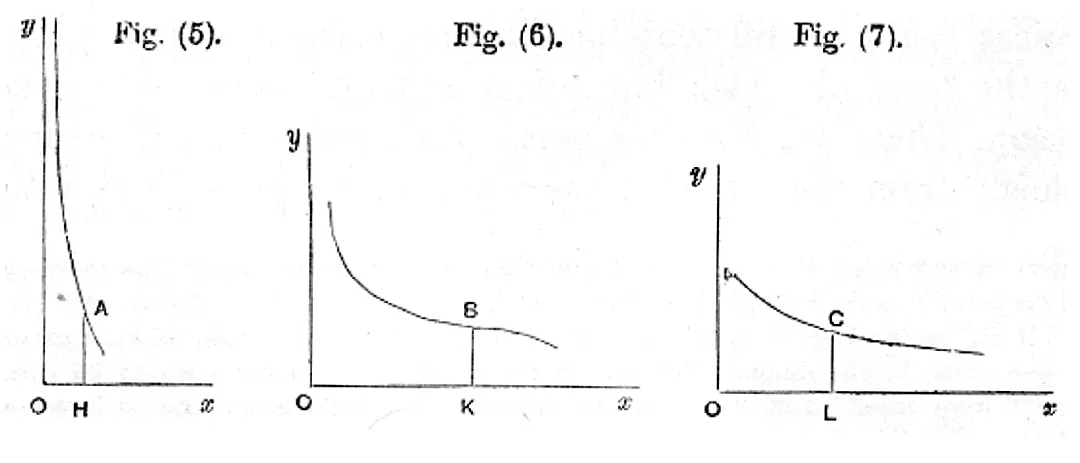

Mathematical methods pushed economic data beyond counts and inventories. But the abstraction came with choices: what to count, what to ignore, and how to draw a system where labor and material resistance disappeared.

This was no longer about describing society, but simulating it. Graphs of cost and output treated work as a function, not a force. The body vanished. Diagrams circulated through ministries and lecture halls, presenting a world of perfect inputs and optimized exchange.

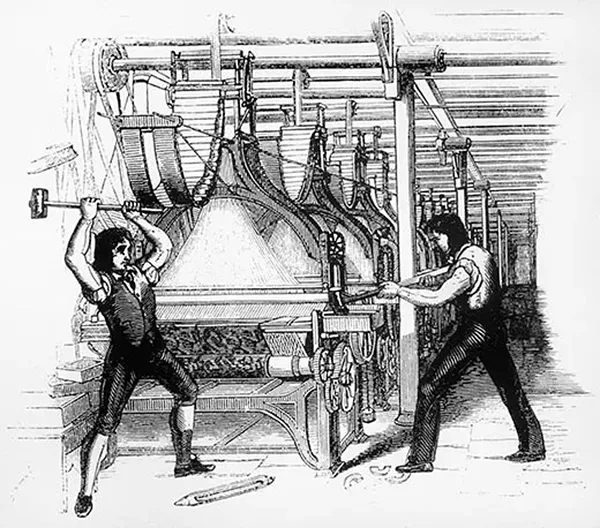

In this shift, the loom returned — not as a machine, but as a metaphor. Economic modeling borrowed its logic: patterns encoded, complexity reduced, skill displaced. And just as mechanical looms once triggered uprisings among artisans, this new epistemic loom — operated by economists — restructured the world with invisible consequences.

A34_ECON_ABSTRACTION

A34_ECON_ABSTRACTION

A35_HYDRAULIC_ECONOMY

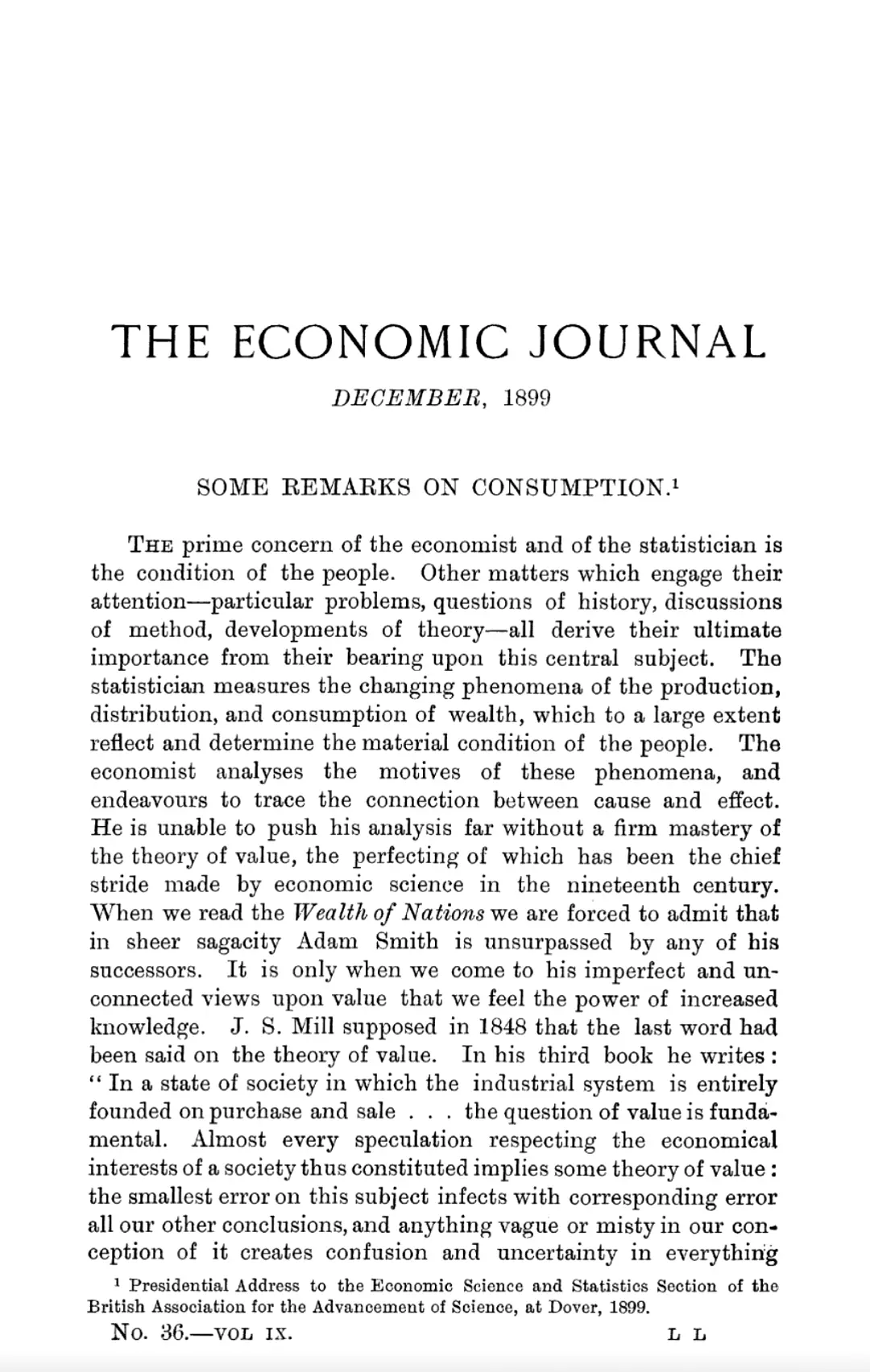

By the late 19th century, economists increasingly worked with diagrams, curves, and abstract models to represent flows of goods and behaviors of markets. These visualizations did not just display numbers but organized them into systems, turning production, cost, and exchange into patterns that could be manipulated and compared. Such models helped shift economic reasoning from descriptive accounts to formalized structures of analysis.

A35_HYDRAULIC_ECONOMY

Liquid Models — Simulating Economies with Analog Machines

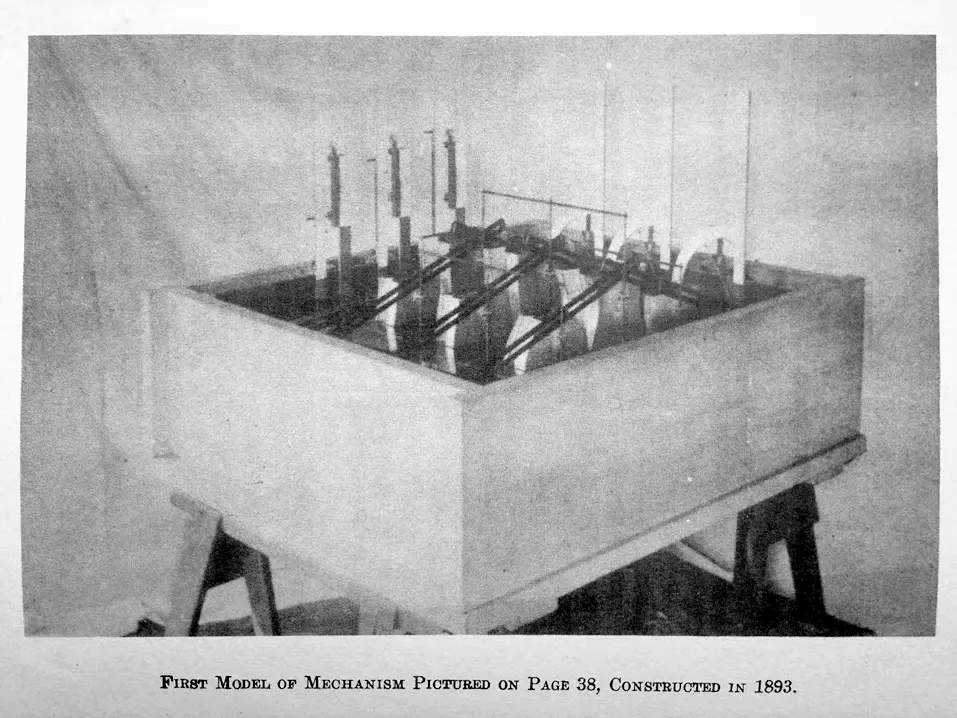

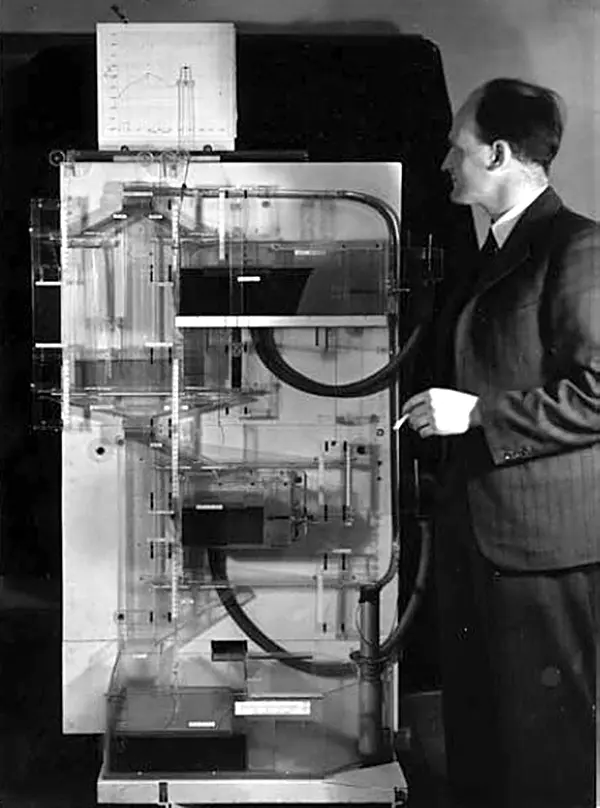

While Herman Hollerith’s punch-card systems became the foundation of administrative computing, other thinkers explored more physical metaphors for modeling economic systems. In 1891, economist Irving Fisher built a hydraulic machine to simulate general equilibrium using interconnected tubes and flowing water. By adjusting valves and fluid levels, users could visualize supply, demand, and price dynamics across markets.

This idea didn’t end there. In 1949, Bill Phillips, a New Zealand economist and engineer, built the MONIAC — a larger hydraulic computer that modeled national income flows, taxes, investments, and savings using colored water in tanks and pipes. It became a teaching and policy tool, particularly in postwar Britain.

These analog machines never reached administrative scale. But they represented an alternative path: computation through embodied simulation rather than abstraction. Their legacy is conceptual — a reminder that the urge to visualize systems long predated digital spreadsheets, and that data modeling once flowed, quite literally, through pipes.

A4

Business Data and Tabulation

By the early 20th century, the abstractions of economists and statisticians were mirrored by the spread of mechanical tools for everyday business. Cash registers, adding machines, and tabulators no longer modeled economies in principle — they processed transactions, wages, and inventories in practice.

Data processing now entered offices, banks, and shops. Reliability and speed mattered as much as theory. Machines hardened routines into mechanisms, ensuring that calculation, accounting, and reporting could happen without delay or dispute. This shift marked the move from experimental models to practical infrastructures of commerce and industry.

A42_BUSINESS_INTELLIGENCE

Early Business Intelligence — From Intuition to Data

Sir Henry Furnese, who founded the Bank of England in 1694, was among the first merchants to build espionage networks, gathering reports from across Europe on military, political, and trade affairs. His ability to anticipate market shifts through such information marked an early form of business intelligence, moving commerce from instinctual decision-making toward data-driven speculation.

With the rise of industrial capitalism, businessmen sought more formalized, empirical ways to manage complexity. In the 1880s, engineer Frederick Winslow Taylor developed time-and-motion studies to measure labor productivity. His data-driven insights redefined managerial decision-making.

In the early 20th century, the pioneering films by Lillian Gilbreth and Ralph Barnes, investigating industrial operations and developing motion study techniques (1910-1924), further advanced the systematic approach to business intelligence. Their work laid the foundation for the growing use of data to streamline operations and decision-making.

Soon after, Henry Ford adapted such techniques to refine production lines, timing the manufacture of each component and embedding measurement into industrial practice.

A42_BUSINESS_INTELLIGENCE

A42_BUSINESS_INTELLIGENCE

A43_GRAPHIC_STATS

What these practices revealed was a deeper shift in the culture of decision-making. Business leaders demanded evidence that could be logged, compared, and replicated. Data became an active instrument, not a by-product — something managers could wield to control processes and outpace rivals.

This new expectation set the stage for another leap: turning numbers into convincing images.

A43_GRAPHIC_STATS

Visualizing Society — Finance, Mortality, and Governance

As statistical thinking matured, so did methods to visualize it. By the 17th century, England’s Bills of Mortality were not only collected but interpreted. John Graunt’s 1662 analysis offered early demographic insights, tracking plague deaths and life expectancy. A few decades later, Edmund Halley’s mortality tables laid the foundation for actuarial science.

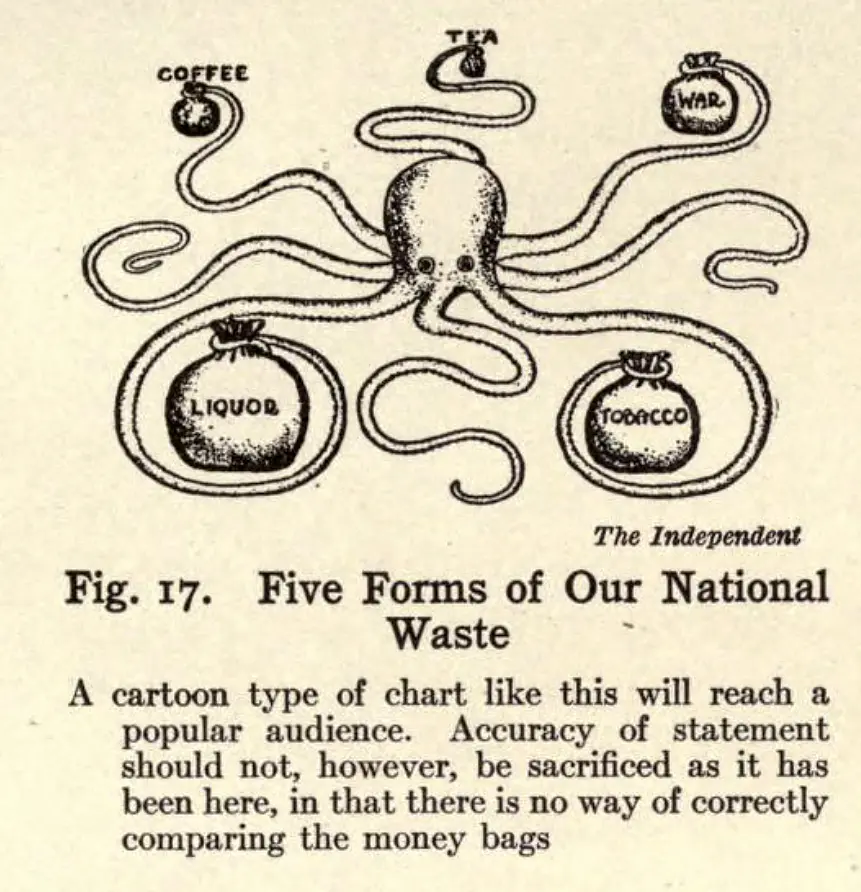

In the 19th century, the use of charts and graphs became more systematic. William Playfair’s bar and pie charts helped represent trade balances and budgets. In the U.S., business statisticians embraced infographics to guide decision-making.

Willard Brinton’s Graphic Methods for Presenting Facts (1914) emphasized that clear representation could shape executive understanding. His manual ranged from simplified charts to complex 3D diagrams, promoting visuals as practical tools for business and government.

A43_GRAPHIC_STATS

A43_GRAPHIC_STATS

A44_MECHANICAL_ENTRY

Visual representation revealed that numbers alone could not persuade. Turning data into images transformed abstraction into patterns that could be grasped quickly and acted upon. Graphs and charts compressed complexity into forms that executives, administrators, and citizens could read at a glance.

Processing here was not only about calculation but about communication, making information usable across institutions. The next frontier took this logic further, embedding calculation directly into machines that recorded transactions and mechanized the ledger.

A44_MECHANICAL_ENTRY

From Cash Registers to Calculators — Mechanizing the Ledger

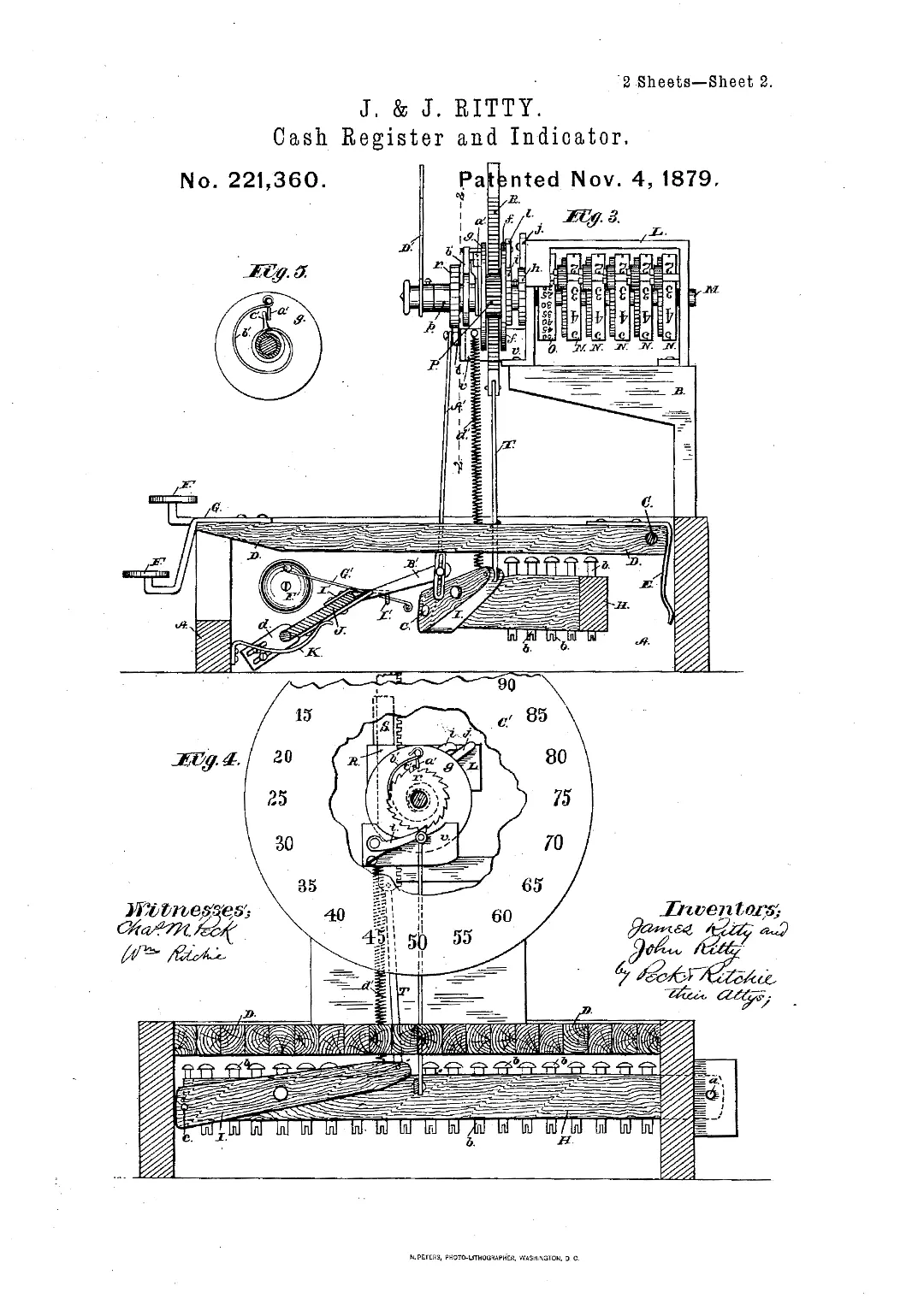

The industrial push to mechanize business records brought calculating devices out of labs and into the workplace. In 1879, Ohio saloon owner James Ritty and inventor John Birch patented the “incorruptible cashier,” a mechanical register designed to prevent employee fraud.

Models were soon enhanced with paper rolls for printed receipts, turning cash registers into trusted tools for recording financial transactions. This innovation led to the founding of companies like Burroughs and National Cash Register, which later pivoted into the computing industry.

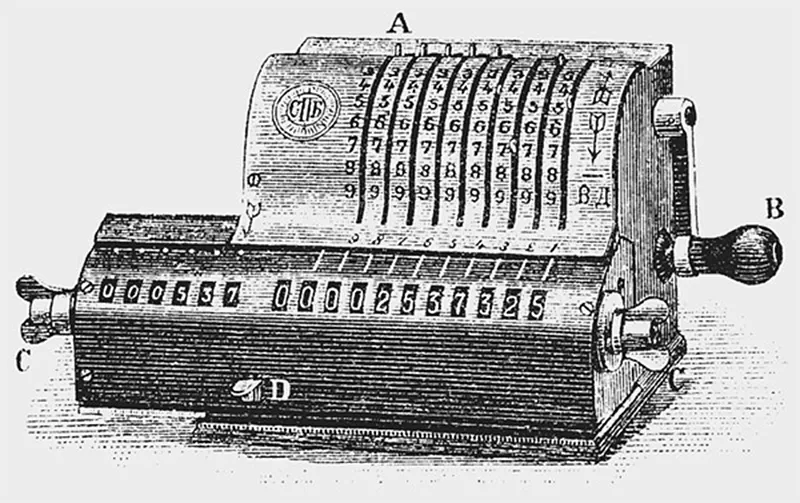

Meanwhile, Swedish engineer Willgodt T. Odhner, working in St. Petersburg (Russian Empire), developed a compact mechanical arithmometer in the 1870s. His device, refined over decades, became a standard in Soviet administrations and was marketed in the West under the “Facit” brand.

A44_MECHANICAL_ENTRY

A44_MECHANICAL_ENTRY

A45_TABULATOR_COMMERCE

Cash registers and arithmometers did more than speed up transactions. They redefined how information was captured and trusted, standardizing entries into receipts and ledgers that machines could process.

With key-driven inputs, calculation became a mechanical routine rather than a mental task. This was the first real interface between everyday business and computational logic, preparing the ground for tabulators that would extend the same principles across entire industries.

A45_TABULATOR_COMMERCE

Tabulating Commerce — Insurance, Railroads, and Beyond

In 1890, Herman Hollerith demonstrated his punch card tabulator to the Actuarial Society of America, marking a pivotal moment in the adoption of data-driven decision-making in business. Insurance companies like Prudential quickly saw the potential and adopted the system to streamline claims processing, though some actuaries, including John K. Gore, developed faster alternatives to Hollerith’s machines.

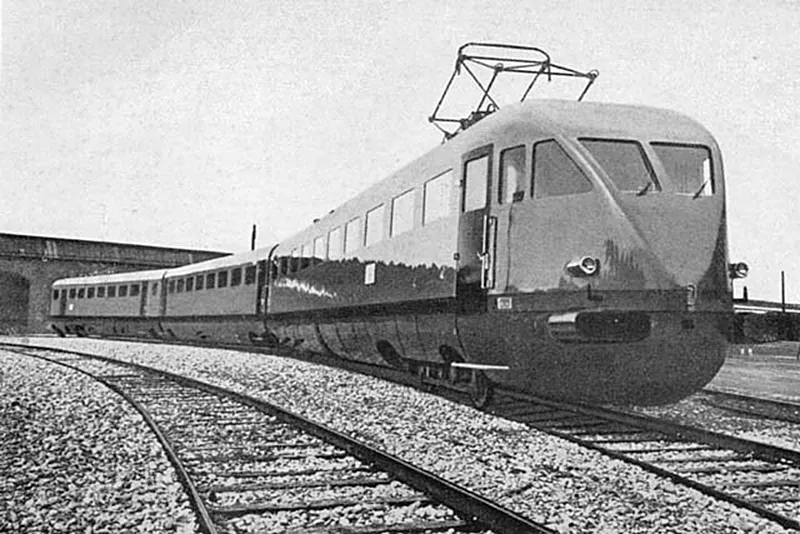

By 1910, Hollerith’s tabulator was being used by British railways to monitor costs and revenue per locomotive, linking granular data to profit margins. As Hollerith’s company evolved into IBM, the technology spread into European transportation networks — in 1928, Ferrovie dello Stato in Italy began using IBM equipment to manage train schedules and spare parts.

A45_TABULATOR_COMMERCE

A45_TABULATOR_COMMERCE

Supercluster B

Tabulators enabled a deeper understanding of organizations by breaking down revenue, costs, and claims with a precision no clerk could match. Processing here meant seeing patterns inside companies as clearly as governments had begun to see them in populations. From this point forward, data was deliberately mined for advantage.

***

Processing data was never only about machines. It grew out of habits and formats that shaped how societies worked. Counting, tabulating, and recording made information durable and transportable. Tables, punch cards, and ledgers imposed order, turning scattered facts into sequences that could be compared, recombined, and acted upon.

Mechanical registers, calculators, and tabulators embedded processing into shops, offices, and corporations. What had once been ritual or spectacle had become infrastructure, preparing the ground for electronic systems.

The Database Before Databases — Scale, Standardization, and Control

The mid-20th century brought a rupture in scale. Expanding states and corporations produced torrents of information that no manual routines could absorb.

It was like a loom suddenly overwhelmed with threads spilling in every direction. New machines had to take over, not as mechanical aids but as electronic systems able to hold entire fabrics of records together.

UNIVAC and its successors arrived in this moment — not as incremental upgrades, but as wagers that the basic functions of society could be entrusted to digital logic.

B1

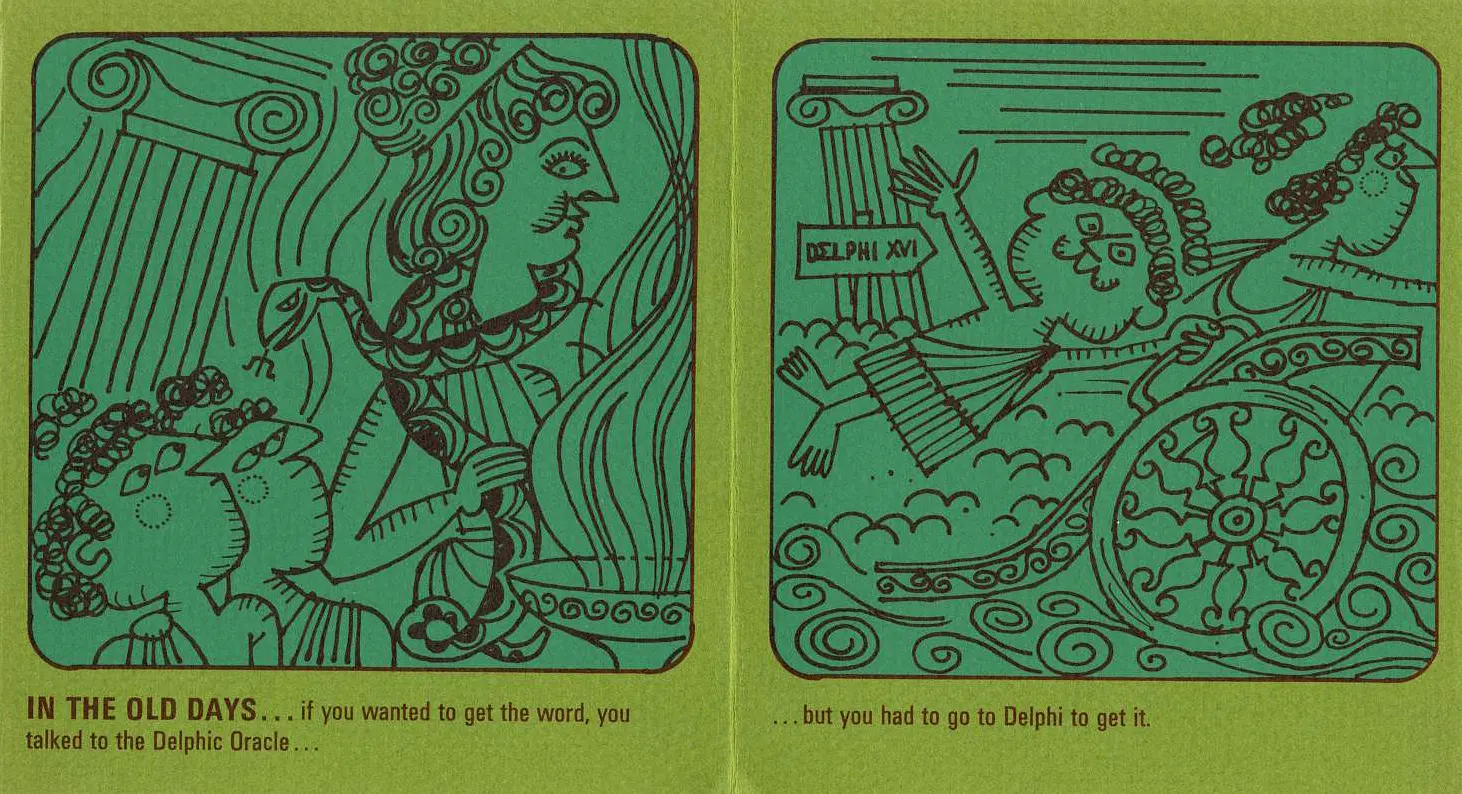

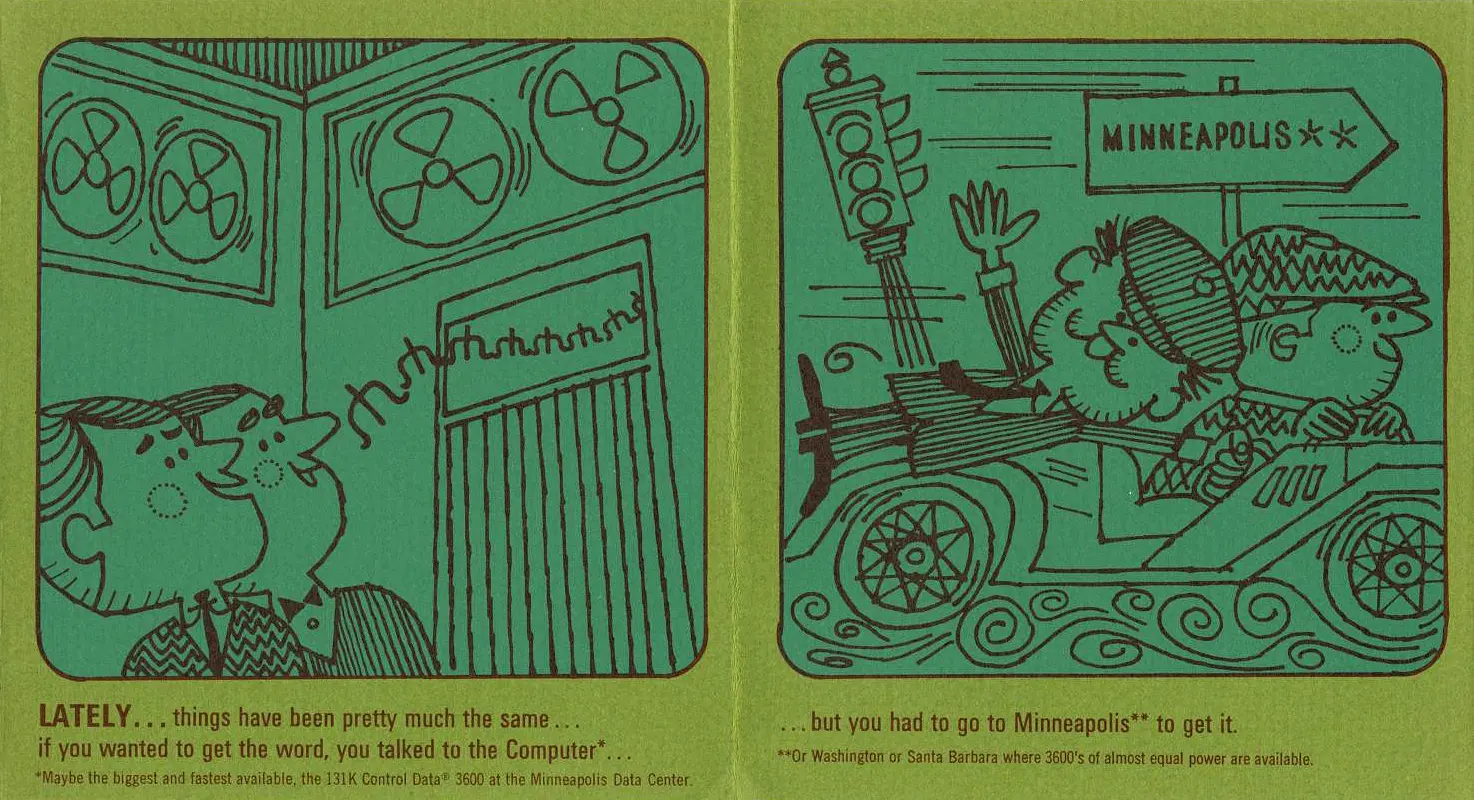

UNIVAC — From Machine to Metaphor

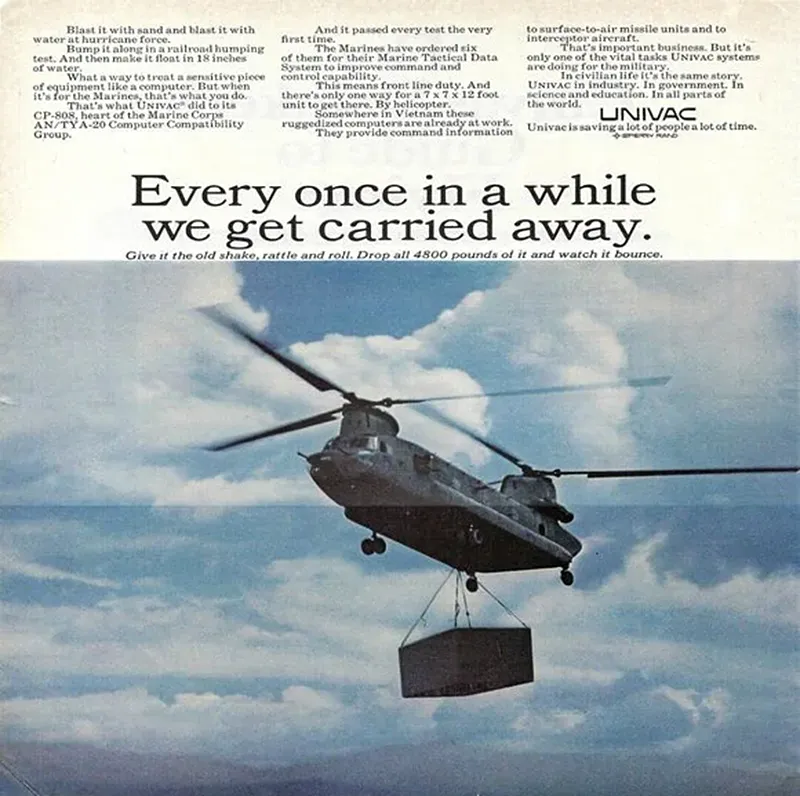

What made UNIVAC distinct was the combination of scale and symbolism. It was a commercial object priced in millions, yet also a cultural emblem that made electronic data processing visible to the wider public. UNIVAC pointed toward futures in which records, statistics, and planning would be managed by logic circuits rather than clerks.

B11_UNIVAC

UNIVAC I — Betting on Electronic Processing

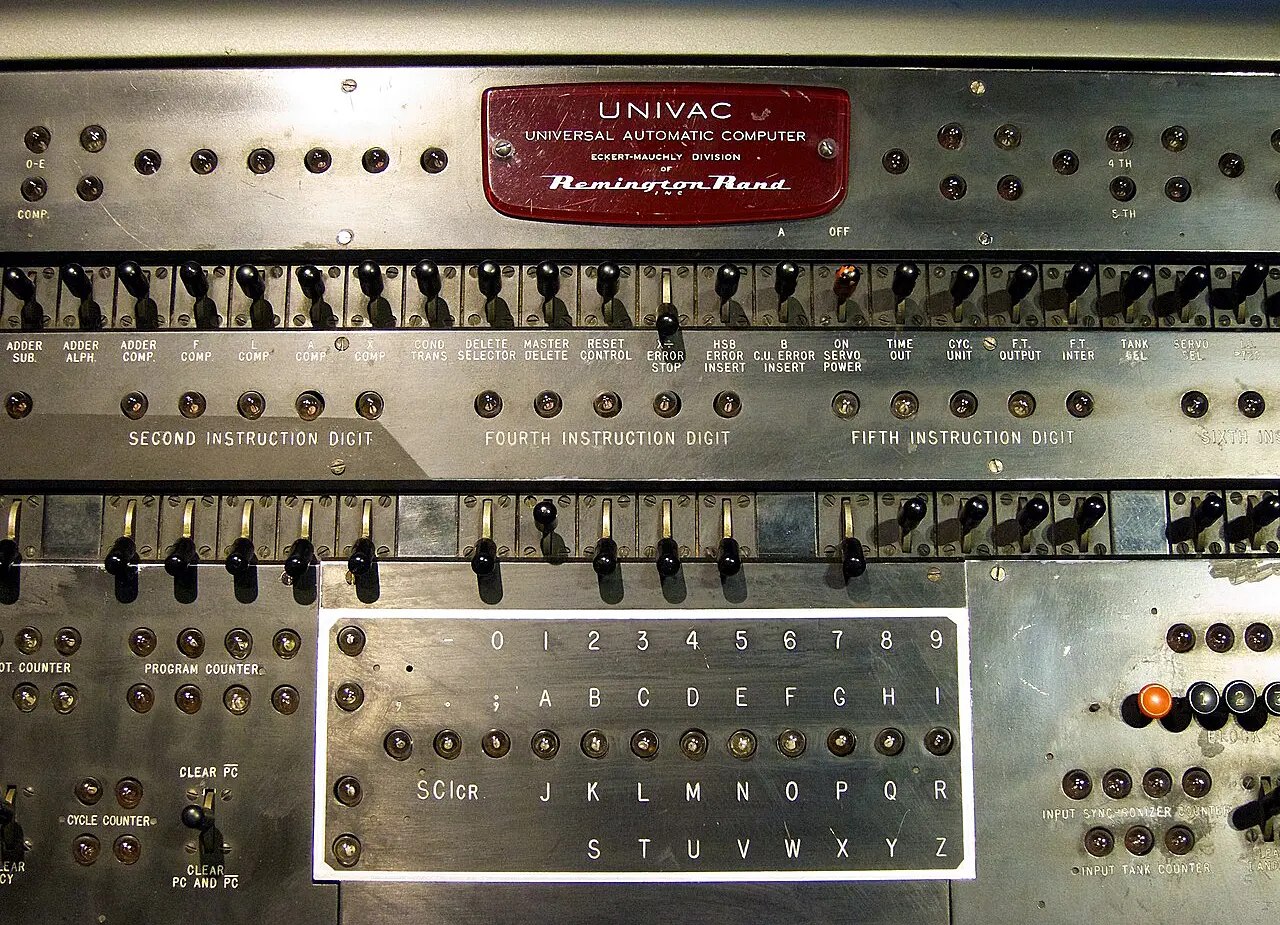

The UNIVAC I (Universal Automatic Computer I), introduced in 1951, was the first digital computer designed for commercial use in the United States. It was a room-sized system powered by thousands of vacuum tubes, using magnetic tape drives along with punch cards. UNIVAC could execute approximately 1,905 operations per second, and it employed mercury delay line memory capable of storing 1,000 words of 12 characters each.

This hardware allowed UNIVAC to handle both numeric and alphabetic data at unprecedented speeds for its time. It was programmable, universal in purpose, and significantly outpaced earlier mechanical and electromechanical systems.

The machine sold for between $1.25 and $1.5 million, which limited its potential to spread across industries. But its cultural impact was such that it quickly became a symbol of the new era of machine-powered thinking.

B11_UNIVAC

B11_UNIVAC

B12_UNIVAC_CENSUS_GE

Purchasing UNIVAC was a gamble as much as an investment. At more than a million dollars and with no proven market, the machine asked its buyers to take a leap of faith in electronic processing. This wasn’t about chasing marginal efficiency — it was about entrusting entire record systems to circuits and tape.

That choice carried symbolic weight: in placing confidence in a black box of logic, institutions declared that data itself could be managed by machines. The wager would be tested immediately in practice, beginning with the U.S. Census Bureau.

B12_UNIVAC_CENSUS_GE

UNIVAC in Practice — From Census to Payroll

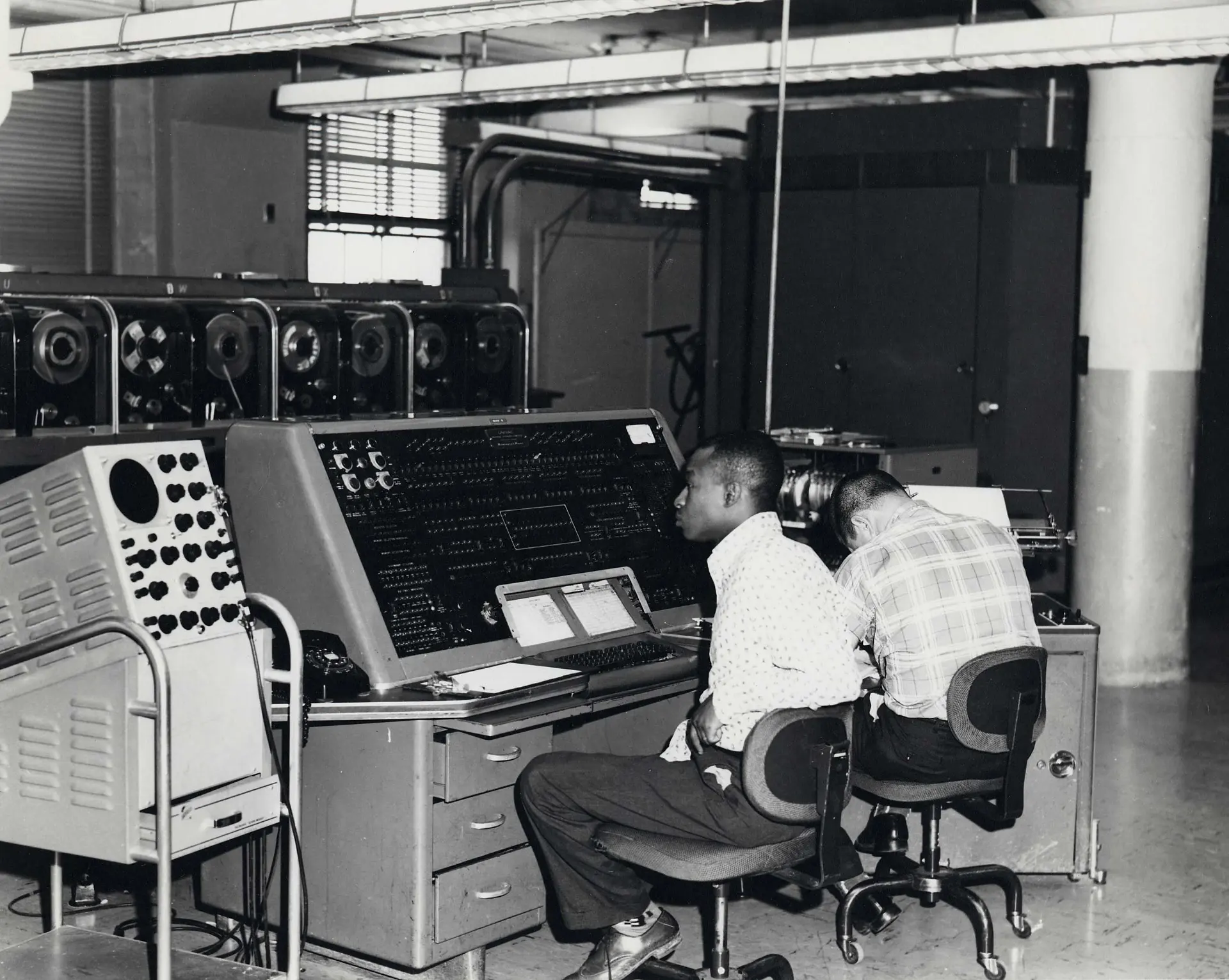

UNIVAC’s debut wasn’t in theory — it was in practice. The U.S. Census Bureau became the first non-military, non-academic institution to operate an electronic digital computer. The agency used it to tabulate census data, performing large-scale record matching and statistical analysis that would have taken human clerks weeks or months.

This early deployment in 1951 was so successful that the Bureau later declared UNIVAC had never been involved in any error incident — a powerful vote of confidence in machine logic at a time when skepticism ran deep. Soon after, General Electric became the first private business to use UNIVAC, adopting it for payroll computation.

At the time, IBM dominated government installations. Their tabulators were already embedded across agencies. Many public-sector computing offices were effectively IBM-run — staffed by former IBM employees, running IBM systems, and thinking in IBM workflows. UNIVAC, though technically superior, was considered an outsider.

As one official put it:

“If I recommend UNIVAC and it fails, I made a mistake. If I recommend IBM and it fails, IBM made a mistake.”

B12_UNIVAC_CENSUS_GE

B12_UNIVAC_CENSUS_GE

B13_UNIVAC_GOVERNMENT

To adopt UNIVAC in the early 1950s was to defy the gravitational pull of IBM. Its tabulators and staff dominated the U.S. government offices so thoroughly that breaking away looked like betrayal. Choosing UNIVAC meant stepping outside the safe default, accepting personal responsibility if the new system failed.

For the Census Bureau and General Electric, that decision carried as much political risk as technical risk — a calculated act of institutional defiance that showed electronic processing could challenge the incumbent order.

B13_UNIVAC_GOVERNMENT

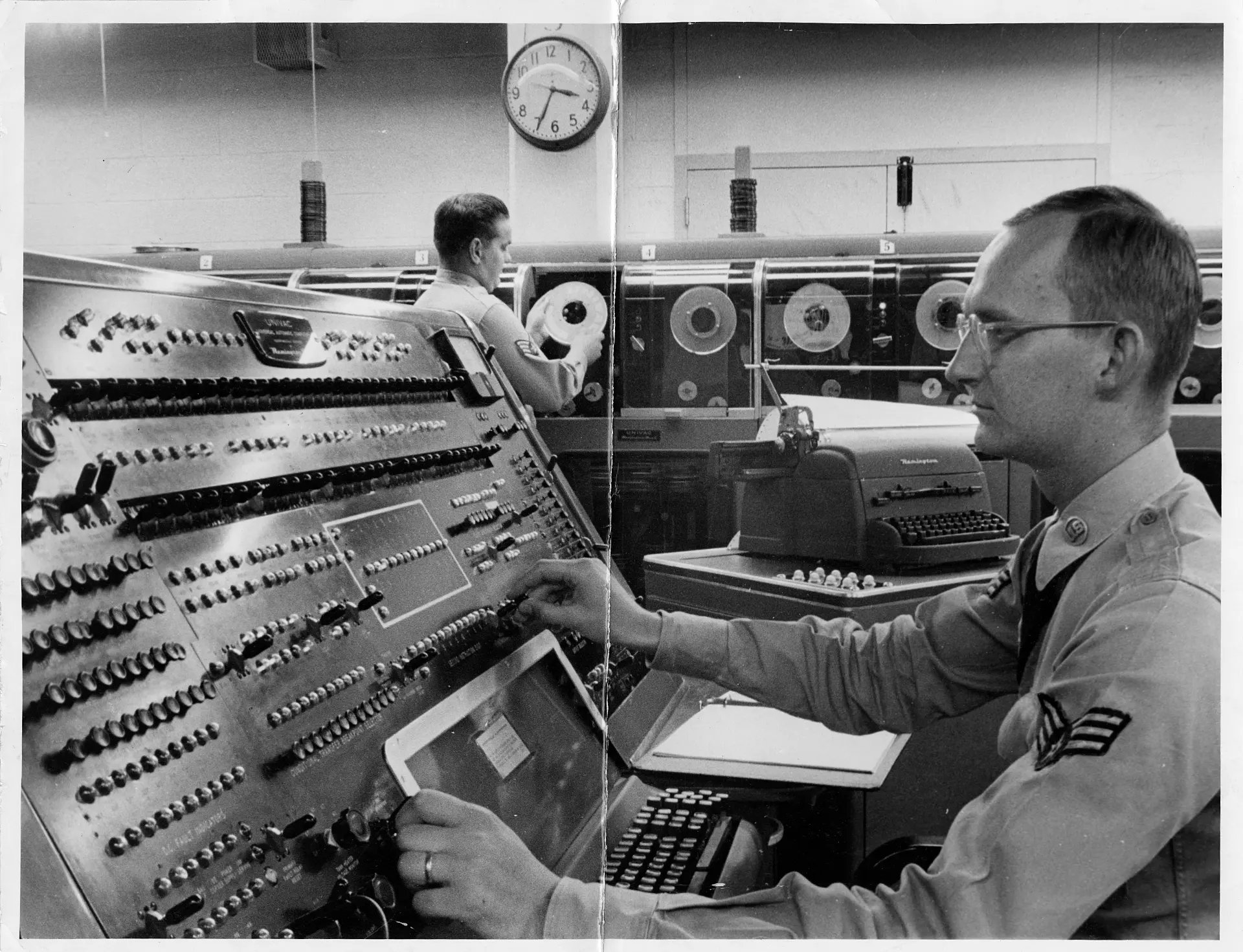

UNIVAC in Government and Military — Cold War Logistics

By the mid-1950s, the U.S. Air Force and other federal agencies had adopted UNIVAC systems for logistical planning, missile tracking, and resource scheduling. These systems weren’t designed for battlefield control, but served instead as part of the administrative backbone of Cold War operations.

UNIVAC’s ability to process alphabetic data was a major advantage, allowing for more complex record-keeping and scenario modeling than number-crunching machines alone.

Toward the end of the decade, Remington Rand’s UNIVAC division was bidding alongside IBM for government defense contracts, embedding itself in the infrastructure of federal automation. Unlike IBM’s tightly controlled systems, UNIVAC’s flexibility and tape-based I/O made it attractive for unconventional environments — from defense logistics centers to early weather modeling programs.

B13_UNIVAC_GOVERNMENT

B13_UNIVAC_GOVERNMENT

B14_UNIVAC_ENGINEERS

UNIVAC’s adoption by the Air Force showed how civilian business machines could be repurposed as tools of strategy. The same architecture that processed payrolls or census returns was reoriented toward missile inventories and nuclear logistics.

This blurred line between corporate data work and Cold War planning signaled a shift: information systems themselves had become part of the arsenal.

B14_UNIVAC_ENGINEERS

UNIVAC Engineers — From Startup to Corporate Backing

J. Presper Eckert and John Mauchly, already known for ENIAC, left wartime research to form the Eckert–Mauchly Computer Corporation. Their aim was to build a “universal automatic computer” that could handle business records as easily as equations.

UNIVAC began not with a corporation but with two engineers pushing an idea beyond ballistics. But their startup struggled: capital was scarce, the market uncertain, and the technology untested.

In 1950, the project was absorbed by Remington Rand, a typewriter and office-equipment giant with money and distribution power. That merger kept the machine alive — and with it, the possibility of a commercial computing industry.

B14_UNIVAC_ENGINEERS

B14_UNIVAC_ENGINEERS

B16_FERRANTI

The transfer from Eckert and Mauchly’s fragile startup to Remington Rand revealed how computing needed more than invention — it needed industry. Ideas that began as technical experiments survived only when attached to corporate pipelines of capital, manufacturing, and sales. UNIVAC’s persistence was less about isolated genius than about finding an institutional body large enough to carry it forward.

B16_FERRANTI

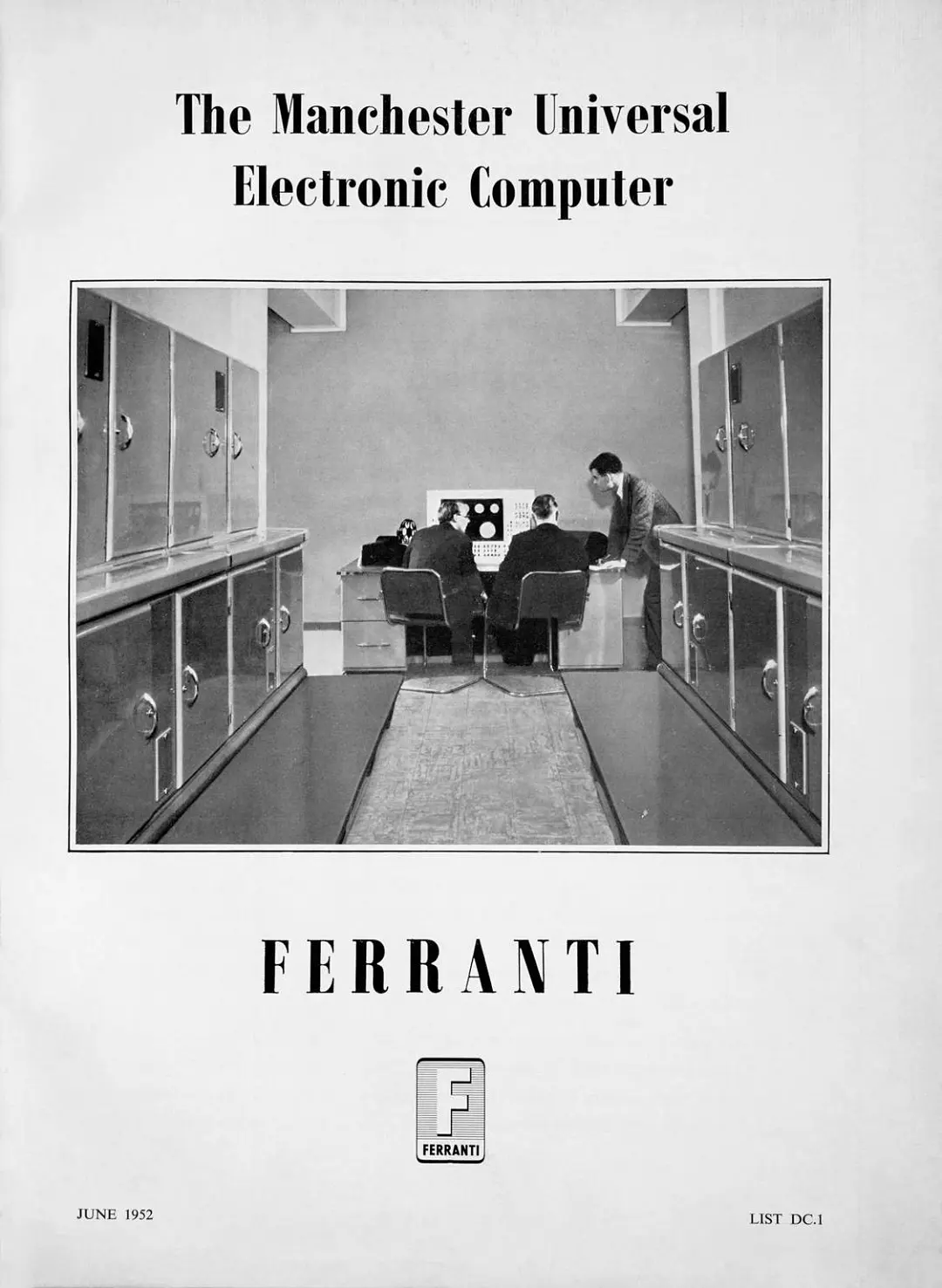

Ferranti Mark I — Britain’s First Commercial Computer

While UNIVAC made waves in the U.S., the UK had its own breakthrough in 1951: the Ferranti Mark I, derived from the Manchester Mark I project at the University of Manchester. It holds the distinction of being the first commercially available stored-program digital computer — predating UNIVAC by a few months. While primarily delivered to research institutions, it marked the beginning of programmable computing as a product.

The machine was designed to process 20-bit words, with a memory architecture that included vacuum tube storage (each tube holding 64 words) and a magnetic drum with capacity for 512 pages. It sold for between £50,000 and £100,000, and crucially, was made available beyond the confines of academia or government labs.

The Ferranti Mark I supported a wide range of programs, from business analytics and scientific modeling to experimental computer music and some of the first computer games. Its stored-program design — the ability to modify instructions directly in memory — allowed far more complex data manipulation than earlier fixed-instruction machines.

B16_FERRANTI

B16_FERRANTI

B17_DNEPR

The Ferranti Mark I hinted at a new order, where software and algorithms could evolve independently of hardware. In Britain, the machine also served as a proof that national research could be translated into products and infrastructures to rival American advances.

B17_DNEPR

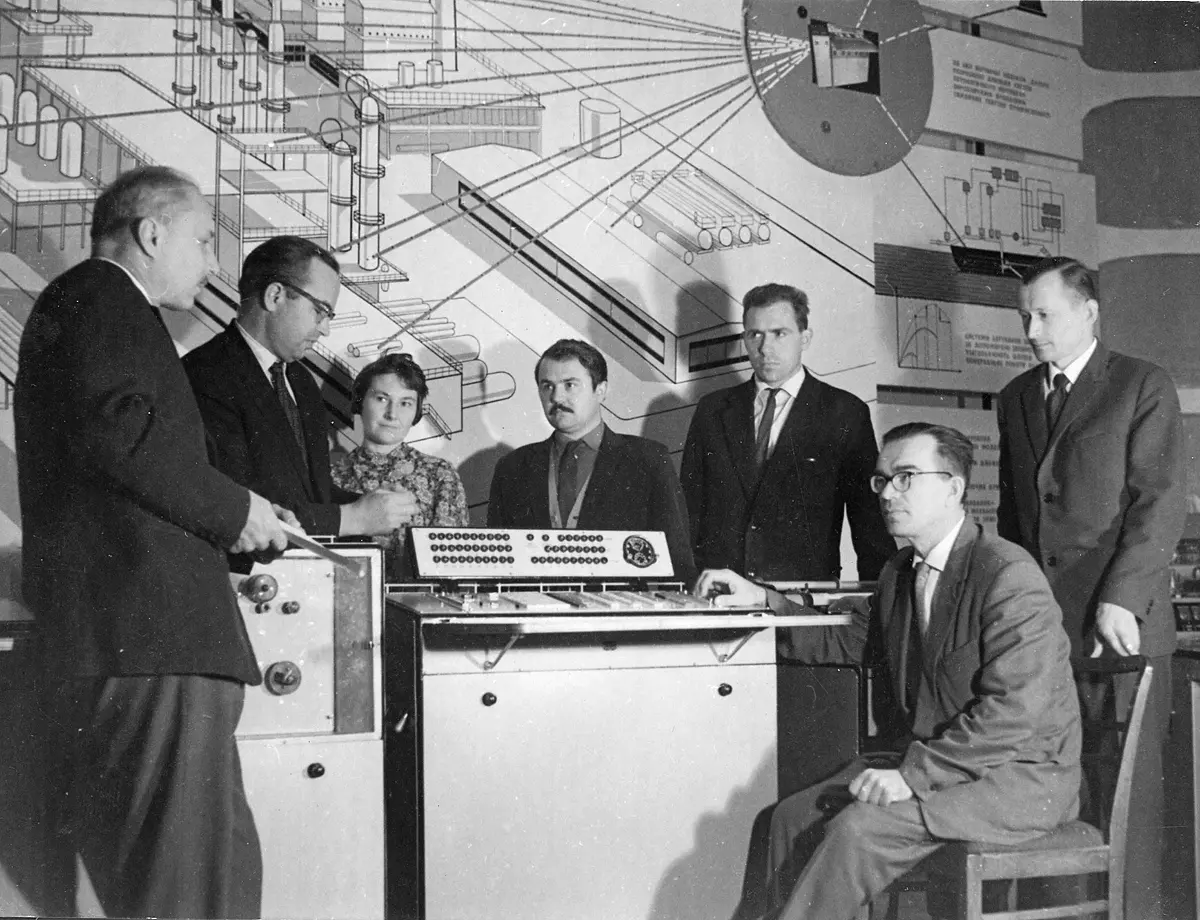

Dnipro (Dnepr) — Soviet Transistorized Computing

The Dnipro (Dnepr) computer, developed in Kyiv, Ukraine in the late 1950s and early 1960s, marked a key shift in Soviet computing: the move from vacuum tubes to transistors. It was part of a broader effort to modernize scientific and industrial computation across the USSR and its satellite states.

Designed for engineering calculations, process control, and scientific modeling, it found applications in aerospace, power generation, and metallurgy. Its general-purpose design allowed deployment across state institutions, though use remained tightly controlled by government and industrial planners.

Despite limited commercialization, the system marked the Soviet Union’s entry into the transistor era and its attempt to match Western advances in computing.

B18_CER

CER — Yugoslavia’s Independent Computing Path

Yugoslavia entered the digital age in the 1960s and 1970s with the CER (Cifarski Elektronski Računar) series, developed in Belgrade by the Mihajlo Pupin Institute. It was one of the first independent national computing initiatives outside the United States and the Soviet Union.

Early models like the CER-10 used transistor-based architecture with punched cards for input, later adding magnetic tape for storage and backup. The system featured 4 KB of magnetic core memory, expandable in later versions, and processing speeds in the tens of thousands of operations per second.

CER machines were applied to scientific research, state statistics, and media tasks such as automated news distribution. They played a central role in modernizing Yugoslavia’s administrative and information infrastructure.

B18_CER

B18_CER

B19_CANNING_EDP

Yugoslavia’s CER machines embodied a political claim: that digital infrastructure could be built outside the Cold War superpowers. In practice, their role was less about rivalry than about administration. This was part of a broader turn in the 1960s, when computing itself began to be defined less by machines and more by the management of processes — what engineers and managers alike started to call electronic data processing.

B19_CANNING_EDP

Canning and Electronic Data Processing — Defining EDP

In 1956, statistician Richard Canning gave currency to the term Electronic Data Processing (EDP) to describe the systematic use of computers for managing information. He broke it into three integrated functions:

- Decision support — automating lower-level management choices using structured data.

- Order issuance — translating those decisions into paperwork such as production orders, invoices, and notices.

- Feedback control — comparing outputs with goals and flagging discrepancies for correction.

This definition emphasized decomposing workflows into discrete steps, sequencing tasks, and keeping data stored, checked, and synchronized. EDP became a framework for applying computers to large-scale projects, from military logistics to industrial production and business operations.

B19_CANNING_EDP

B19_CANNING_EDP

Cluster B2

By the mid-1950s, computing was no longer defined only by hardware. UNIVAC, Ferranti, Dnipro, and CER showed how machines could take root in government, business, and national projects. Canning’s language of electronic data processing gave this shift a name: computers started organizing flows of information into decisions, orders, and controls.

B2

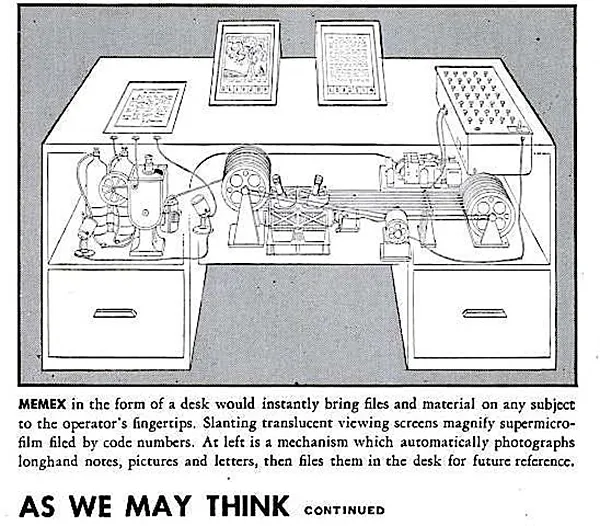

Information Inventory

As computers spread into business and government in the 1960s, new ambitions emerged. The aim was no longer to automate single tasks, but to integrate every record of an organization into one system.

Engineers and managers began to imagine “information inventories” — digital mirrors of enterprises where payroll, logistics, production, and planning could be stored, searched, and updated in real time. These visions pushed computing from isolated applications toward models of total information management.

B21_INFORMATION_INVENTORY

Information Inventory — Toward Integrated Business Data

To make “information inventory” usable, designers emphasized indexing and navigation: maps and keys were imagined as ways to move through an ocean of records.

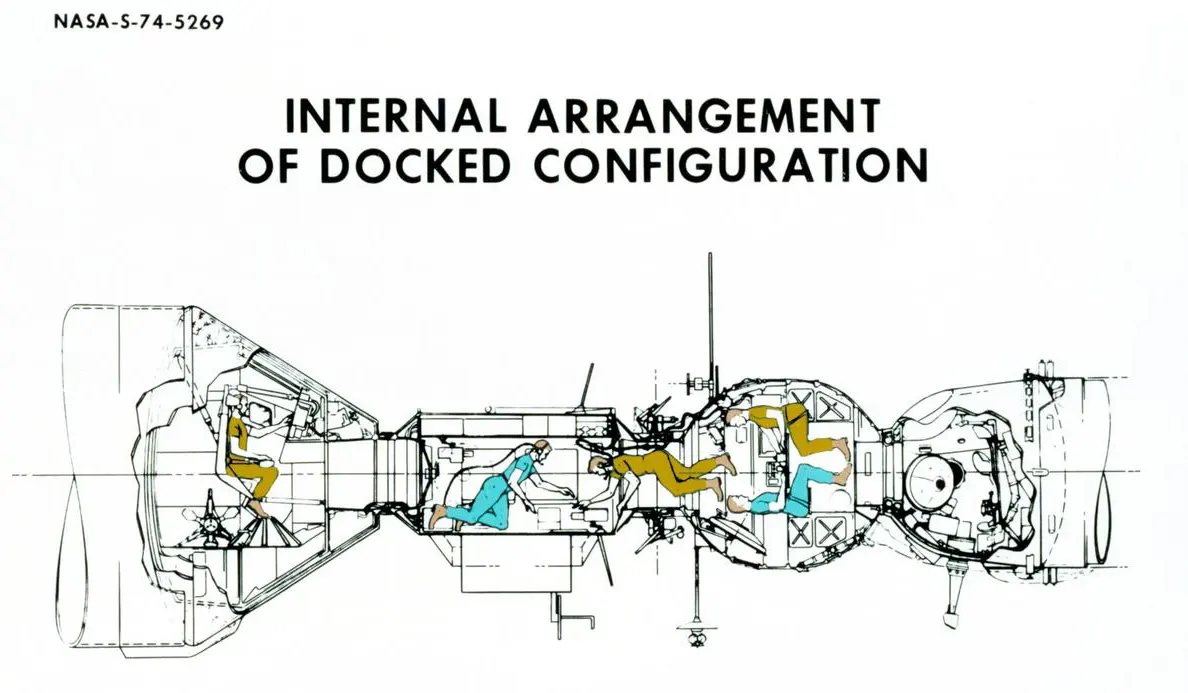

The urgency of integration grew as industrial systems became more complex: NASA’s Apollo program, for instance, involved millions of components, while military logistics had already outgrown manual handling.

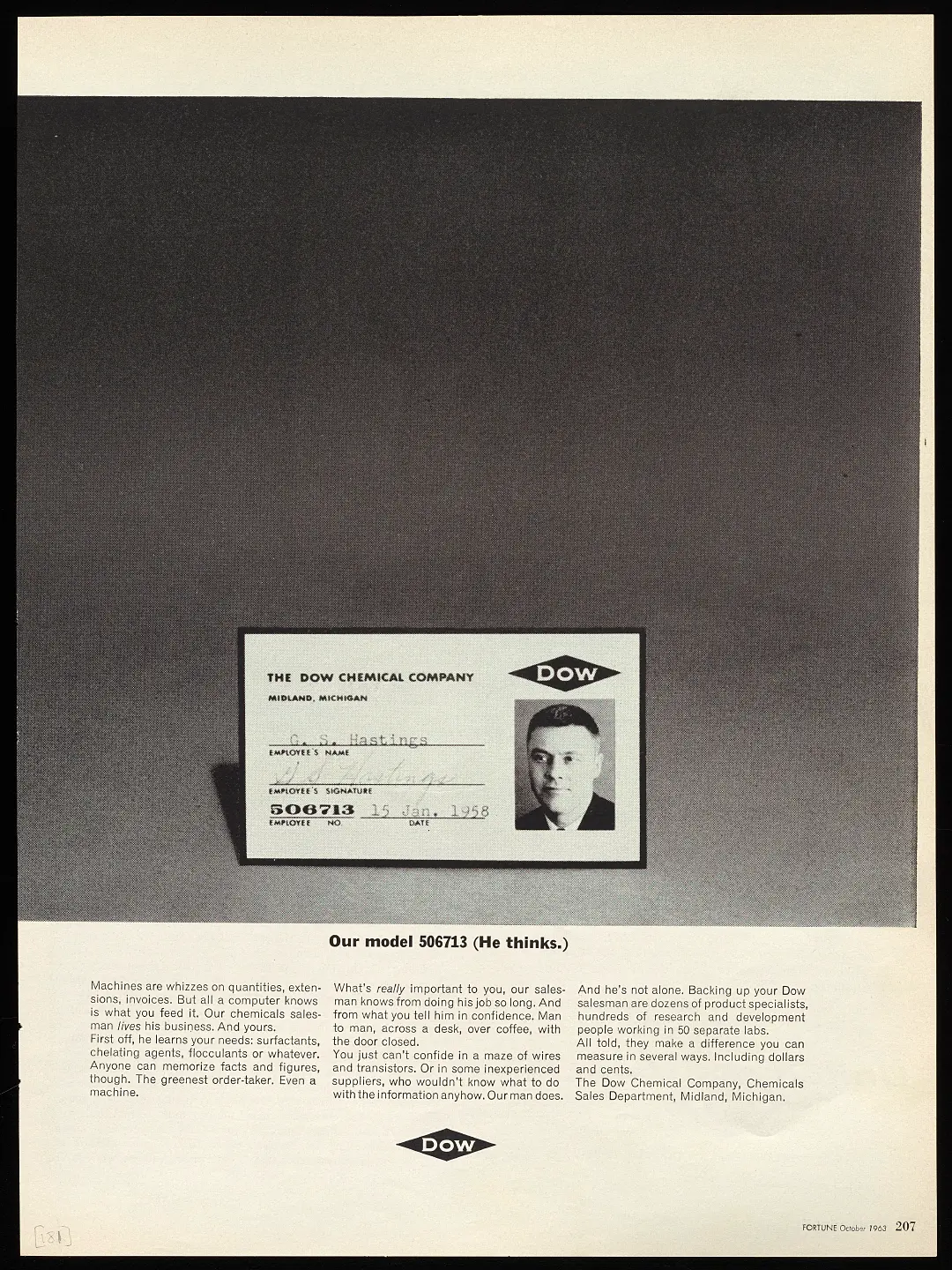

At Dow Chemical and later at General Electric, Charles Bachman faced the limits of separate departmental files. Dow’s early attempts at integration never took hold, but he carried forward the vision of shared, navigable data that could link records across the entire company.

B21_INFORMATION_INVENTORY

B21_INFORMATION_INVENTORY

B22_BACHMAN_IDS

The information inventory concept reframed computing as a tool of administration. Instead of automating individual paperwork tasks, it sought to coordinate entire organizations by aligning data with managerial routines. Decision support, order issuance, and feedback control became part of a single cycle, with computers positioned as the infrastructure for running enterprises.

B22_BACHMAN_IDS

Bachman and IDS — Inventing the Network Model

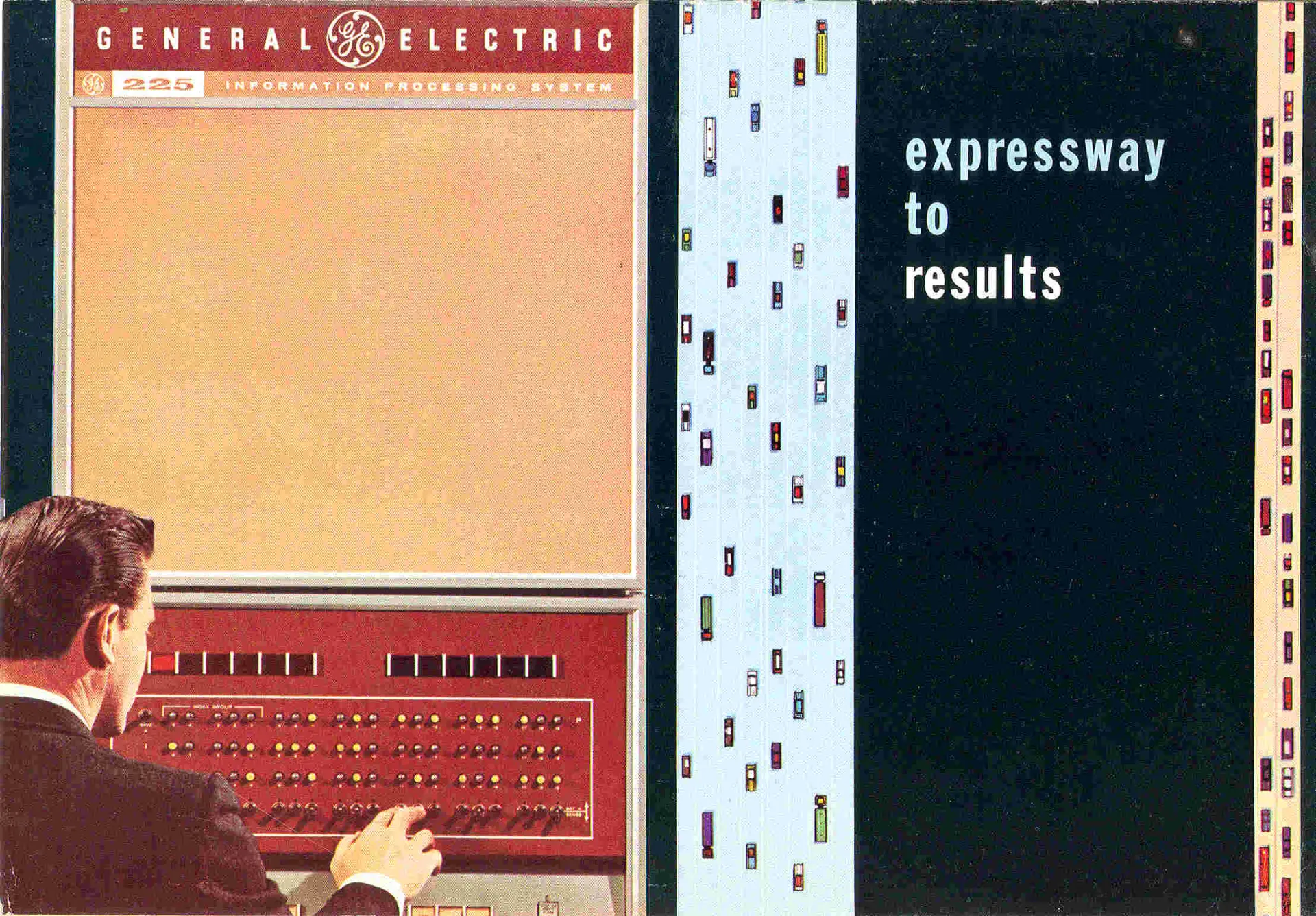

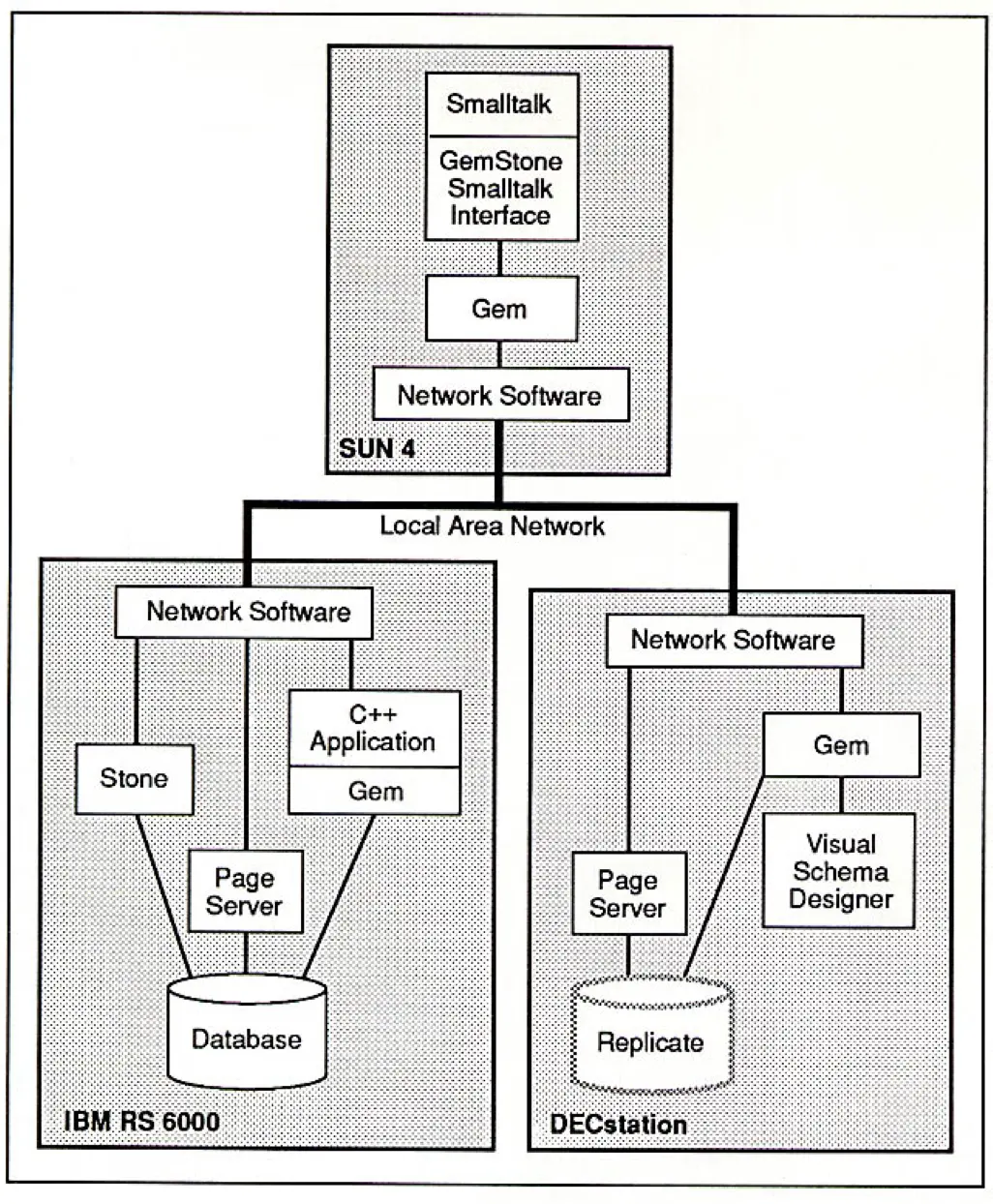

At General Electric in the early 1960s, Charles Bachman led the development of the Integrated Data Store (IDS) — one of the earliest database management systems. IDS introduced the concept of an information inventory: a centralized, disk-based repository of shared records that could be queried and updated by multiple applications.

This architecture enforced relationships between records — what would later be called the network data model. IDS supported a data dictionary to track record structures and allowed programmers to retrieve or update data using early forms of a data manipulation language.

IDS ran on the GE-225 computer, paired with the MRADS disk system — offering random access to data, rather than the sequential tape storage common at the time. Its first major deployment, MIACS (Manufacturing Information and Control System), provided real-time production control at a GE factory in Philadelphia.

By unifying data storage and enabling shared access, IDS became the prototype for modern database systems. In 1973, Bachman received the Turing Award for this work.

B22_BACHMAN_IDS

B22_BACHMAN_IDS

B23_WEYERHAEUSER_IDS

IDS showed the capability of computing as a platform for coordination: production schedules, inventories, and records could be aligned within a single system. What began as a technical experiment inside GE pointed to a new model of processing — data as a common resource rather than a series of isolated tasks.

B23_WEYERHAEUSER_IDS

Weyerhaeuser IDS — A Real-Time Business Network

In 1965–1966, Weyerhaeuser, a major U.S. timber company, became the first non-GE customer to implement IDS — turning the database into the foundation for a real-time, nationwide business system.

The setup linked over 100 remote teletype terminals to a central GE-235 computer using a DATANET-30 network. Orders placed across the country were entered directly into the system, which updated inventory, managed shipping logistics, and generated invoices.

The system used Problem Controller, an early form of online transaction processing (OLTP), to prioritize and manage concurrent requests. At peak demand, transaction volumes briefly overwhelmed the system, but the architecture proved robust and adaptable.

B23_WEYERHAEUSER_IDS

B23_WEYERHAEUSER_IDS

B24_SOVIET_PARADOX

Weyerhaeuser’s system showed how databases could become part of the operating core of a company. Inventory, logistics, and orders were no longer separate files but interconnected records managed in real time. In this setting, computing stopped being an accessory to administration and became its medium. The lesson was that processing power could coordinate entire organizations.

B24_SOVIET_PARADOX

The Soviet Information Paradox — Power, Plans, and Resistance

By the late 1950s, the Soviet Union faced a contradiction: while its military and scientific sectors deployed digital computers with growing sophistication, civilian institutions — especially Gosplan, the State Planning Committee — remained wary of adopting the same tools.

The USSR had previously used imported tabulators like Powers and Hollerith machines, later building its own analogs such as the T-5, exported to other socialist countries. But the leap to electronic computing was politicized. Even as Gosplan quietly launched its own Computer Center in 1959 and installed its first Ural-2 machine in 1960, internal resistance to large-scale data automation persisted.

The paradox deepened in the 1960s. Despite new equipment — including British Elliott 403 and ICL System-4 machines — and cooperation with East Germany on ALGOL translators, Gosplan remained cautious.

A proposed national automation system, the OGAS network, led by cybernetician Victor Glushkov, envisioned a nationwide digital system for economic planning. But it was blocked — not due to technical failure, but because it threatened existing institutional control over data flows. In the Soviet model, information was not only infrastructure but also political power.

B24_SOVIET_PARADOX

B24_SOVIET_PARADOX

B25_OGAS_PROJECT

In the Soviet Union, computing could never be treated as neutral infrastructure. Cybernetics itself had once been denounced as “bourgeois pseudoscience,” and even after its partial rehabilitation, every attempt to expand automation ran into political suspicion.

The OGAS project revealed the paradox in sharp form: the technical capacity to build a nationwide planning network existed, but implementation meant redistributing authority. Ministries defended their own data, party officials feared loss of control, and information itself became a contested resource. Processing power was available — but it could not be separated from the politics of who was allowed to use it.

B25_OGAS_PROJECT

OGAS — The Internet That Almost Was

In the 1960s, Soviet cybernetician Victor Glushkov proposed a nationwide network of computers to manage the USSR’s economy in real time. Called OGAS (Общегосударственная автоматизированная система учёта и обработки информации), or the National Automated System for Computation and Information Processing, it aimed to unify factories, ministries, and planners in a single, continuously updated digital infrastructure.

The proposed system included regional computing centers linked to central hubs in a three-tier architecture, real-time inputs from across industries, and automated modeling to aid planning. Terminals were planned for remote enterprises, from industrial plants to mining sites and state-owned farms.

Glushkov’s team at the Kyiv Institute of Cybernetics had experience in systems design, and prototypes for smaller-scale implementations already existed. But OGAS never launched.

The system promised transparency, efficiency, and redistributed authority, challenging the entrenched gatekeepers of economic knowledge. Despite repeated proposals through the late 1960s and early 1970s, OGAS was quietly defunded, gutted, and eventually abandoned.

B25_OGAS_PROJECT

B25_OGAS_PROJECT

B26_GE225_VS_IBM1401

The Soviet Union had the expertise to design a nationwide network, but not the institutional flexibility to allow it to function. In the same decade, American firms were locked in market competition, refining business data processing into the standard model that still shapes enterprise computing today. The contrast showed how processing could evolve into infrastructure — or be strangled before it began.

B26_GE225_VS_IBM1401

GE-225 vs IBM 1401 — Rival Models of Business Computing

In the early 1960s, two very different machines shaped how organizations approached digital information: the General Electric GE-225 and the IBM 1401.

The GE-225, introduced in 1960, was built for power and flexibility. It featured simultaneous input/output handling, supported both alphabetic and numeric data, and could connect to disk drives like MRADS. Input came from magnetic tape, punched cards, MICR documents, and even paper tape, with long-distance connections supported via the DATANET-15. It was a machine for custom-built systems like real-time data integration.

Meanwhile, the IBM 1401, released in 1959, took the market by storm with its affordability and reliability. Its six-bit character system, magnetic core memory (4K–16K), and decimal arithmetic made it perfect for payroll, billing, and inventory. Leased for around $2,500/month, it brought computing within reach of small and mid-sized businesses — earning the nickname "the Model T of the computer industry."

Their rivalry wasn’t just about specs — it was about philosophies:

- IBM offered safe, standardized tools for the mainstream.

- GE pushed toward modularity, extensibility, and integration.

The GE-225 later found academic life as well — a time-sharing version at Dartmouth became the birthplace of the BASIC programming language.

B26_GE225_VS_IBM1401

B26_GE225_VS_IBM1401

Cluster B3

By the mid-1960s, databases were no longer only technical experiments. They had become tools for business, contested instruments of state planning, and competing visions of how information should be organized. Across the Atlantic divide, companies and governments pursued different strategies, but the stakes were converging: whoever mastered the storage and movement of records would shape how economies functioned.

B3

COBOL — From Business Language to Standard

At the turn of the 1960s, computers were already processing vast quantities of business data, but they lacked a common language. Each manufacturer promoted its own programming systems, locking users into specific machines and workflows.

The push for a business-oriented language brought together an unusual alliance. Out of that effort came COBOL, designed not for scientists but for clerks and managers, promising to make programs portable and data processing more standardized.

B31_COBOL_CREATION

The Birth of COBOL — A Business Language for All

In May 1959, over 40 representatives from government, industry, and academia gathered at the Pentagon to address a growing problem: early computer languages were difficult to learn and maintain, limiting business adoption. Their solution was CODASYL — the Conference on Data Systems Languages — a volunteer group aimed at creating a common, English-like programming language for business.

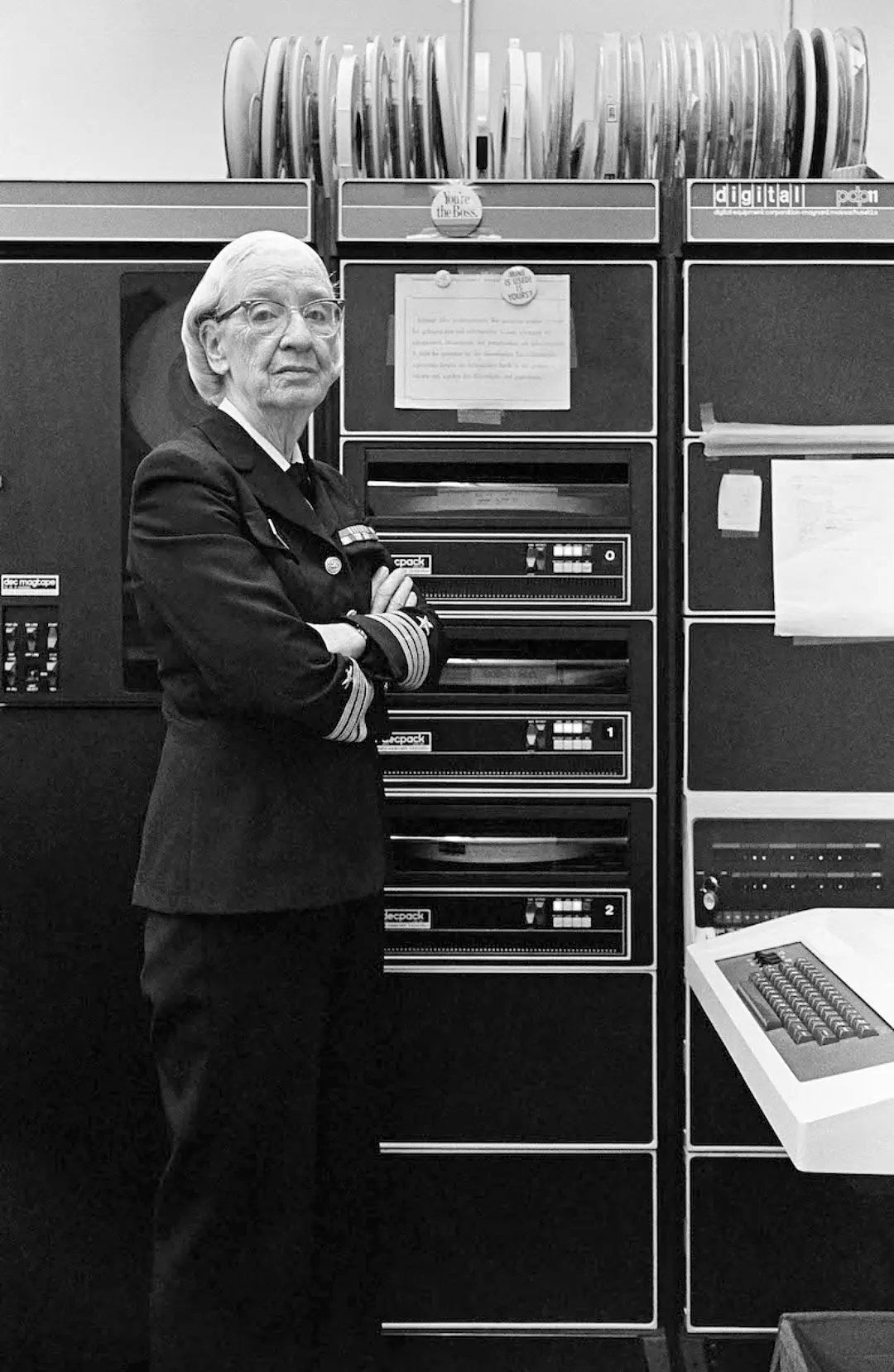

At the time, Grace Hopper’s FLOW-MATIC, developed at Remington Rand UNIVAC, was the only business-oriented language using human-readable commands. Hopper, a pioneering computer scientist and naval officer, had long championed making programming more accessible. The committee, with members from Burroughs, Honeywell, IBM, RCA, Sperry Rand, and Sylvania, drew inspiration from her work.

On December 6, 1960, a live demonstration proved COBOL could compile and run the same code on both UNIVAC and RCA systems — a landmark moment for interoperability.

B31_COBOL_CREATION

B31_COBOL_CREATION

B32_HARDWARE_OF_COBOL

COBOL’s creation reframed how processing was understood. Instead of writing cryptic machine code, programmers could issue instructions in something close to plain English. This shift lowered the cost of training and expanded the pool of potential programmers.

In practice, teaching machines a human-readable language proved easier than expecting managers and engineers to learn machine dialects. Standardization turned programming into a shared resource, laying the foundation for software as an industry.

B32_HARDWARE_OF_COBOL

Hardware of COBOL — The Machines Behind the Language

COBOL wasn’t designed in a vacuum — it was shaped by the constraints and capabilities of the early business computers it was meant to run on. The language was intended for high-volume, data-driven tasks on large systems like the UNIVAC I and II, IBM 705, Honeywell 800, RCA 501, Sylvania MOBIDIC, and Burroughs B-5000.

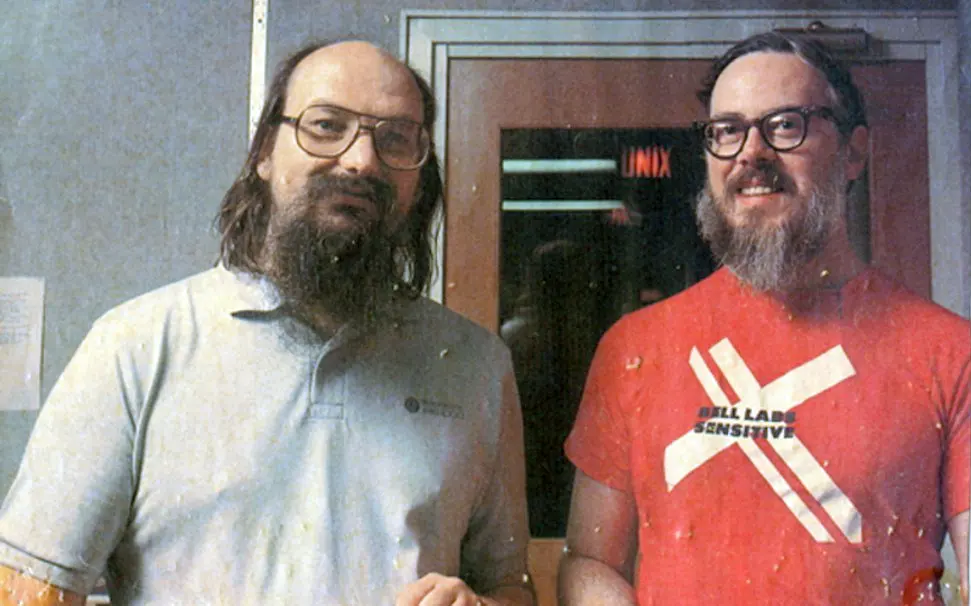

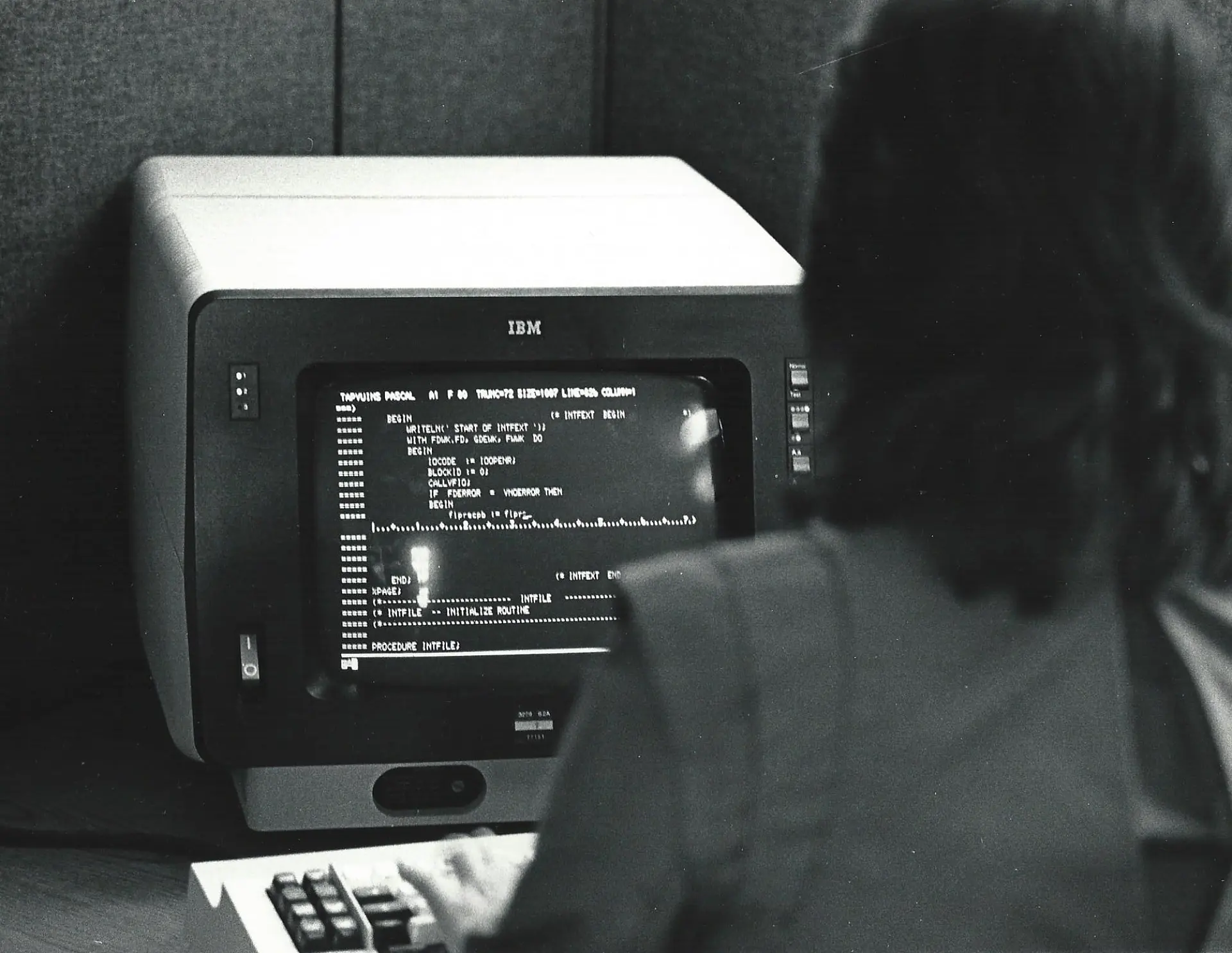

Grace Hopper speaking in 1982 about running COBOL and FORTRAN on various machines.

These machines varied widely in architecture and I/O capabilities, but COBOL’s standardization effort required them to speak a shared language. This forced a shift in how hardware designers thought about software compatibility, and it catalyzed interest in portable, high-level languages. The 1960 live demonstration of COBOL running on both UNIVAC and RCA systems proved the concept.

B32_HARDWARE_OF_COBOL

B32_HARDWARE_OF_COBOL

B33_EARLY_COBOL_APPLICATIONS

COBOL’s portability redefined the relationship between software and hardware. Programs could be conceived as transferable instructions, no longer bound to a single processor’s quirks.

That shift encouraged organizations to think of computing not in terms of registers and opcodes, but in terms of tasks, records, and flows of data. The idea of software as infrastructure began to take hold: an independent layer of logic that could outlive the machines it ran on.

B33_EARLY_COBOL_APPLICATIONS

Early COBOL Applications — From Contract Coding to AUTOFLOW

COBOL’s standardization opened the door to an entirely new market: commercial software development. In 1959, Advanced Data Research (ADR), a New Jersey–based firm, began offering contract programming services, specifically advertising COBOL expertise in Datamation magazine by 1961.

One of ADR’s key products was AUTOFLOW, an application that automatically generated flowcharts from COBOL source code. At a time before video terminals, visualizing program logic on paper was essential. AUTOFLOW helped users trace and document complex logic paths, making it easier to debug, explain, or maintain large-scale software.

B33_EARLY_COBOL_APPLICATIONS

B33_EARLY_COBOL_APPLICATIONS

B34_SOVIET_COBOL

The spread of COBOL moved coding from a specialized craft inside machine-rooms to a professional market of its own. With ADR marketing AUTOFLOW directly to users, programming was becoming a business, with products, contracts, and careers defined around maintaining the new language of data processing.

B34_SOVIET_COBOL

COBOL Behind the Iron Curtain — Adaptation and Translation

Despite Cold War tensions, the Soviet Union closely followed developments in Western data processing — and COBOL did not go unnoticed. In 1965, the Kyiv-based journal Kibernetika published a technical overview of COBOL by Lyudmila Babenko, helping introduce the language to Soviet scientific circles. She later earned a PhD for formalizing COBOL-style data translation methods for mass data processing.

Soviet adaptations emerged rapidly: the Dnipro-2 control system, built in Kyiv, integrated COBOL-like translators designed by Kateryna Yuschenko’s team. Meanwhile, the Minsk-22 computer from Belarus offered its own compiler variant. These efforts reflected a broader drive to align with international standards while maintaining domestic engineering autonomy.

The arrival of the ES EVM (Unified System of Electronic Computers), based on IBM System/360, brought COBOL-style programming into the mainstream of Soviet and Eastern Bloc computing. By 1975, COBOL had received an official Soviet standard.

B34_SOVIET_COBOL

B34_SOVIET_COBOL

Cluster B4

The Soviet embrace of COBOL signaled that data processing was now central to economic planning, and that aligning with international norms could serve governance as much as engineering.

Across both East and West, COBOL’s spread marked a broader transformation: programming was no longer a patchwork of proprietary codes, but a shared infrastructure for business and government alike. The language made automation portable, training scalable, and software itself a transferable resource. By the mid-1970s, data processing had been reshaped not only by machines but by the codes that told them what to do.

B4

Business Data Management

By the late 1960s, businesses were beginning to think in terms of systems rather than isolated programs. Reservation platforms, financial networks, and enterprise databases bound together thousands of users and millions of transactions.

What distinguished this era was not the invention of new hardware or languages, but the orchestration of processes at scale. Computing was becoming a management tool — one that promised to coordinate complex organizations as efficiently as it handled numbers.

B41_DBMS_EMERGENCE

Managing Data — From Reports to Databases

In the early 1960s, computing began to move beyond producing static reports. Organizations were starting to manage dynamic, shared datasets. The first genuine database management systems (DBMSs) — GE’s IDS (1964) and IBM’s IMS (1965) — were built for disk drives and introduced persistent, structured storage that could be reused across applications.

The concept of a “database” itself came out of Cold War defense projects. In 1962, the System Development Corporation, which had worked on the SAGE air defense network, described shared repositories of information accessible to multiple users and programs — a step beyond archived output.

By the late 1960s, business computing circles were converging on the same need for consistent storage and retrieval. The CODASYL Data Base Task Group, backed by firms such as RCA and Remington Rand, began formalizing DBMS architecture. Their work intersected with advances in software portability and the push for standard program–data interfaces.

B41_DBMS_EMERGENCE

B41_DBMS_EMERGENCE

B42_DBMS_IBM_USERGROUPS

The move from reports to databases marked a conceptual shift: data was no longer treated as the end product of computation but as a resource to be stored, reused, and shared. Once businesses and governments began to see information as a live asset, the database became a platform for everything else — planning, coordination, and decision-making.

B42_DBMS_IBM_USERGROUPS

IBM’s Role — User Groups and the Rise of IMS

In 1957, IBM users formed SHARE and GUIDE groups to collaborate on programs for new hardware. Projects like SURGE and 9PAC extended the IBM 704/709’s capabilities. Though IBM didn’t develop them, it later supported and maintained 9PAC, seeing its value. These early systems experimented with data dictionaries, file hierarchies, and routines for migrating between hardware environments.

Still, tape-based storage had limits. The real breakthrough came when IBM pivoted to disk — and partnered with aerospace contractor North American Rockwell to build IMS (Information Management System). Originally developed to manage components for the Apollo program, IMS pioneered hierarchical data models and multitasking data access.

By the late 1960s, IMS ran on IBM’s System/360 and supported multiple concurrent applications from a single memory image. IBM states that IMS still supports 95% of Fortune 1000 companies today.

B42_DBMS_IBM_USERGROUPS

B42_DBMS_IBM_USERGROUPS

B43_DBMS_HARDWARE

The early experiments of user groups showed that data handling could be organized collectively. IMS took that lesson and institutionalized it: databases were no longer improvised code libraries but packaged systems integrated into corporate computing.

B43_DBMS_HARDWARE

System/360 and Beyond — Hardware for Databases

IBM’s System/360, launched in 1964, introduced modular computers with upgrade paths, standardized architecture, and compatibility across a wide range of peripherals. Its design popularized the 8-bit byte, data channels, and 9-track magnetic tape, pushing the industry toward mass production and interoperability. European and Japanese manufacturers soon followed, building IBM-compatible systems.

Key hardware elements for early database management included:

- Magnetic tape drives for sequential backups and bulk data archiving.

- DASDs (Direct Access Storage Devices) for fast, random access to structured data.

- Punched cards and paper tape as legacy media for data entry and programs.

- Terminals such as the IBM 2260 and Univac Uniscope, which enabled full-page interaction and gave users real-time access to enter, correct, and visualize data.

B43_DBMS_HARDWARE

B43_DBMS_HARDWARE

B44_SABRE_SYSTEM

System/360 architecture created a common platform where storage devices, terminals, and software could work together. For business computing, the change was decisive: data handling moved from overnight reports to interactive use on screens.

Once users could enter, correct, and query records directly, processing unfolded through continuous exchanges between people and systems. This became the foundation for real-time applications.

B44_SABRE_SYSTEM

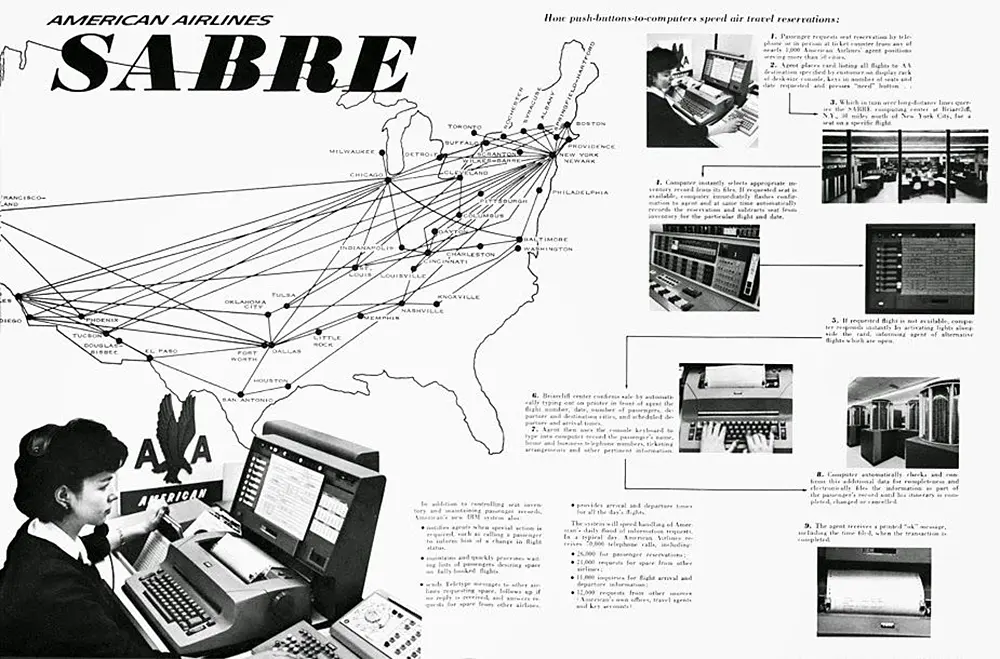

SABRE — The First Real-Time Business System

In the late 1950s, a spontaneous meeting between IBM and American Airlines executives on a flight sparked the idea for a computerized reservation system. Drawing from IBM’s military experience with the SAGE air defense system, the two companies built SABRE — the Semi-Automated Business Research Environment.

Launched in 1960, SABRE became the world’s first commercial real-time data system, handling 83,000 bookings per day via two IBM 7090 mainframes. Its interactive model allowed agents to check seat availability, confirm tickets, and update records instantly, replacing manual booking processes.

B44_SABRE_SYSTEM

B44_SABRE_SYSTEM

B45_OLYMPICS_DB

More than a tool for booking flights, SABRE became a template for real-time systems. Its design showed that records could be updated continuously and shared instantly across a network — a model soon echoed in banking, finance, and logistics.

B45_OLYMPICS_DB

Tokyo Olympics 1964 — Real-Time on a Global Stage

The 1964 Tokyo Olympics posed a monumental data challenge: 7,000 athletes, 100 countries, and up to 25 simultaneous contests across 32 venues. To manage this, IBM Japan deployed a real-time data processing system that transformed event coordination forever.

Using 62 IBM 1050 terminals across venues, scores were sent to a central Olympic Data Center powered by eight IBM computers. With a disk capacity of 56 million characters, the center processed rankings, judge scores, and registration data for 12,000 participants — within seconds. Over 100,000 messages were expected, with three to four times as many replies sent back.

B45_OLYMPICS_DB

B45_OLYMPICS_DB

Cluster B5

SABRE proved that continuous data flows could manage a service industry, while Tokyo Olympics showed that the same logic could coordinate global events. Together they marked a turning point: computing was no longer producing reports after the fact, but sustaining transactions and interactions as they happened.

Processing had become infrastructure — the nervous system through which organizations, and soon societies, would operate.

B5

Foundations of Data Handling

As computing spread through business and government in the 1960s, the challenge was no longer simply to store or transmit information. It was to decide how data should be processed.

Should jobs be collected and run in long, efficient batches, or should systems respond immediately to events as they happened? The answers produced different architectures, each with its own strengths, costs, and trade-offs. The contrast between batch and online processing defined the foundations of modern data handling.

B51_BATCH_VS_OLTP

Batch vs. Online Processing — From Census to Seats

By default, early computing relied on batch processing — collecting data over hours or days, then feeding it into a system for a single, uninterrupted run. This was ideal for repetitive, large-scale jobs like payroll or census tabulation. The method emphasized efficiency in an era when computer time was scarce and expensive.

The shift to Online Transaction Processing (OLTP) enabled systems to handle data in real time, allowing for immediate response to user input. The change was made possible by new magnetic disks (offering random access), faster processors, and networked terminals.

B51_BATCH_VS_OLTP

B51_BATCH_VS_OLTP

B52_DATA_INTEGRITY

The arrival of online transaction processing redefined what computing meant. Data was no longer processed in blocks after the fact but managed as it happened, one transaction at a time. That shift created the architecture of modern systems: continuous, interactive, and responsive.

OLTP became a cornerstone of data handling — but once transactions were live, new questions followed. How could records stay consistent across departments? How could errors be prevented before they spread? Real-time processing made data powerful, but it also made integrity essential.

B52_DATA_INTEGRITY

Data Integrity — Redundancy, Consistency, and Security

In the early years of digital computing, businesses faced a fundamental problem: data was everywhere, and nowhere unified. The same customer’s name or address might live in multiple departmental files, each updated (or not) independently. This data redundancy consumed storage and bred inconsistency. A change made in sales might never reach accounting or shipping.

The emergence of Database Management Systems (DBMS) in the 1960s — such as GE’s IDS and IBM’s IMS — centralized data storage, enabling multiple applications to access the same version of a record.

Centralization also raised the stakes. Errors in one place could propagate everywhere, making data integrity critical: records had to be valid, correct, and complete. Fields were expected to follow strict rules — for example, prices had to be numeric and dates had to conform to valid calendar values. As data became more valuable, concerns about security also grew.

B52_DATA_INTEGRITY

B52_DATA_INTEGRITY

B54_EARLY_INTERFACES

Redundancy, consistency, integrity, and security became the pillars on which modern data systems were built. Every entry had to be checked, every rule enforced, every access controlled.

These principles made data processing trustworthy enough to scale — but they also underscored how technical and mediated the act of using a computer still was. The question of who could interact with these systems, and how, was becoming just as important as the data they safeguarded.

B54_EARLY_INTERFACES

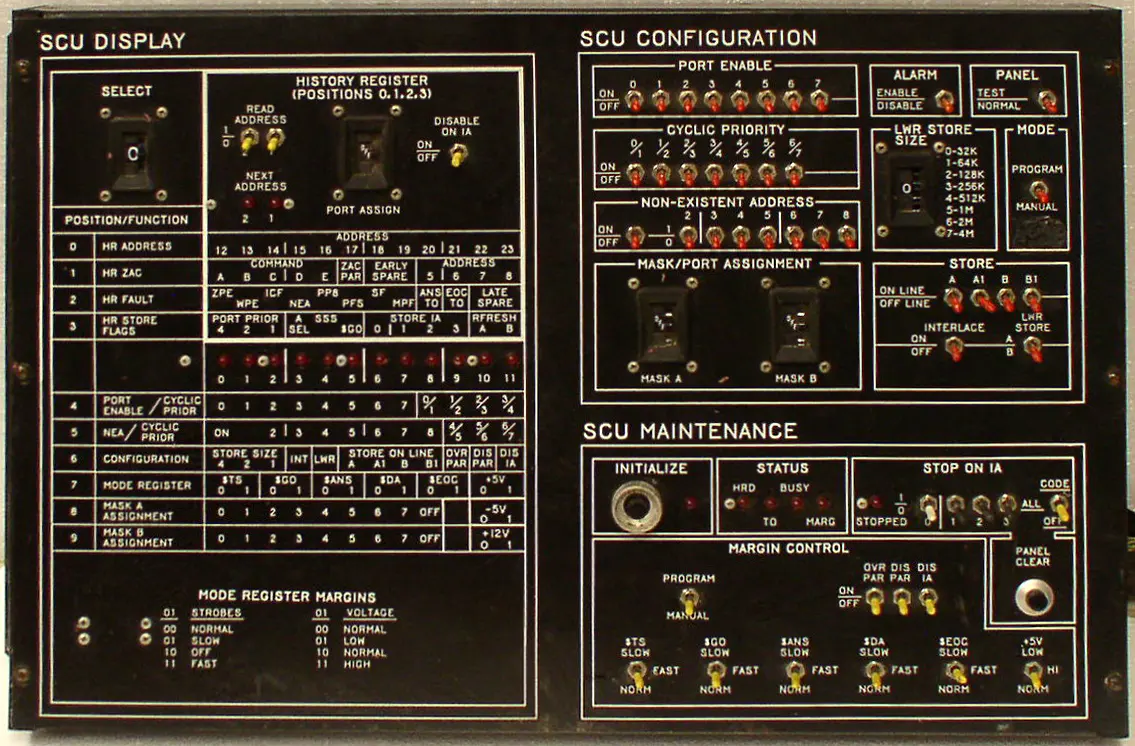

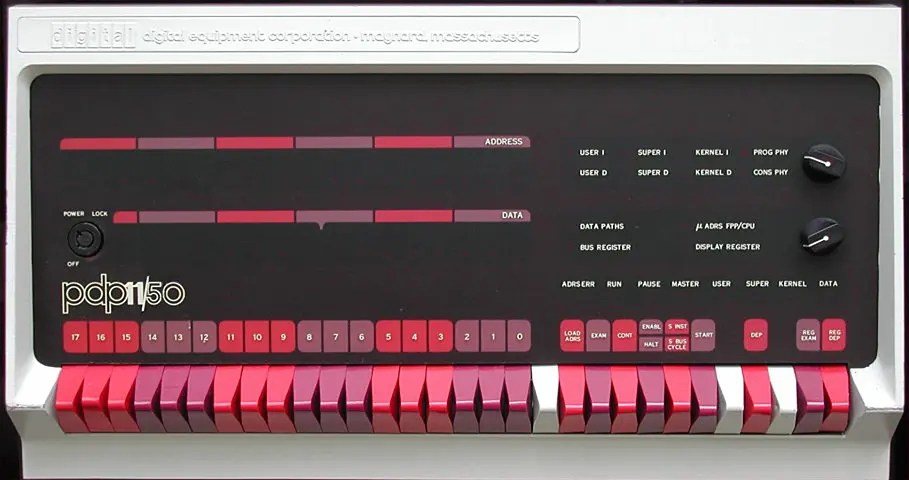

Early Interfaces — Command Lines and Control Panels

Early computers didn’t greet users with icons or buttons. Interaction meant command-line precision, punched card stacks, and binary switches. Human–machine communication was intense, physical, and deeply technical.

Users were mostly operators, engineers, and programmers — not everyday clerks or analysts. Programs were written in machine code or assembly, entered via punch cards or front-panel switches. The idea of a “user-friendly” system simply didn’t exist.

Control panels featured toggle switches, status lights, and manual overrides. Later came command line interfaces (CLIs), where users had to type exact instructions. A single typo could crash an entire batch run.

To improve efficiency, Job Control Languages (JCLs) emerged — scripts that told the computer what to do, in what order, and how to handle results. This was automation before the GUI.

Meanwhile, efforts to standardize peripheral interfaces, data formats, and operating procedures foreshadowed the shift toward interoperable systems. Symbolic abstractions like mnemonic codes pointed toward usability, but the user was still a technician, not a typist.

B55_EARLY_STRUCTURES_ALGOS

Organizing Information — Data Structures and Algorithms

While interfaces determined how people spoke to machines, the machines themselves needed internal structures to manage information once it was loaded. Early programmers discovered that efficiency depended not only on commands, but on how data was arranged in memory.

The first systematic solutions were data structures. Arrays assigned each item to a fixed slot in memory, like numbered positions in a filing cabinet — quick to access but inflexible. Linked lists, by contrast, let each record point to the next, forming a chain that could grow or shrink as needed. Together, they gave programmers reliable ways to store and retrieve data on machines with limited resources.

Processing required algorithms — step-by-step procedures for sorting, searching, or merging records. A payroll system, for example, depended on routines to alphabetize employees or locate specific names. These instructions were hard-coded in assembly or other low-level languages, demanding technical skill to write and maintain.

Later, thinkers such as Donald Knuth and Niklaus Wirth codified these ideas in textbooks and programming languages, ensuring that structures and algorithms became the foundation of computer science education and practice.

B55_EARLY_STRUCTURES_ALGOS

B55_EARLY_STRUCTURES_ALGOS

Supercluster C

The foundations of data handling took shape in practices that rarely made headlines: the choice between batch and online transactions, the push to keep records consistent, the unforgiving interfaces, and the coded routines that organized information in memory. Each solved immediate technical problems, but together they defined what it meant to process data reliably.

By the end of the 1960s, computing had become less about individual machines and more about the principles that made systems trustworthy and usable.

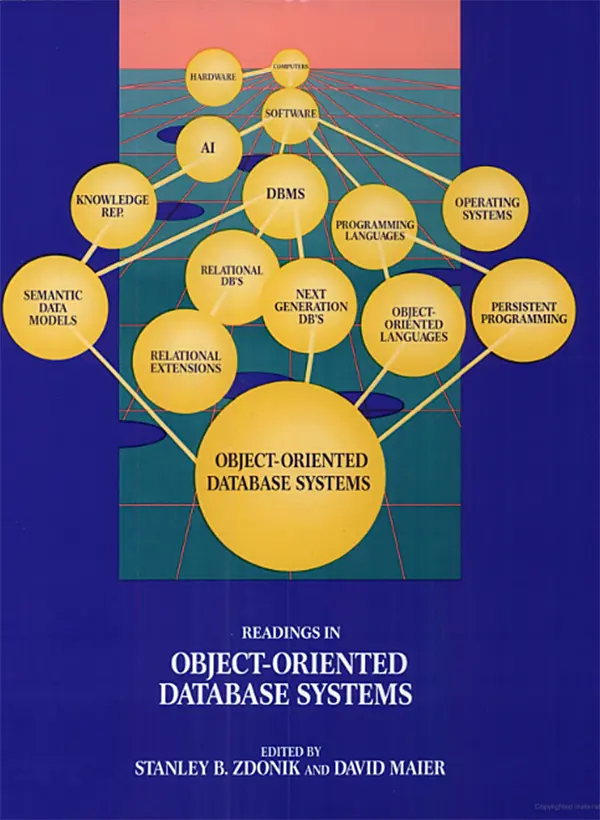

The Relational Model of Databases — Logic, Language, and Independence

Database management systems (DBMSs) emerged as tools of control and as symbols of computing’s rising managerial role. As historians Bergin and Haigh observe, they marked the moment when data itself was framed as a corporate resource. Media theorist Lev Manovich later noted how DBMSs encouraged people to treat information as structured and purposeful rather than as a random collection of records.

The first commercial packages, IBM’s IMS and Cullinane’s IDMS, made this shift tangible. They centralized large bodies of business and government data, but they remained tied to the logic of specific machines and programs. Changing a data structure often meant breaking the applications built on top. The unresolved problem was how to separate data from the hardware and code that handled it.

It was into this setting that Edgar F. Codd introduced the relational model.

C1

Codd and the Relational Revolution

The most influential challenge to existing databases came from inside IBM. In the late 1960s, as the company was consolidating its System/360 empire, one of its researchers argued that the whole foundation of data management was flawed. Edgar F. Codd proposed that information should be understood as elements in a formal system that could be reasoned about independently.

In 1970 his paper in Communications of the ACM set out the principles of a relational approach, sparking debates that would reshape both theory and practice. The relational revolution began not with hardware or code, but with an argument on paper.

C11_CODD_RELATIONAL

The Relational Breakthrough — Codd’s 1970 Paper

After serving as a WWII pilot, Edgar F. Codd returned to Oxford for a mathematics degree and later joined IBM in the U.S. In the 1960s, he developed software for the IBM 7090 mainframe — systems deeply tied to physical data structures. At IBM’s research lab in San Jose, California, he advanced a new idea: databases should represent information using only data values, not pointers, hierarchies, or physical record order.

His 1970 paper, A Relational Model of Data for Large Shared Data Banks, introduced the concept of data independence — separating logical data relationships from how data is physically stored. This allowed users to pose declarative queries without understanding the internal structure. It laid the foundation for SQL and shifted database design from hardware-bound logic to abstract, high-level models.

Although the mathematical style puzzled many readers — even IBM engineers admitted they “couldn’t make heads or tails” of it — the paper defined a new direction for database research. In 1981, Codd received the Turing Award for this contribution.

C11_CODD_RELATIONAL

C11_CODD_RELATIONAL

C12_RELATIONAL_HARDWARE

The challenge Codd addressed was deeper than hardware or software compatibility. Those could be standardized through engineering fixes and corporate agreements. The harder problem was the data itself.

Early hierarchical systems buried information inside rigid formats, making it difficult to share, extend, or reorganize. Codd’s model separated data from the programs that processed it, so that meaning could stand apart from machinery. That separation turned a technical proposal into a conceptual break — and a new horizon for how information could be structured and used.

C12_RELATIONAL_HARDWARE

IBM Context — Hardware First, Ideas Later

Codd’s work on the relational model was rooted in IBM’s dramatic hardware evolution. In the early 1960s, he worked with the IBM 7090, a transistorized mainframe widely used for scientific and business computing. Bulky by today’s standards, it nonetheless laid the groundwork for systematizing data operations.

By the late 1960s, IBM introduced the System/360, a platform that unified architectures for business and scientific applications. Its design brought direct-access storage devices (DASDs) — a crucial step toward random-access data retrieval and, eventually, relational systems.

Codd’s vision explicitly abstracted away hardware dependence, promoting logical organization over machine constraints.

C12_RELATIONAL_HARDWARE

C12_RELATIONAL_HARDWARE

C13_RELATIONAL_HONEYWELL

Yet independence could not stand on logic alone. Without addressable disks and faster CPUs, the relational model might have remained a paper exercise. The hardware context made it feasible, but IBM’s culture kept it theoretical. Bound to the System/360 business model, the company hesitated to push an idea that threatened established practices.

That left space for others to act. Honeywell’s MRDS would become the first real demonstration that relational systems could work outside the lab — and outside IBM’s control.

C13_RELATIONAL_HONEYWELL

MRDS on Multics — The First Relational System

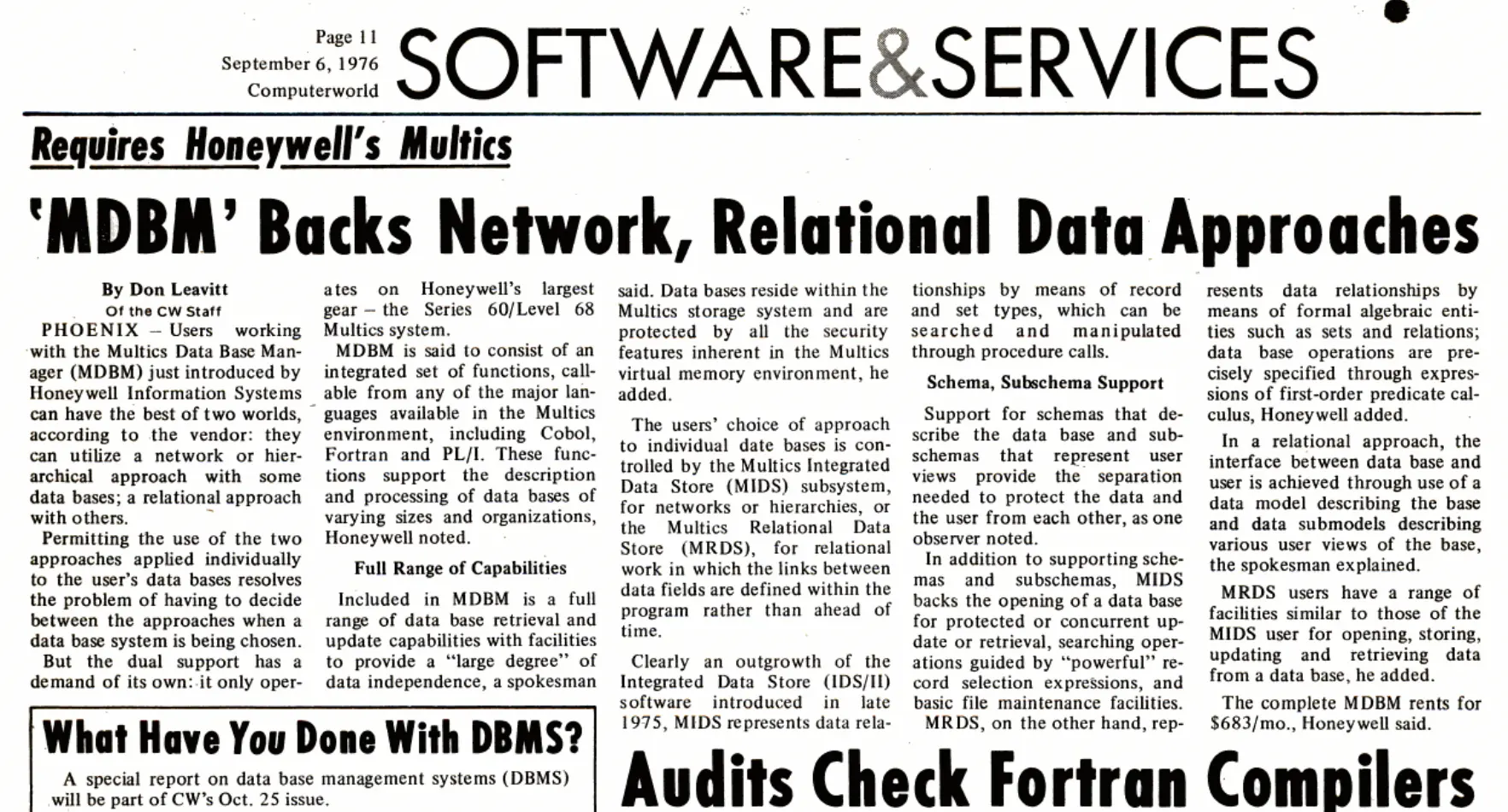

In the mid-1970s, the Multics Relational Data Store (MRDS) became the first commercial implementation of Codd’s relational model. At Honeywell’s Phoenix facility, Jim Weeldreyer and Oris Friesen led its development, drawing on Codd’s theoretical framework while working in parallel with early relational projects such as IBM’s System R and Berkeley’s Ingres.

Running on the secure, time-sharing Multics operating system, MRDS took advantage of virtual memory and was written in PL/1. Its design allowed users to access and manipulate structured data using high-level relational commands. In 1976, Honeywell marketed MRDS as part of the Multics Data Base Manager (MDBM), which also included a CODASYL-style layer.

C13_RELATIONAL_HONEYWELL

C13_RELATIONAL_HONEYWELL

Cluster C2

Punched card systems changed the scale of data processing. No longer tied to a single loom or census, they became the connective tissue of states and corporations. Civil registries, payrolls, tax records, and inventories all began to flow through standardized cards and tabulators.

Processing here was not invention but normalization — a logic absorbed into institutions until bureaucracy itself depended on it. To see how deep these habits ran, we need to rewind to older traditions of counting, where enumeration was less about machines than about ritual order.

C2

Practical Birth of Relational Databases

If MRDS proved that relational systems could exist, System R asked whether they could thrive. At IBM’s San Jose Research Lab, engineers set out to turn Codd’s theory into working code, complete with language, storage, and performance benchmarks. This shift marked the practical birth of relational databases: from abstract algebra to prototypes, and then from prototypes to industrial trials.

C21_SYSTEM_R_CONCEPT

System R — Bringing the Relational Model to Life

By the mid-1970s, IBM’s San Jose Research Lab launched a bold experiment: could Codd’s abstract relational model actually work in practice? The answer was System R, a prototype designed to show that a relational database could offer full production functionality without sacrificing performance.

The project began in 1974, led by Don Chamberlin, Ray Boyce, Irv Traiger, and Morton Astrahan — though Codd himself watched it from the side. Chamberlin later remarked, “He really didn’t get involved in the nuts and bolts of System R very much. I think he may have wanted to maintain a certain distance from it in case we didn’t get it right. Which I think he would probably say we didn’t.”

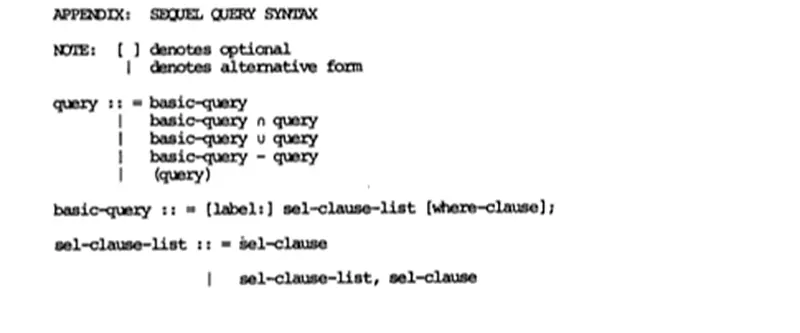

System R unfolded in stages. Phase Zero tested the feasibility of a relational language — SEQUEL (later SQL) — using early prototypes. By 1976, the team had demonstrated SQL’s potential to make databases accessible to non-programmers. Internally, the project split into two groups: the Relational Data System (RDS) focused on language and query logic, while the Research Storage System (RSS) tackled data handling, transactions, and concurrency.

System R was both a proof of concept and a development engine: it validated that a declarative approach to data could scale and laid the groundwork for a new generation of commercial database products.

C21_SYSTEM_R_CONCEPT

C21_SYSTEM_R_CONCEPT

C22_SYSTEMR_USE_CASES

Inside IBM, System R set new patterns for how software could be designed, tested, and extended. What began as an experiment in a research lab became proof that relational logic was fit for industrial practice, and from there it seeded broader trends across computing.

C22_SYSTEMR_USE_CASES

Real-World Trials — System R in Industry

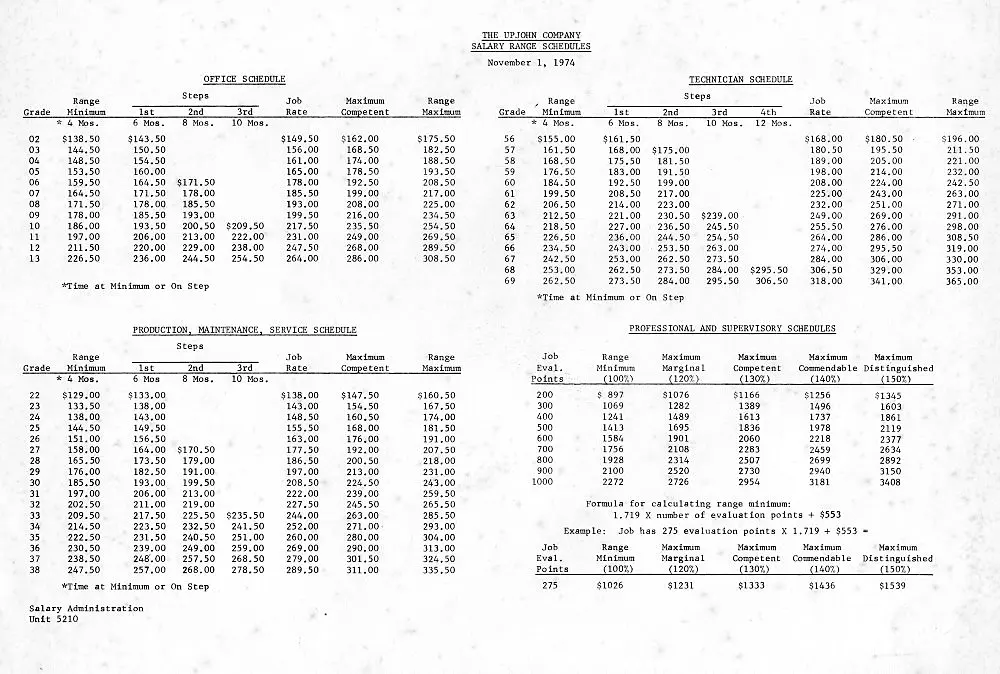

In the late 1970s, IBM partnered with real-world users to validate the relational model’s performance in live environments. The first major test came in 1977, when Pratt & Whitney, a jet engine manufacturer, used System R to manage parts and inventory.

Shortly after, Upjohn Pharmaceuticals deployed it to track clinical research data for FDA applications. While these early adopters didn’t leverage features like concurrency, locking, or transactions to their fullest, they proved relational systems could serve serious industrial use cases.

Among the growing pains IBM engineers faced was the “Halloween Problem,” discovered on October 31, 1976. Updates to a table could cause rows to re-enter a scan and be modified repeatedly — a quirk that haunted the SQL optimizer until engineers redesigned update handling. Despite these challenges, the project’s success was undeniable: it laid the groundwork for SQL/DS (1981) and the launch of IBM DB2.

C22_SYSTEMR_USE_CASES

C22_SYSTEMR_USE_CASES

Cluster C3

System R’s field trials shifted focus in database design: the crucial question became how systems shaped the work of those who dealt with them. From this point forward, the future of databases would be defined by usability as much as by engineering.

C3

Practical Birth of Relational Databases

Relational systems required a language that could express requests in everyday terms, turning complex queries into simple statements. Structured Query Language (SQL) became that medium. Compact and declarative, it allowed users to treat data access as a dialogue rather than an engineering task.

By the late 1970s, SQL was ready to move into real business systems — the point where implementation began to define its future.

C32_SQL_IMPLEMENTATIONS

Early Implementations and Common Patterns

IBM prepared SQL for enterprise deployment with SQL/DS (announced in 1981) and DB2 (released in 1983), adapting relational concepts for large-scale business use.

Meanwhile, Oracle — founded by Larry Ellison, Bob Miner, and Ed Oates — seized the opportunity to commercialize relational ideas. Its second release, Oracle V2 in 1979, was the first SQL-based RDBMS available on the market. Built not on IBM’s source code but on published research about System R, it proved that relational design could be replicated and sold outside the labs.

SQL also gained traction in universities, where it was integrated into teaching tools and research systems. Students and researchers learned to write short, declarative statements — a style of interaction that began to define how users expected databases to work.

C32_SQL_IMPLEMENTATIONS

C32_SQL_IMPLEMENTATIONS

C33_SQL_STANDARDIZATION

The first commercial releases established SQL as a practical foundation for products and research. Multiple vendors and universities built their own versions, which demonstrated its flexibility but also risked fragmentation. Without coordination, SQL could have splintered into dialects tied to individual machines. The next step was to create a shared standard that would hold the field together.

C33_SQL_STANDARDIZATION

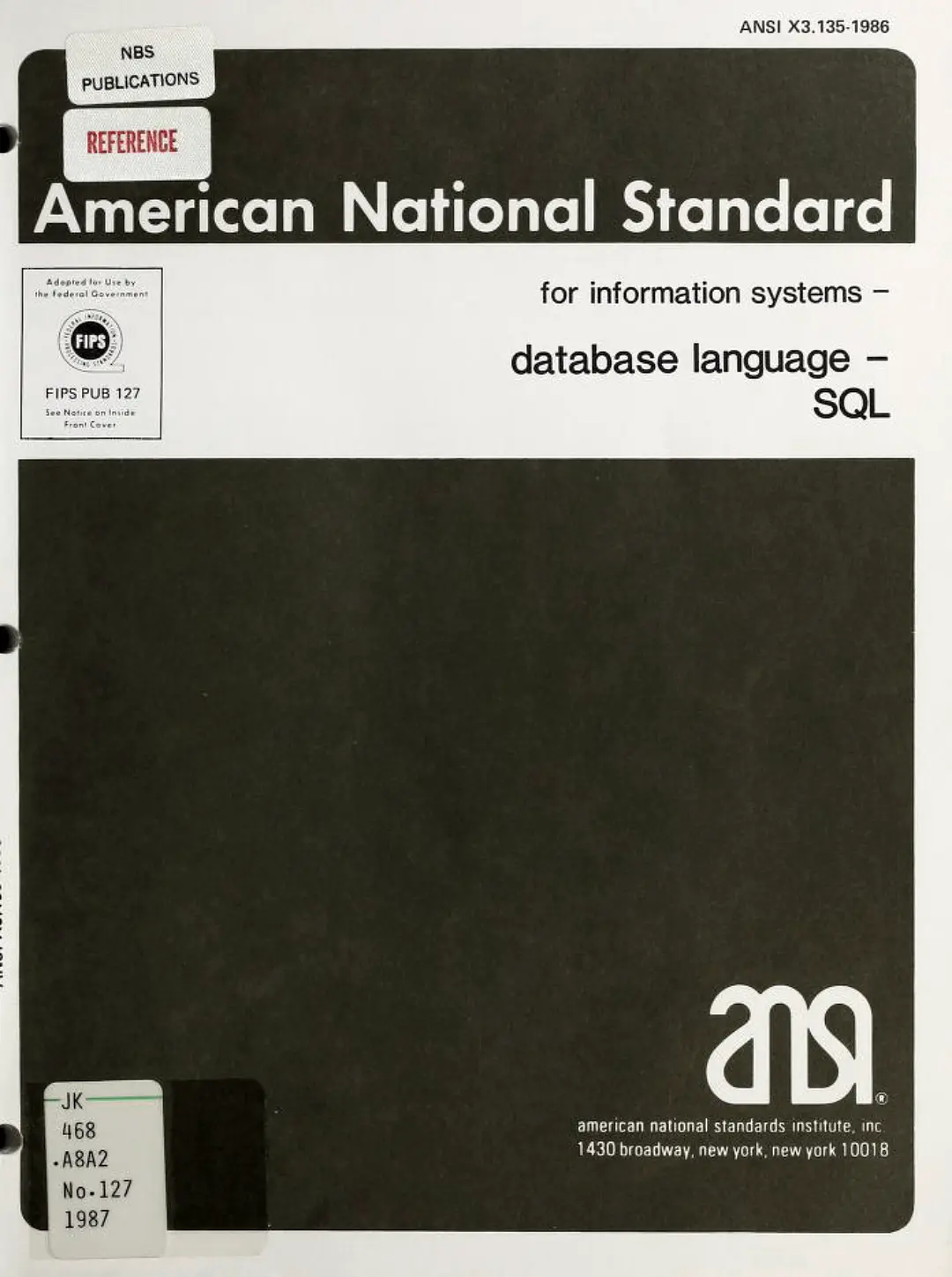

SQL Becomes the Standard

In 1986, the American National Standards Institute (ANSI) ratified SQL as the official database language, followed by the International Organization for Standardization (ISO) in 1987. This recognition established SQL as a common interface across vendors and platforms, fixing its role at the core of relational computing.